AI-100 Practice Questions

Practice questions and answers for Microsoft exam AI-100, Designing and Implementing an Azure AI Solution (new version):

NEW QUESTION 1

You are developing a Computer Vision application.

You plan to use a workflow that will load data from an on-premises database to Azure Blob storage, and then connect to an Azure Machine Learning service.

What should you use to orchestrate the workflow?

- A. Azure Kubernetes Service (AKS)

- B. Azure Pipelines

- C. Azure Data Factory

- D. Azure Container Instances

Answer: C

Explanation:

With Azure Data Factory you can use workflows to orchestrate data integration and data transformation processes at scale.

Build data integration, and easily transform and integrate big data processing and machine learning with the visual interface.

References:

https://azure.microsoft.com/en-us/services/data-factory/

NEW QUESTION 2

You are designing a solution that will ingest temperature data from IoT devices, calculate the average temperature, and then take action based on the aggregated data. The solution must meet the following requirements:

• Minimize the amount of uploaded data.

• Take action based on the aggregated data as quickly as possible.

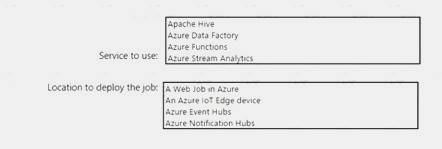

What should you include in the solution? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

Box 1: Azure Functions

Azure Functions is a serverless service that hosts functions (small pieces of code) and suits scenarios such as event-driven applications.

A general rule is hard to give because the right choice depends on your requirements. To analyze a data stream, consider Azure Stream Analytics; to implement a serverless event-driven or timer-based application, consider Azure Functions or Logic Apps.

Note: Azure IoT Edge allows you to deploy complex event processing, machine learning, image recognition, and other high value AI without writing it in-house. Azure services like Azure Functions, Azure Stream Analytics, and Azure Machine Learning can all be run on-premises via Azure IoT Edge.

Box 2: An Azure IoT Edge device

Azure IoT Edge moves cloud analytics and custom business logic to devices so that your organization can focus on business insights instead of data management.
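The idea of aggregating on the device before uploading can be sketched in a few lines of Python. This is only an illustration of the logic that might run inside a function on an edge device; the names `windowed_averages` and `should_act`, the window size, and the threshold are assumptions made for the example and do not come from any Azure SDK.

```python
from statistics import mean
from typing import Iterable, Iterator


def windowed_averages(readings: Iterable[float], window_size: int) -> Iterator[float]:
    """Yield one average per full window, so only aggregates need uploading."""
    window = []
    for value in readings:
        window.append(value)
        if len(window) == window_size:
            yield mean(window)
            window.clear()


def should_act(average: float, threshold: float) -> bool:
    """Hypothetical local rule: act when the average exceeds a threshold."""
    return average > threshold


if __name__ == "__main__":
    raw = [20.0, 21.0, 22.0, 30.0, 31.0, 32.0]
    for avg in windowed_averages(raw, window_size=3):
        # Six raw readings become two uploaded values; the decision is local.
        print(avg, should_act(avg, threshold=25.0))
```

Because the decision is made where the data is produced, the device does not wait on a round trip to the cloud, and only one value per window leaves the device.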

References:

https://docs.microsoft.com/en-us/azure/iot-edge/about-iot-edge

NEW QUESTION 3

Your company plans to deploy an AI solution that processes IoT data in real-time.

You need to recommend a solution for the planned deployment that meets the following requirements:

• Sustain up to 50 Mbps of events without throttling.

• Retain data for 60 days.

What should you recommend?

- A. Apache Kafka

- B. Microsoft Azure IoT Hub

- C. Microsoft Azure Data Factory

- D. Microsoft Azure Machine Learning

Answer: A

Explanation:

Apache Kafka is an open-source distributed streaming platform that can be used to build real-time streaming data pipelines and applications.
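To get a feel for what the two requirements imply together, the rough calculation below estimates how much data would be retained. It assumes that "Mbps" means megabits per second, that the rate is sustained for the whole period, and that replication and compression are ignored; the function name `retained_bytes` is made up for this sketch.

```python
def retained_bytes(rate_megabits_per_s: float, retention_days: int) -> float:
    """Bytes accumulated at a constant ingest rate over the retention period."""
    bytes_per_second = rate_megabits_per_s * 1_000_000 / 8
    seconds = retention_days * 24 * 60 * 60
    return bytes_per_second * seconds


if __name__ == "__main__":
    total = retained_bytes(50, 60)
    # Report in decimal terabytes (1 TB = 10**12 bytes).
    print(f"{total / 1e12:.1f} TB")
```

Under these assumptions the worst case is about 32.4 TB of retained events, which is the scale the chosen platform has to hold for 60 days.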

References:

https://docs.microsoft.com/en-us/azure/hdinsight/kafka/apache-kafka-introduction

NEW QUESTION 4

Your company has a data team of Transact-SQL experts.

You plan to ingest data from multiple sources into Azure Event Hubs.

You need to recommend which technology the data team should use to move and query data from Event Hubs to Azure Storage. The solution must leverage the data team’s existing skills.

What is the best recommendation to achieve the goal? More than one answer choice may achieve the goal.

- A. Azure Notification Hubs

- B. Azure Event Grid

- C. Apache Kafka streams

- D. Azure Stream Analytics

Answer: B

Explanation:

Event Hubs Capture is the easiest way to automatically deliver streamed data in Event Hubs to an Azure Blob storage or Azure Data Lake store. You can subsequently process and deliver the data to any other storage destinations of your choice, such as SQL Data Warehouse or Cosmos DB.

You can capture data from your event hub into a SQL data warehouse by using an Azure function triggered by Event Grid.

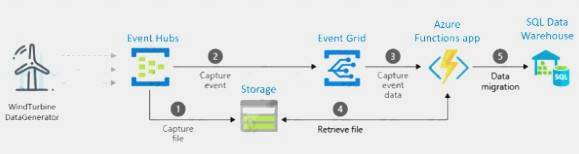

Example:

First, you create an event hub with the Capture feature enabled and set an Azure blob storage as the destination. Data generated by WindTurbineGenerator is streamed into the event hub and is automatically captured into Azure Storage as Avro files.

Next, you create an Azure Event Grid subscription with the Event Hubs namespace as its source and the Azure Function endpoint as its destination.

Whenever a new Avro file is delivered to the Azure Storage blob by the Event Hubs Capture feature, Event Grid notifies the Azure Function with the blob URI. The Function then migrates data from the blob to a SQL data warehouse.
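The notification step can be sketched as a small handler that pulls the blob URI out of the event. The payload below is a hand-written sample: the event type string and the `fileUrl` field are modeled on the Event Hubs Capture event as best understood here and should be checked against the current Event Grid schema documentation; `captured_blob_url` is a hypothetical helper, not part of any SDK.

```python
import json
from typing import Optional

# Hand-written sample payload; field names are assumptions to verify.
SAMPLE_EVENT = """
{
  "eventType": "Microsoft.EventHub.CaptureFileCreated",
  "subject": "eventhubs/example",
  "data": {
    "fileUrl": "https://example.blob.core.windows.net/capture/file.avro",
    "fileType": "AzureBlockBlob",
    "eventCount": 10
  }
}
"""


def captured_blob_url(event_json: str) -> Optional[str]:
    """Return the captured file's URL, or None for unrelated event types."""
    event = json.loads(event_json)
    if event.get("eventType") != "Microsoft.EventHub.CaptureFileCreated":
        return None
    return event.get("data", {}).get("fileUrl")


if __name__ == "__main__":
    print(captured_blob_url(SAMPLE_EVENT))
```

A real function would then read the Avro file at that URL and load its rows into the warehouse; that part is omitted here.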

References:

https://docs.microsoft.com/en-us/azure/event-hubs/store-captured-data-data-warehouse

NEW QUESTION 5

You are designing an AI solution in Azure that will perform image classification.

You need to identify which processing platform will provide you with the ability to update the logic over time. The solution must have the lowest latency for inferencing without having to batch.

Which compute target should you identify?

- A. graphics processing units (GPUs)

- B. field-programmable gate arrays (FPGAs)

- C. central processing units (CPUs)

- D. application-specific integrated circuits (ASICs)

Answer: B

Explanation:

FPGAs, such as those available on Azure, provide performance close to ASICs. They are also flexible and reconfigurable over time, to implement new logic.

NEW QUESTION 6

You need to design the Butler chatbot solution to meet the technical requirements.

What is the best channel and pricing tier to use? More than one answer choice may achieve the goal. Select the BEST answer.

- A. standard channels that use the S1 pricing tier

- B. standard channels that use the Free pricing tier

- C. premium channels that use the Free pricing tier

- D. premium channels that use the S1 pricing tier

Answer: D

Explanation:

References:

https://azure.microsoft.com/en-in/pricing/details/bot-service/

NEW QUESTION 7

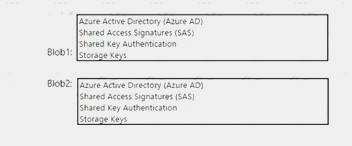

You plan to deploy an application that will perform image recognition. The application will store image data in two Azure Blob storage stores named Blob1 and Blob2. You need to recommend a security solution that meets the following requirements:

•Access to Blob1 must be controlled by using a role.

•Access to Blob2 must be time-limited and constrained to specific operations.

What should you recommend using to control access to each blob store? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

References:

https://docs.microsoft.com/en-us/azure/storage/common/storage-auth

NEW QUESTION 8

Your company has a data team of Scala and R experts.

You plan to ingest data from multiple Apache Kafka streams.

You need to recommend a processing technology to broker messages at scale from the Kafka streams to Azure Storage.

What should you recommend?

- A. Azure Databricks

- B. Azure Functions

- C. Azure HDInsight with Apache Storm

- D. Azure HDInsight with Microsoft Machine Learning Server

Answer: C

Explanation:

References:

https://docs.microsoft.com/en-us/azure/hdinsight/hdinsight-streaming-at-scale-overview?toc=https%3A%2F%2

NEW QUESTION 9

You are designing an AI solution that will analyze millions of pictures.

You need to recommend a solution for storing the pictures. The solution must minimize costs. Which storage solution should you recommend?

- A. an Azure Data Lake store

- B. Azure File Storage

- C. Azure Blob storage

- D. Azure Table storage

Answer: C

Explanation:

Data Lake is somewhat more expensive, although the two are priced in a similar range. Blob storage offers more pricing options, depending on factors such as how frequently you need to access your data (hot vs. cool storage).

References:

http://blog.pragmaticworks.com/azure-data-lake-vs-azure-blob-storage-in-data-warehousing

NEW QUESTION 10

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are deploying an Azure Machine Learning model to an Azure Kubernetes Service (AKS) container. You need to monitor the accuracy of each run of the model.

Solution: You modify the scoring file. Does this meet the goal?

- A. Yes

- B. No

Answer: B

NEW QUESTION 11

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have Azure IoT Edge devices that generate streaming data.

On the devices, you need to detect anomalies in the data by using Azure Machine Learning models. Once an anomaly is detected, the devices must add information about the anomaly to the Azure IoT Hub stream. Solution: You deploy Azure Functions as an IoT Edge module.

Does this meet the goal?

- A. Yes

- B. No

Answer: B

Explanation:

Instead, use Azure Stream Analytics and the REST API.

Note: Available in both the cloud and Azure IoT Edge, Azure Stream Analytics offers built-in machine learning based anomaly detection capabilities that can be used to monitor the two most commonly occurring anomalies: temporary and persistent.

Stream Analytics supports user-defined functions, via REST API, that call out to Azure Machine Learning endpoints.
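As a rough illustration of what detecting a temporary anomaly (a spike or dip) in a stream means, the sketch below flags values that lie far from the recent average. It is a plain rolling z-score written for this example, not the algorithm Stream Analytics uses; `detect_spikes`, the history size, and the threshold are all assumptions.

```python
from collections import deque
from statistics import mean, pstdev
from typing import List, Sequence


def detect_spikes(values: Sequence[float], history_size: int = 5,
                  threshold: float = 3.0) -> List[int]:
    """Return indexes of values far from the mean of the preceding window."""
    history = deque(maxlen=history_size)
    anomalies = []
    for index, value in enumerate(values):
        if len(history) == history_size:
            mu = mean(history)
            sigma = pstdev(history)
            # Skip scoring when the window is flat to avoid dividing by zero.
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                anomalies.append(index)
        history.append(value)
    return anomalies


if __name__ == "__main__":
    temperatures = [20.0, 20.5, 19.5, 20.0, 20.5, 35.0, 20.0]
    print(detect_spikes(temperatures))
```

A persistent anomaly, such as a lasting shift in level, needs a different test, because a rolling window quickly absorbs the new level into its baseline.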

References:

https://docs.microsoft.com/en-us/azure/stream-analytics/stream-analytics-machine-learning-anomaly-detection

NEW QUESTION 12

You are designing an AI application that will perform real-time processing by using Microsoft Azure Stream Analytics.

You need to identify the valid outputs of a Stream Analytics job.

What are three possible outputs? Each correct answer presents a complete solution. NOTE: Each correct selection is worth one point.

- A. a Hive table in Azure HDInsight

- B. Azure SQL Database

- C. Azure Cosmos DB

- D. Azure Blob storage

- E. Azure Redis Cache

Answer: BCD

Explanation:

References:

https://docs.microsoft.com/en-us/azure/stream-analytics/stream-analytics-define-outputs

NEW QUESTION 13

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are developing an application that uses an Azure Kubernetes Service (AKS) cluster. You are troubleshooting a node issue.

You need to connect to an AKS node by using SSH.

Solution: You add an SSH key to the node, and then you create an SSH connection. Does this meet the goal?

- A. Yes

- B. No

Answer: A

Explanation:

By default, SSH keys are generated when you create an AKS cluster. If you did not specify your own SSH keys when you created your AKS cluster, add your public SSH keys to the AKS nodes.

You also need to create an SSH connection to the AKS node.

References:

https://docs.microsoft.com/en-us/azure/aks/ssh

NEW QUESTION 14

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You create several AI models in Azure Machine Learning Studio.

You deploy the models to a production environment.

You need to monitor the compute performance of the models. Solution: You enable Model data collection.

Does this meet the goal?

- A. Yes

- B. No

Answer: A

Explanation:

You need to enable Model data collection.

References:

https://docs.microsoft.com/en-us/azure/machine-learning/service/how-to-enable-data-collection

NEW QUESTION 15

You plan to deploy an AI solution that tracks the behavior of 10 custom mobile apps. Each mobile app has several thousand users. You need to recommend a solution for real-time data ingestion for the data originating from the mobile app users.

Which Microsoft Azure service should you include in the recommendation?

- A. Azure Event Hubs

- B. Azure Service Bus queues

- C. Azure Service Bus topics and subscriptions

- D. Apache Storm on Azure HDInsight

Answer: A

Explanation:

References:

https://docs.microsoft.com/en-in/azure/event-hubs/event-hubs-about

NEW QUESTION 16

You have an intelligent edge solution that processes data and outputs the data to an Azure Cosmos DB account that uses the SQL API.

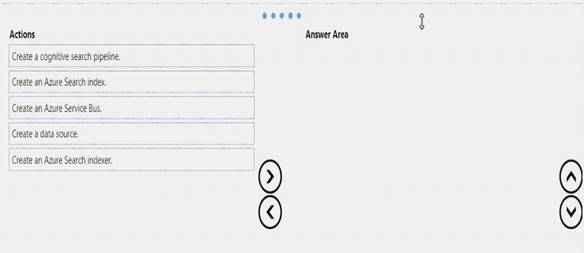

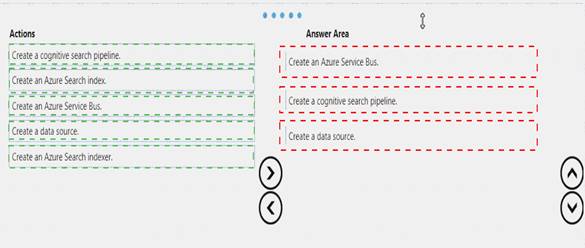

You need to ensure that you can perform full text searches of the data.

Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

NEW QUESTION 17

You deploy an infrastructure for a big data workload.

You need to run Azure HDInsight and Microsoft Machine Learning Server. You plan to set the RevoScaleR compute contexts to run rx function calls in parallel.

What are three compute contexts that you can use for Machine Learning Server? Each correct answer presents a complete solution.

NOTE: Each correct selection is worth one point.

- A. SQL

- B. Spark

- C. local parallel

- D. HBase

- E. local sequential

Answer: ABC

Explanation:

Remote computing is available for specific data sources on selected platforms. The following tables document the supported combinations.

RxInSqlServer, sqlserver: Remote compute context. Target server is a single database node (SQL Server 2016 R Services or SQL Server 2017 Machine Learning Services). Computation is parallel, but not distributed.

RxSpark, spark: Remote compute context. Target is a Spark cluster on Hadoop.

RxLocalParallel, localpar: Compute context is often used to enable controlled, distributed computations relying on instructions you provide rather than a built-in scheduler on Hadoop. You can use compute context for manual distributed computing.

References:

https://docs.microsoft.com/en-us/machine-learning-server/r/concept-what-is-compute-context

NEW QUESTION 18

You deploy an application that performs sentiment analysis on the data stored in Azure Cosmos DB.

Recently, you loaded a large amount of data to the database. The data was for a customer named Contoso, Ltd. You discover that queries for the Contoso data are slow to complete, and the queries slow the entire application.

You need to reduce the amount of time it takes for the queries to complete. The solution must minimize costs. What is the best way to achieve the goal? More than one answer choice may achieve the goal. Select the BEST answer.

- A. Change the request units.

- B. Change the partitioning strategy.

- C. Change the transaction isolation level.

- D. Migrate the data to the Cosmos DB database.

Answer: B

Explanation:

References:

https://docs.microsoft.com/en-us/azure/architecture/best-practices/data-partitioning
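Why a partitioning change can help is easier to see with a toy model. The sketch below hashes partition keys into a fixed number of buckets and compares a key based on the customer name alone with a hypothetical composite key. The hashing scheme, the bucket count of 4, and the key formats are assumptions for illustration only and do not reflect how Cosmos DB assigns partitions internally.

```python
import hashlib
from collections import Counter
from typing import Iterable


def partition_for(key: str, partition_count: int) -> int:
    """Map a partition key to a bucket with a stable hash (illustrative only)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % partition_count


def distribution(keys: Iterable[str], partition_count: int) -> Counter:
    """Count how many items land in each bucket."""
    return Counter(partition_for(key, partition_count) for key in keys)


# 1,000 items that all share one key value end up in a single bucket.
by_customer = distribution(["Contoso"] * 1000, 4)

# A hypothetical composite key (customer plus item number) spreads them out.
by_customer_and_item = distribution((f"Contoso-{i}" for i in range(1000)), 4)

if __name__ == "__main__":
    print(sorted(by_customer.items()))
    print(sorted(by_customer_and_item.items()))
```

With the single-value key, every Contoso request hits the same bucket, which is the hot-partition pattern described in the question. The composite key removes the hot spot but makes queries that need all of one customer's data fan out across buckets, so the right key depends on the query pattern.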

NEW QUESTION 19

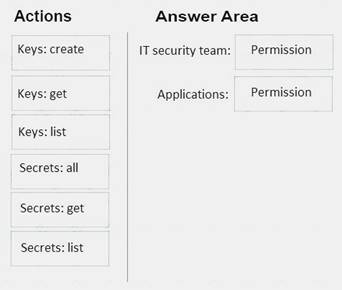

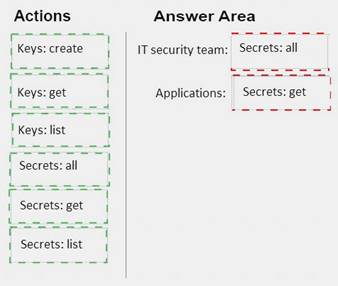

You use an Azure key vault to store credentials for several Azure Machine Learning applications. You need to configure the key vault to meet the following requirements:

• Ensure that the IT security team can add new passwords and periodically change the passwords.

• Ensure that the applications can securely retrieve the passwords for the applications.

• Use the principle of least privilege.

Which permissions should you grant? To answer, drag the appropriate permissions to the correct targets. Each permission may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

NEW QUESTION 20

You need to build a pipeline for an Azure Machine Learning experiment.

In which order should you perform the actions? To answer, move all actions from the list of actions to the answer area and arrange them in the correct order.

- A. Mastered

- B. Not Mastered

Answer: A

Explanation:

References:

https://azure.microsoft.com/en-in/blog/experimentation-using-azure-machine-learning/

https://docs.microsoft.com/en-us/azure/machine-learning/studio-module-reference/machine-learning-modules
