Up To The Minute SCS-C01 Vce 2021

We provide Amazon-Web-Services SCS-C01 practice simulations, which are the best way to clear the SCS-C01 test and get certified as Amazon-Web-Services AWS Certified Security - Specialty. The SCS-C01 Questions & Answers cover all the knowledge points of the real SCS-C01 exam. Crack your Amazon-Web-Services SCS-C01 exam with the latest dumps, guaranteed!

Online Amazon-Web-Services SCS-C01 free dumps demo Below:

NEW QUESTION 1

You need to inspect the running processes on an EC2 instance that may have a security issue. How can you achieve this in the easiest way possible? You also need to ensure that the process does not interfere with the continuous running of the instance.

Please select:

- A. Use AWS CloudTrail to record the processes running on the server to an S3 bucket.

- B. Use AWS CloudWatch to record the processes running on the server.

- C. Use the SSM Run command to send the list of running processes information to an S3 bucket.

- D. Use AWS Config to see the changed process information on the server

Answer: C

Explanation:

The SSM Run command can be used to send OS specific commands to an Instance. Here you can check and see the running processes on an instance and then send the output to an S3 bucket.
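
As a minimal sketch of the approach described above, the request for SSM Run Command could be assembled as below. The instance ID, bucket name and key prefix are hypothetical placeholders, and the sketch only builds the request; it assumes the parameters would later be passed to the `send_command` call of a boto3 SSM client.

```python
import json


def build_process_listing_command(instance_id: str, bucket: str,
                                  prefix: str = "process-audit") -> dict:
    """Build keyword arguments for the SSM SendCommand API.

    AWS-RunShellScript is the AWS-provided document for running shell
    commands on Linux instances; "ps aux" lists the running processes.
    """
    return {
        "InstanceIds": [instance_id],
        "DocumentName": "AWS-RunShellScript",
        "Parameters": {"commands": ["ps aux"]},
        # Command output is written to this bucket instead of being read live.
        "OutputS3BucketName": bucket,
        "OutputS3KeyPrefix": prefix,
    }


# Hypothetical identifiers, for illustration only.
request = build_process_listing_command("i-0123456789abcdef0", "example-audit-bucket")
print(json.dumps(request, indent=2))

# With boto3 installed and credentials configured, this could be sent as:
#   boto3.client("ssm").send_command(**request)
```

Because the command is executed by the SSM agent, the instance keeps running and no interactive login is needed.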

Option A is invalid because CloudTrail records API activity and cannot be used to record running processes.

Option B is invalid because CloudWatch is a logging and metrics service and cannot be used to record running processes.

Option D is invalid because AWS Config is a configuration service and cannot be used to record running processes.

For more information on the Systems Manager Run command, please visit the following URL:

https://docs.aws.amazon.com/systems-manager/latest/userguide/execute-remote-commands.html

The correct answer is: Use the SSM Run command to send the list of running processes information to an S3 bucket. Submit your Feedback/Queries to our Experts

NEW QUESTION 2

The Security Engineer is managing a web application that processes highly sensitive personal information. The application runs on Amazon EC2. The application has strict compliance requirements, which instruct that all incoming traffic to the application is protected from common web exploits and that all outgoing traffic from the EC2 instances is restricted to specific whitelisted URLs.

Which architecture should the Security Engineer use to meet these requirements?

- A. Use AWS Shield to scan inbound traffic for web exploits. Use VPC Flow Logs and AWS Lambda to restrict egress traffic to specific whitelisted URLs.

- B. Use AWS Shield to scan inbound traffic for web exploits. Use a third-party AWS Marketplace solution to restrict egress traffic to specific whitelisted URLs.

- C. Use AWS WAF to scan inbound traffic for web exploits. Use VPC Flow Logs and AWS Lambda to restrict egress traffic to specific whitelisted URLs.

- D. Use AWS WAF to scan inbound traffic for web exploits. Use a third-party AWS Marketplace solution to restrict egress traffic to specific whitelisted URLs.

Answer: D

NEW QUESTION 3

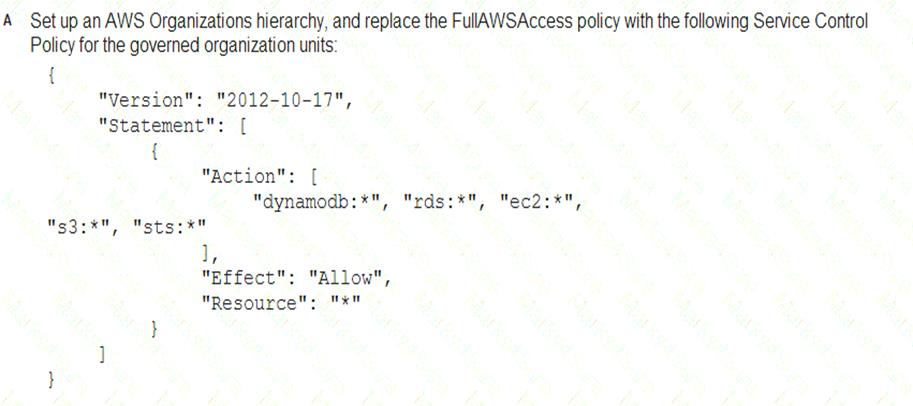

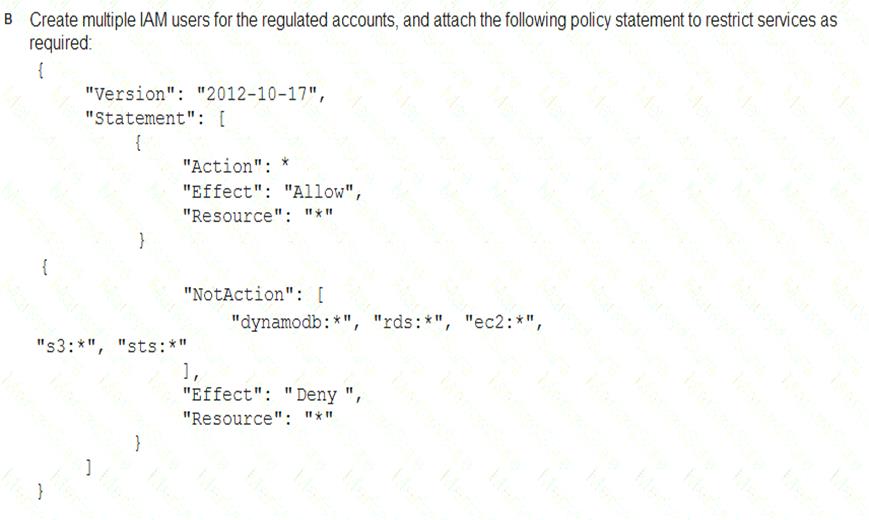

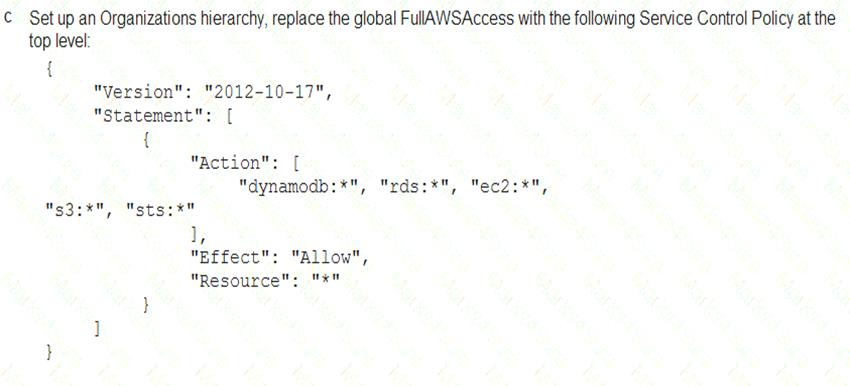

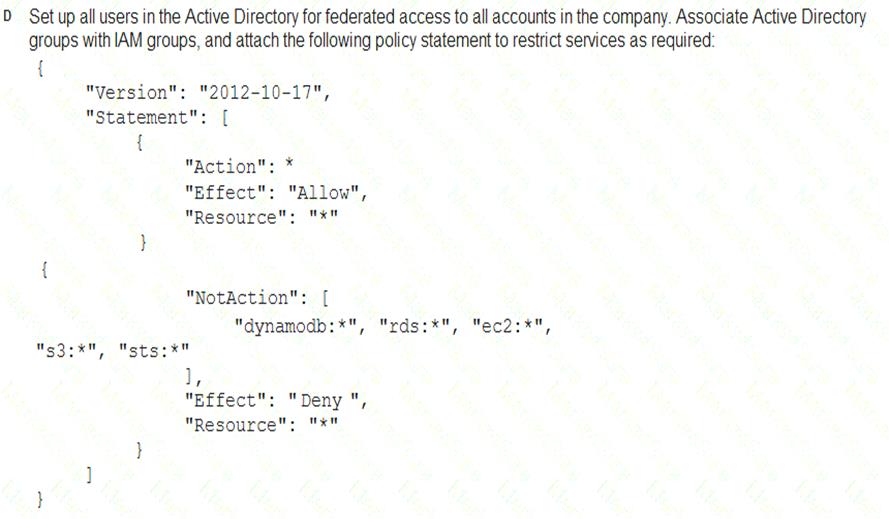

A Security Engineer must enforce the use of only Amazon EC2, Amazon S3, Amazon RDS, Amazon DynamoDB, and AWS STS in specific accounts.

What is a scalable and efficient approach to meet this requirement?

- A. Option A

- B. Option B

- C. Option C

- D. Option D

Answer: A

NEW QUESTION 4

Which of the following are the responsibility of the customer? Choose 2 answers from the options given below.

Please select:

- A. Management of the Edge locations

- B. Encryption of data at rest

- C. Protection of data in transit

- D. Decommissioning of old storage devices

Answer: BC

Explanation:

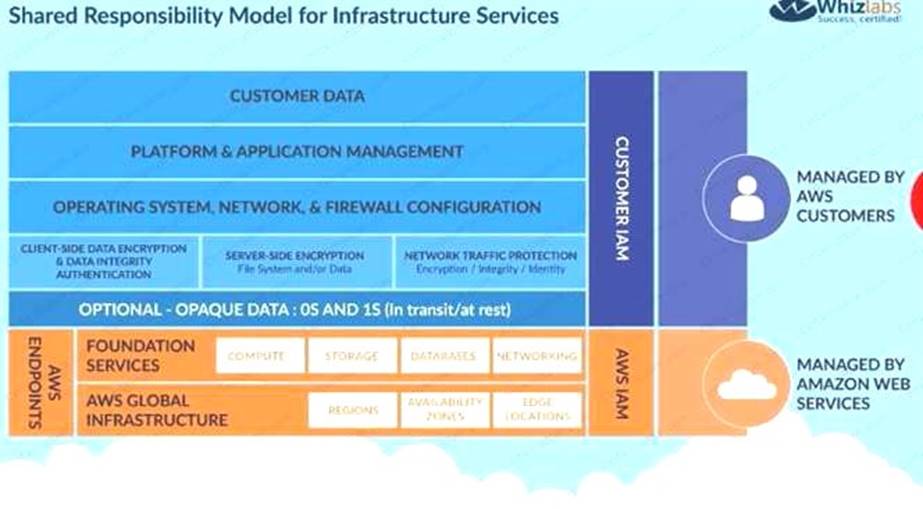

Below is the snapshot of the Shared Responsibility Model

For more information on AWS security best practices, please refer to the URL below: awsstatic.com/whitepapers/Security/AWS Practices.

The correct answers are: Encryption of data at rest, Protection of data in transit

NEW QUESTION 5

You have a website that is sitting behind AWS CloudFront. You need to protect the website against threats such as SQL injection and cross-site scripting attacks. Which of the following services can help in such a scenario?

Please select:

- A. AWS Trusted Advisor

- B. AWS WAF

- C. AWS Inspector

- D. AWS Config

Answer: B

Explanation:

The AWS Documentation mentions the following

AWS WAF is a web application firewall that helps detect and block malicious web requests targeted at your web applications. AWS WAF allows you to create rules that can help protect against common web exploits like SQL injection and cross-site scripting. With AWS WAF you first identify the resource (either an Amazon CloudFront distribution or an Application Load Balancer) that you need to protect.
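
To make the idea of a WAF rule concrete, here is a hedged sketch of a single rule that blocks requests whose query string looks like SQL injection. It assumes the newer WAFv2 rule structure rather than the classic API that was current when this explanation was written, and the rule name is a hypothetical placeholder.

```python
def build_sqli_block_rule(name: str, priority: int) -> dict:
    """Build one WAFv2-style rule that blocks SQL injection in the query string."""
    return {
        "Name": name,
        "Priority": priority,
        "Statement": {
            "SqliMatchStatement": {
                # Inspect only the query string in this sketch.
                "FieldToMatch": {"QueryString": {}},
                # Decode URL encoding before matching so encoded payloads are caught.
                "TextTransformations": [{"Priority": 0, "Type": "URL_DECODE"}],
            }
        },
        "Action": {"Block": {}},
        "VisibilityConfig": {
            "SampledRequestsEnabled": True,
            "CloudWatchMetricsEnabled": True,
            "MetricName": name,
        },
    }


# Hypothetical rule name, for illustration only.
sqli_rule = build_sqli_block_rule("block-sqli-query-string", priority=0)
```

A rule like this would be one entry in the rule list of a web ACL, which is then associated with the CloudFront distribution.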

Option A is invalid because Trusted Advisor only gives advice on how to improve the security of your AWS account; it does not protect against the threats mentioned in the question.

Option C is invalid because Inspector can be used to scan EC2 instances for vulnerabilities but does not protect against the threats mentioned in the question.

Option D is invalid because AWS Config can be used to check configuration changes but does not protect against the threats mentioned in the question.

For more information on AWS WAF, please visit the following URL: https://aws.amazon.com/waf/details/

The correct answer is: AWS WAF

NEW QUESTION 6

Development teams in your organization use S3 buckets to store the log files for various applications hosted in development environments in AWS. The developers want to keep the logs for one month for troubleshooting purposes, and then purge the logs. What feature will enable this requirement?

Please select:

- A. Adding a bucket policy on the S3 bucket.

- B. Configuring lifecycle configuration rules on the S3 bucket.

- C. Creating an IAM policy for the S3 bucket.

- D. Enabling CORS on the S3 bucket.

Answer: B

Explanation:

The AWS Documentation mentions the following on lifecycle policies

Lifecycle configuration enables you to specify the lifecycle management of objects in a bucket. The configuration is a set of one or more rules, where each rule defines an action for Amazon S3 to apply to a group of objects. These actions can be classified as follows:

Transition actions - In which you define when objects transition to another storage class. For example, you may choose to transition objects to the STANDARD_IA (IA, for infrequent access) storage class 30 days after creation, or archive objects to the GLACIER storage class one year after creation.

Expiration actions - In which you specify when the objects expire. Then Amazon S3 deletes the expired objects on your behalf.
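
An expiration action for the one-month retention in this question could be sketched as the lifecycle configuration below. The rule ID and the `logs/` prefix are hypothetical, and the sketch assumes the dictionary would be passed as the `LifecycleConfiguration` argument of boto3's `put_bucket_lifecycle_configuration`.

```python
# Lifecycle configuration with a single expiration rule.
lifecycle_configuration = {
    "Rules": [
        {
            "ID": "expire-dev-logs-after-30-days",  # hypothetical rule name
            "Filter": {"Prefix": "logs/"},          # hypothetical key prefix
            "Status": "Enabled",
            # Amazon S3 deletes matching objects 30 days after creation.
            "Expiration": {"Days": 30},
        }
    ]
}

# With boto3 installed and credentials configured, this could be applied as:
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="example-dev-logs", LifecycleConfiguration=lifecycle_configuration)
```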

Options A and C are invalid because neither bucket policies nor IAM policies can control the purging of logs.

Option D is invalid because CORS is used for accessing objects across domains, not for purging logs.

For more information on AWS S3 lifecycle policies, please visit the following URL:

com/AmazonS3/latest/d<

The correct answer is: Configuring lifecycle configuration rules on the S3 bucket.

NEW QUESTION 7

The Accounting department at Example Corp. has made a decision to hire a third-party firm, AnyCompany, to monitor Example Corp.'s AWS account to help optimize costs.

The Security Engineer for Example Corp. has been tasked with providing AnyCompany with access to the required Example Corp. AWS resources. The Engineer has created an IAM role and granted permission to AnyCompany's AWS account to assume this role.

When customers contact AnyCompany, they provide their role ARN for validation. The Engineer is concerned that one of AnyCompany's other customers might deduce Example Corp.'s role ARN and potentially compromise the company's account.

What steps should the Engineer perform to prevent this outcome?

- A. Create an IAM user and generate a set of long-term credentials. Provide the credentials to AnyCompany. Monitor access in IAM access advisor and plan to rotate credentials on a recurring basis.

- B. Request an external ID from AnyCompany and add a condition with sts:ExternalId to the role's trust policy.

- C. Require two-factor authentication by adding a condition to the role's trust policy with aws:MultiFactorAuthPresent.

- D. Request an IP range from AnyCompany and add a condition with aws:SourceIp to the role's trust policy.

Answer: B
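
For illustration, a role trust policy that uses the external ID condition could be sketched as follows. The account ID and the external ID value are hypothetical placeholders; in practice the external ID would be supplied by AnyCompany.

```python
import json


def build_trust_policy(third_party_account_id: str, external_id: str) -> dict:
    """Trust policy that lets another account assume the role only when it
    presents the agreed external ID."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": f"arn:aws:iam::{third_party_account_id}:root"},
                "Action": "sts:AssumeRole",
                # Knowing the role ARN alone is not enough without this value.
                "Condition": {"StringEquals": {"sts:ExternalId": external_id}},
            }
        ],
    }


# Hypothetical values, for illustration only.
trust_policy = build_trust_policy("111122223333", "example-corp-unique-id")
print(json.dumps(trust_policy, indent=2))
```

Another customer of the third party who guesses the role ARN would still fail the condition, because the third party passes a different external ID on that customer's behalf.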

NEW QUESTION 8

A Security Engineer must design a solution that enables the Incident Response team to audit for changes to a user’s IAM permissions in the case of a security incident.

How can this be accomplished?

- A. Use AWS Config to review the IAM policy assigned to users before and after the incident.

- B. Run the GenerateCredentialReport via the AWS CLI, and copy the output to Amazon S3 daily for auditing purposes.

- C. Copy AWS CloudFormation templates to S3, and audit for changes from the template.

- D. Use Amazon EC2 Systems Manager to deploy images, and review AWS CloudTrail logs for changes.

Answer: A

NEW QUESTION 9

You need a cloud security device that can generate encryption keys based on FIPS 140-2 Level 3. Which of the following can be used for this purpose?

Please select:

- A. AWS KMS

- B. AWS Customer Keys

- C. AWS managed keys

- D. AWS Cloud HSM

Answer: AD

Explanation:

AWS Key Management Service (KMS) now uses FIPS 140-2 validated hardware security modules (HSM) and supports FIPS 140-2 validated endpoints, which provide independent assurances about the confidentiality and integrity of your keys.

All master keys in AWS KMS, regardless of their creation date or origin, are automatically protected using FIPS 140-2 validated HSMs.

FIPS 140-2 defines four levels of security, simply named "Level 1" to "Level 4". It does not specify in detail what level of security is required by any particular application.

• FIPS 140-2 Level 1, the lowest, imposes very limited requirements; loosely, all components must be "production-grade" and various egregious kinds of insecurity must be absent.

• FIPS 140-2 Level 2 adds requirements for physical tamper-evidence and role-based authentication.

• FIPS 140-2 Level 3 adds requirements for physical tamper-resistance (making it difficult for attackers to gain access to sensitive information contained in the module) and identity-based authentication, and for a physical or logical separation between the interfaces by which "critical security parameters" enter and leave the module, and its other interfaces.

• FIPS 140-2 Level 4 makes the physical security requirements more stringent and requires robustness against environmental attacks.

AWS CloudHSM provides you with a FIPS 140-2 Level 3 validated single-tenant HSM cluster in your Amazon Virtual Private Cloud (VPC) to store and use your keys. You have exclusive control over how your keys are used via an authentication mechanism independent from AWS. You interact with keys in your AWS CloudHSM cluster similar to the way you interact with your applications running in Amazon EC2.

AWS KMS allows you to create and control the encryption keys used by your applications and supported AWS services in multiple regions around the world from a single console. The service uses a FIPS 140-2 validated HSM to protect the security of your keys. Centralized management of all your keys in AWS KMS lets you enforce who can use your keys under which conditions, when they get rotated, and who can manage them.

AWS KMS HSMs are validated at level 2 overall and at level 3 in the following areas:

• Cryptographic Module Specification

• Roles, Services, and Authentication

• Physical Security

• Design Assurance

So I think that we can have 2 answers for this question. Both A & D.

• https://aws.amazon.com/blogs/security/aws-key-management-service-now-offers-fips-140-2-validated-cryptographic-m<

enabling-easier-adoption-of-the-service-for-regulated-workloads/

• https://aws.amazon.com/cloudhsm/faqs/

• https://aws.amazon.com/kms/faqs/

• https://en.wikipedia.org/wiki/RPS

The AWS Documentation mentions the following

AWS CloudHSM is a cloud-based hardware security module (HSM) that enables you to easily generate and use your own encryption keys on the AWS Cloud. With CloudHSM, you can manage your own encryption keys using FIPS 140-2 Level 3 validated HSMs. CloudHSM offers you the flexibility to integrate with your applications using industry-standard APIs, such as PKCS#11, Java Cryptography Extensions (JCE), and Microsoft CryptoNG (CNG) libraries. CloudHSM is also standards-compliant and enables you to export all of your keys to most other commercially-available HSMs. It is a fully-managed service that automates time-consuming administrative tasks for you, such as hardware provisioning, software patching, high-availability, and backups. CloudHSM also enables you to scale quickly by adding and removing HSM capacity on-demand, with no up-front costs.

All other options are invalid since AWS Cloud HSM is the prime service that offers FIPS 140-2 Level 3 compliance

For more information on CloudHSM, please visit the following URL: https://aws.amazon.com/cloudhsm/

The correct answers are: AWS KMS, AWS Cloud HSM

NEW QUESTION 10

A company has Windows Amazon EC2 instances in a VPC that are joined to on-premises Active Directory servers for domain services. The security team has enabled Amazon GuardDuty on the AWS account to alert on issues with the instances.

During a weekly audit of network traffic, the Security Engineer notices that one of the EC2 instances is attempting to communicate with a known command-and-control server but failing. This alert does not show up in GuardDuty.

Why did GuardDuty fail to alert to this behavior?

- A. GuardDuty did not have the appropriate alerts activated.

- B. GuardDuty does not see these DNS requests.

- C. GuardDuty only monitors active network traffic flow for command-and-control activity.

- D. GuardDuty does not report on command-and-control activity.

Answer: C

NEW QUESTION 11

A Security Analyst attempted to troubleshoot the monitoring of suspicious security group changes. The Analyst was told that there is an Amazon CloudWatch alarm in place for these AWS CloudTrail log events. The Analyst tested the monitoring setup by making a configuration change to the security group but did not receive any alerts.

Which of the following troubleshooting steps should the Analyst perform?

- A. Ensure that CloudTrail and S3 bucket access logging is enabled for the Analyst's AWS account.

- B. Verify that a metric filter was created and then mapped to an alarm. Check the alarm notification action.

- C. Check the CloudWatch dashboards to ensure that there is a metric configured with an appropriate dimension for security group changes.

- D. Verify that the Analyst's account is mapped to an IAM policy that includes permissions for cloudwatch:GetMetricStatistics and cloudwatch:ListMetrics.

Answer: B

NEW QUESTION 12

An application has a requirement to be resilient across not only Availability Zones within the application’s primary region but also be available within another region altogether.

Which of the following supports this requirement for AWS resources that are encrypted by AWS KMS?

- A. Copy the application’s AWS KMS CMK from the source region to the target region so that it can be used to decrypt the resource after it is copied to the target region.

- B. Configure AWS KMS to automatically synchronize the CMK between regions so that it can be used to decrypt the resource in the target region.

- C. Use AWS services that replicate data across regions, and re-wrap the data encryption key created in the source region by using the CMK in the target region so that the target region’s CMK can decrypt the database encryption key.

- D. Configure the target region’s AWS service to communicate with the source region’s AWS KMS so that it can decrypt the resource in the target region.

Answer: C

NEW QUESTION 13

You have a vendor that needs access to an AWS resource. You create an AWS user account. You want to restrict access to the resource using a policy for just that user over a brief period. Which of the following would be an ideal policy to use?

Please select:

- A. An AWS Managed Policy

- B. An Inline Policy

- C. A Bucket Policy

- D. A bucket ACL

Answer: B

Explanation:

The AWS Documentation gives an example on such a case

Inline policies are useful if you want to maintain a strict one-to-one relationship between a policy and the principal entity that it's applied to. For example, you want to be sure that the permissions in a policy are not inadvertently assigned to a principal entity other than the one they're intended for. When you use an inline policy, the permissions in the policy cannot be inadvertently attached to the wrong principal entity. In addition, when you use the AWS Management Console to delete that principal entity, the policies embedded in the principal entity are deleted as well. That's because they are part of the principal entity.
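
As a rough sketch of such a one-to-one policy, the request below embeds a time-limited policy directly in a single user. The user name, policy name, bucket and expiry timestamp are all hypothetical, the choice of an S3 bucket as the resource is an assumption (the question only says "an AWS resource"), and the sketch assumes the result would be passed to boto3's IAM `put_user_policy` call.

```python
import json


def build_inline_policy_request(user_name: str, bucket: str, expires_at: str) -> dict:
    """Build keyword arguments for attaching an inline policy to one IAM user."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket}/*",
                # Access stops matching once the current time passes expires_at.
                "Condition": {"DateLessThan": {"aws:CurrentTime": expires_at}},
            }
        ],
    }
    return {
        "UserName": user_name,
        "PolicyName": "vendor-temporary-access",  # hypothetical policy name
        "PolicyDocument": json.dumps(policy),
    }


# Hypothetical values, for illustration only.
inline_request = build_inline_policy_request(
    "vendor-user", "example-vendor-bucket", "2030-01-01T00:00:00Z")

# With boto3 installed and credentials configured, this could be applied as:
#   boto3.client("iam").put_user_policy(**inline_request)
```

Deleting the user later removes this policy with it, which matches the short-lived vendor access described in the question.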

Option A is invalid because AWS managed policies are suitable for a group of users, but for individual users, inline policies are better.

Options C and D are invalid because they are specifically meant for access to S3 buckets.

For more information on policies, please visit the following URL: https://docs.aws.amazon.com/IAM/latest/UserGuide/access managed-vs-inline

The correct answer is: An Inline Policy

NEW QUESTION 14

Your team is experimenting with the API gateway service for an application. There is a need to implement a custom module which can be used for authentication/authorization for calls made to the API gateway. How can this be achieved?

Please select:

- A. Use the request parameters for authorization

- B. Use a Lambda authorizer

- C. Use the gateway authorizer

- D. Use CORS on the API gateway

Answer: B

Explanation:

The AWS Documentation mentions the following

An Amazon API Gateway Lambda authorizer (formerly known as a custom authorizer) is a Lambda function that you provide to control access to your API methods. A Lambda authorizer uses bearer token authentication strategies, such as OAuth or SAML. It can also use information described by headers, paths, query strings, stage variables, or context variables request parameters.
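
A token-based Lambda authorizer can be sketched roughly as below. The shared token and the principal name are hypothetical stand-ins for real token validation (for example, verifying an OAuth token), so this is an illustration of the response shape rather than a production authorizer.

```python
# Hypothetical shared secret; a real authorizer would validate a signed token.
EXPECTED_TOKEN = "allow-me"


def generate_policy(principal_id: str, effect: str, resource: str) -> dict:
    """Build the response API Gateway expects from a Lambda authorizer."""
    return {
        "principalId": principal_id,
        "policyDocument": {
            "Version": "2012-10-17",
            "Statement": [
                {
                    "Action": "execute-api:Invoke",
                    "Effect": effect,
                    "Resource": resource,
                }
            ],
        },
    }


def lambda_handler(event: dict, context: object) -> dict:
    """Allow the call only when the bearer token matches the expected value."""
    token = event.get("authorizationToken", "")
    effect = "Allow" if token == EXPECTED_TOKEN else "Deny"
    return generate_policy("example-user", effect, event["methodArn"])


# Hypothetical method ARN, for illustration only.
sample_event = {
    "authorizationToken": "allow-me",
    "methodArn": "arn:aws:execute-api:us-east-1:111122223333:abc123/prod/GET/items",
}
allowed = lambda_handler(sample_event, None)
denied = lambda_handler({**sample_event, "authorizationToken": "wrong"}, None)
```

API Gateway evaluates the returned policy document to decide whether the original request may invoke the method.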

Options A, C and D are invalid because these cannot be used if you need custom authentication/authorization for calls made to the API gateway.

For more information on using the API Gateway Lambda authorizer, please visit the URL: https://docs.aws.amazon.com/apigateway/latest/developerguide/apigateway-use-lambda-authorizer.html

The correct answer is: Use a Lambda authorizer

NEW QUESTION 15

Your company uses AWS KMS for management of its customer keys. From time to time, there is a requirement to delete existing keys as part of housekeeping activities. What can be done during the deletion process to verify that the key is no longer being used?

Please select:

- A. Use CloudTrail to see if any KMS API request has been issued against existing keys

- B. Use Key policies to see the access level for the keys

- C. Rotate the keys once before deletion to see if other services are using the keys

- D. Change the IAM policy for the keys to see if other services are using the keys

Answer: A

Explanation:

The AWS Documentation mentions the following

You can use a combination of AWS CloudTrail, Amazon CloudWatch Logs, and Amazon Simple Notification Service (Amazon SNS) to create an alarm that notifies you of AWS KMS API requests that attempt to use a customer master key (CMK) that is pending deletion. If you receive a notification from such an alarm, you might want to cancel deletion of the CMK to give yourself more time to determine whether you want to delete it.
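
The check behind such an alarm can be illustrated offline with a small filter over CloudTrail-style records. The sample records are hypothetical, and the sketch assumes that a failed request against a key scheduled for deletion carries an error message ending in "is pending deletion."; verify that against your own logs before relying on it.

```python
def uses_key_pending_deletion(record: dict) -> bool:
    """Return True for KMS events that failed because the key is pending deletion."""
    return (
        record.get("eventSource") == "kms.amazonaws.com"
        and record.get("errorMessage", "").endswith("is pending deletion.")
    )


# Hypothetical CloudTrail-style records, for illustration only.
records = [
    {
        "eventSource": "kms.amazonaws.com",
        "eventName": "Decrypt",
        "errorMessage": "arn:aws:kms:us-east-1:111122223333:key/EXAMPLE-KEY-ID is pending deletion.",
    },
    {"eventSource": "kms.amazonaws.com", "eventName": "Encrypt"},
    {"eventSource": "s3.amazonaws.com", "eventName": "GetObject"},
]

flagged = [r["eventName"] for r in records if uses_key_pending_deletion(r)]
print(flagged)
```

Any flagged event means something still depends on the key, which is the signal to cancel the scheduled deletion.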

Options B and D are incorrect because neither key policies nor IAM policies can be used to check if the keys are being used.

Option C is incorrect since rotation will not help you check if the keys are being used.

For more information on deleting keys, please refer to the URL below:

https://docs.aws.amazon.com/kms/latest/developerguide/deleting-keys-creating-cloudwatch-alarm.html

The correct answer is: Use CloudTrail to see if any KMS API request has been issued against existing keys

NEW QUESTION 16

You have been given a new brief from your supervisor for a client who needs a web application set up on AWS. The most important requirement is that MySQL must be used as the database, and this database must not be hosted in the public cloud, but rather at the client's data center due to security risks. Which of the following solutions would be the best to assure that the client's requirements are met? Choose the correct answer from the options below.

Please select:

- A. Build the application server on a public subnet and the database at the client's data center. Connect them with a VPN connection which uses IPsec.

- B. Use the public subnet for the application server and use RDS with a storage gateway to access and synchronize the data securely from the local data center.

- C. Build the application server on a public subnet and the database on a private subnet with a NAT instance between them.

- D. Build the application server on a public subnet and build the database in a private subnet with a secure ssh connection to the private subnet from the client's data center.

Answer: A

Explanation:

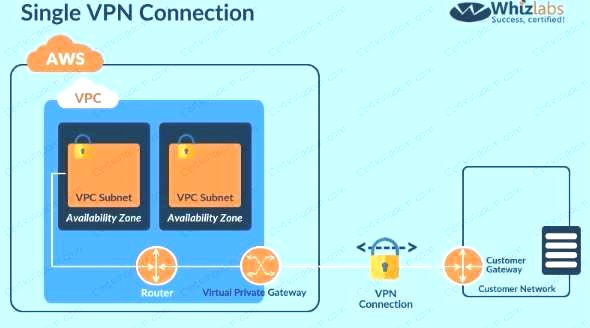

Since the database should not be hosted in the cloud, all other options are invalid. The best option is to create a VPN connection for securing traffic, as shown in the image above.

Option B is invalid because this is an incorrect use of the Storage Gateway.

Option C is invalid since this is an incorrect use of the NAT instance.

Option D is invalid since this is an incorrect configuration.

For more information on VPN connections, please visit the URL below:

http://docs.aws.amazon.com/AmazonVPC/latest/UserGuide/VPC_VPN.html

The correct answer is: Build the application server on a public subnet and the database at the client's data center. Connect them with a VPN connection which uses IPsec.

NEW QUESTION 17

You are building a system to distribute confidential training videos to employees. Using CloudFront, what method could be used to serve content that is stored in S3, but not publicly accessible from S3 directly?

Please select:

- A. Create an Origin Access Identity (OAI) for CloudFront and grant access to the objects in your S3 bucket to that OAI.

- B. Add the CloudFront account security group "amazon-cf/amazon-cf-sg" to the appropriate S3 bucket policy.

- C. Create an Identity and Access Management (IAM) User for CloudFront and grant access to the objects in your S3 bucket to that IAM User.

- D. Create a S3 bucket policy that lists the CloudFront distribution ID as the Principal and the target bucket as the Amazon Resource Name (ARN).

Answer: A

Explanation:

You can optionally secure the content in your Amazon S3 bucket so users can access it through CloudFront but cannot access it directly by using Amazon S3 URLs. This prevents anyone from bypassing CloudFront and using the Amazon S3 URL to get content that you want to restrict access to. This step isn't required to use signed URLs, but we recommend it.

To require that users access your content through CloudFront URLs, you perform the following tasks:

• Create a special CloudFront user called an origin access identity.

• Give the origin access identity permission to read the objects in your bucket.

• Remove permission for anyone else to use Amazon S3 URLs to read the objects.
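
The step of giving the origin access identity read permission can be sketched as a bucket policy like the one below. The bucket name and OAI ID are hypothetical placeholders.

```python
import json


def build_oai_bucket_policy(bucket: str, oai_id: str) -> dict:
    """Bucket policy that lets one CloudFront origin access identity read objects."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowCloudFrontOAIRead",
                "Effect": "Allow",
                "Principal": {
                    "AWS": f"arn:aws:iam::cloudfront:user/CloudFront Origin Access Identity {oai_id}"
                },
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket}/*",
            }
        ],
    }


# Hypothetical values, for illustration only.
oai_policy = build_oai_bucket_policy("example-training-videos", "E2EXAMPLEOAIID")
print(json.dumps(oai_policy, indent=2))
```

With no other statement granting public read access, objects are reachable through CloudFront but not through direct Amazon S3 URLs.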

Options B, C and D are all invalid because the right way is to create an Origin Access Identity (OAI) for CloudFront and grant access accordingly.

For more information on serving private content via CloudFront, please visit the following URL: https://docs.aws.amazon.com/AmazonCloudFront/latest/DeveloperGuide/PrivateContent.html

The correct answer is: Create an Origin Access Identity (OAI) for CloudFront and grant access to the objects in your S3 bucket to that OAI.

NEW QUESTION 18

One of the EC2 instances in your company has been compromised. What steps would you take to ensure that you could apply digital forensics on the instance? Select 2 answers from the options given below.

Please select:

- A. Remove the role applied to the EC2 Instance

- B. Create a separate forensic instance

- C. Ensure that the security groups only allow communication to this forensic instance

- D. Terminate the instance

Answer: BC

Explanation:

Option A is invalid because removing the role will not help completely in such a situation

Option D is invalid because terminating the instance means that you cannot conduct forensic analysis on the instance

One way to isolate an affected EC2 instance for investigation is to place it in a Security Group that only the forensic investigators can access. Close all ports except to receive inbound SSH or RDP traffic from one single IP address from which the investigators can safely examine the instance.
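
The isolation rule described above could be sketched as a single ingress permission. The security group ID and investigator address are hypothetical, and the sketch assumes the result would be passed to boto3's EC2 `authorize_security_group_ingress` call on an otherwise empty security group.

```python
import ipaddress


def build_forensic_ingress(group_id: str, investigator_ip: str, port: int = 22) -> dict:
    """Allow one TCP port (SSH by default) from exactly one IPv4 address."""
    # IPv4Address rejects ranges such as "203.0.113.0/24" with a ValueError.
    host = ipaddress.IPv4Address(investigator_ip)
    return {
        "GroupId": group_id,
        "IpPermissions": [
            {
                "IpProtocol": "tcp",
                "FromPort": port,
                "ToPort": port,
                # /32 restricts the rule to the single investigator address.
                "IpRanges": [
                    {"CidrIp": f"{host}/32", "Description": "forensic workstation"}
                ],
            }
        ],
    }


# Hypothetical values, for illustration only.
ingress = build_forensic_ingress("sg-0123456789abcdef0", "203.0.113.10")
```

For a Windows instance, passing `port=3389` would open RDP instead of SSH.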

For more information on security scenarios for your EC2 Instance, please refer to the URL below: https://d1.awsstatic.com/Marketplace/scenarios/security/SEC_11_TSB_Final.pdf

The correct answers are: Create a separate forensic instance. Ensure that the security groups only allow communication to this forensic instance

NEW QUESTION 19

A company hosts data in S3. There is now a mandate that going forward all data in the S3 bucket must be encrypted at rest. How can this be achieved?

Please select:

- A. Use AWS Access keys to encrypt the data

- B. Use SSL certificates to encrypt the data

- C. Enable server side encryption on the S3 bucket

- D. Enable MFA on the S3 bucket

Answer: C

Explanation:

The AWS Documentation mentions the following

Server-side encryption is about data encryption at rest—that is, Amazon S3 encrypts your data at the object level as it writes it to disks in its data centers and decrypts it for you when you access it. As long as you authenticate your request and you have access permissions, there is no difference in the way you access encrypted or unencrypted objects.
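
Default server-side encryption for a bucket could be sketched as the configuration below. It assumes SSE-S3 (AES-256) is acceptable and that the dictionary would be passed as the `ServerSideEncryptionConfiguration` argument of boto3's `put_bucket_encryption`; the bucket name in the comment is hypothetical.

```python
# Default encryption configuration: encrypt every new object with SSE-S3.
encryption_configuration = {
    "Rules": [
        {
            "ApplyServerSideEncryptionByDefault": {
                "SSEAlgorithm": "AES256"  # use "aws:kms" for KMS-managed keys
            }
        }
    ]
}

# With boto3 installed and credentials configured, this could be applied as:
#   boto3.client("s3").put_bucket_encryption(
#       Bucket="example-data-bucket",
#       ServerSideEncryptionConfiguration=encryption_configuration)
```

Default encryption applies to objects written after it is enabled, which fits the "going forward" wording of the question.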

Options A and B are invalid because neither access keys nor SSL certificates can be used to encrypt data at rest.

Option D is invalid because MFA is just used as an extra level of security for S3 buckets.

For more information on S3 server-side encryption, please refer to the link below: https://docs.aws.amazon.com/AmazonS3/latest/dev/serv-side-encryption.html

NEW QUESTION 20

A security team is responsible for reviewing AWS API call activity in the cloud environment for security violations. These events must be recorded and retained in a centralized location for both current and future AWS regions.

What is the SIMPLEST way to meet these requirements?

- A. Enable AWS Trusted Advisor security checks in the AWS Console, and report all security incidents for all regions.

- B. Enable AWS CloudTrail by creating individual trails for each region, and specify a single Amazon S3 bucket to receive log files for later analysis.

- C. Enable AWS CloudTrail by creating a new trail and applying the trail to all regions. Specify a single Amazon S3 bucket as the storage location.

- D. Enable Amazon CloudWatch logging for all AWS services across all regions, and aggregate them to a single Amazon S3 bucket for later analysis.

Answer: C

NEW QUESTION 21

A company wishes to enable Single Sign On (SSO) so its employees can login to the management console using their corporate directory identity. Which steps below are required as part of the process? Select 2 answers from the options given below.

Please select:

- A. Create a Direct Connect connection between on-premise network and AWS. Use an AD connector for connecting AWS with on-premise active directory.

- B. Create IAM policies that can be mapped to group memberships in the corporate directory.

- C. Create a Lambda function to assign IAM roles to the temporary security tokens provided to the users.

- D. Create IAM users that can be mapped to the employees' corporate identities

- E. Create an IAM role that establishes a trust relationship between IAM and the corporate directory identity provider (IdP)

Answer: AE

Explanation:

Create a Direct Connect connection so that corporate users can access the AWS account

Option B is incorrect because IAM policies are not directly mapped to group memberships in the corporate directory. It is IAM roles which are mapped.

Option C is incorrect because a Lambda function is not the right way to assign roles.

Option D is incorrect because IAM users are not directly mapped to employees' corporate identities.

For more information on Direct Connect, please refer to the URL below:

https://aws.amazon.com/directconnect/

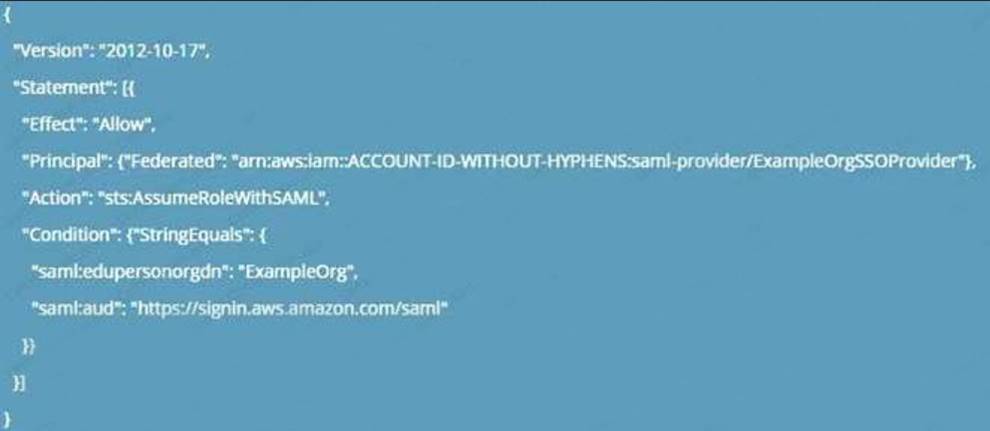

From the AWS Documentation, for federated access, you also need to ensure the right policy permissions are in place

Configure permissions in AWS for your federated users

The next step is to create an IAM role that establishes a trust relationship between IAM and your organization's IdP that identifies your IdP as a principal (trusted entity) for purposes of federation. The role also defines what users authenticated by your organization's IdP are allowed to do in AWS. You can use the IAM console to create this role. When you create the trust policy that indicates who can assume the role, you specify the SAML provider that you created earlier in IAM along with one or more SAML attributes that a user must match to be allowed to assume the role. For example, you can specify that only users whose SAML eduPersonOrgDN value is ExampleOrg are allowed to sign in. The role wizard automatically adds a condition to test the saml:aud attribute to make sure that the role is assumed only for sign-in to the AWS Management Console. The trust policy for the role might look like this:
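
The original screenshot of the trust policy is not available, so the following is a reconstruction sketch based on the description above rather than the exact policy from the AWS Documentation. The account ID and SAML provider name are hypothetical placeholders.

```python
import json

# Trust policy for SAML federation: only users from the named IdP whose
# eduPersonOrgDN attribute is "ExampleOrg" may assume the role, and only
# for sign-in to the AWS Management Console.
saml_trust_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Principal": {
                # Hypothetical account ID and provider name.
                "Federated": "arn:aws:iam::111122223333:saml-provider/ExampleOrgSSOProvider"
            },
            "Action": "sts:AssumeRoleWithSAML",
            "Condition": {
                "StringEquals": {
                    "saml:edupersonorgdn": "ExampleOrg",
                    "saml:aud": "https://signin.aws.amazon.com/saml",
                }
            },
        }
    ],
}

print(json.dumps(saml_trust_policy, indent=2))
```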

For more information on SAML federation, please refer to the URL below: https://docs.aws.amazon.com/IAM/latest/UserGuide/id_roles_providers_enabli

Note:

What directories can I use with AWS SSO?

You can connect AWS SSO to Microsoft Active Directory, running either on-premises or in the AWS Cloud. AWS SSO supports AWS Directory Service for Microsoft Active Directory, also known as AWS Managed Microsoft AD, and AD Connector. AWS SSO does not support Simple AD. See AWS Directory Service Getting Started to learn more.

To connect to your on-premises directory with AD Connector, you need the following:

VPC

Set up a VPC with the following:

• At least two subnets. Each of the subnets must be in a different Availability Zone.

• The VPC must be connected to your on-premises network through a virtual private network (VPN) connection or AWS Direct Connect.

• The VPC must have default hardware tenancy.

• https://aws.amazon.com/single-sign-on/

• https://aws.amazon.com/single-sign-on/faqs/

• https://aws.amazon.com/bloj using-corporate-credentials/

• https://docs.aws.amazon.com/directoryservice/latest/admin-

The correct answers are: Create a Direct Connect connection between on-premise network and AWS. Use an AD connector connecting AWS with on-premise active directory. Create an IAM role that establishes a trust relationship between IAM and corporate directory identity provider (IdP)


NEW QUESTION 22

You are hosting a web site via website hosting on an S3 bucket - http://demo.s3-website-us-east-1.amazonaws.com. You have some web pages that use JavaScript to access resources in another bucket, which also has web site hosting enabled. But when users access the web pages, they get a blocked JavaScript error. How can you rectify this?

Please select:

- A. Enable CORS for the bucket

- B. Enable versioning for the bucket

- C. Enable MFA for the bucket

- D. Enable CRR for the bucket

Answer: A

Explanation:


Such a scenario is also given in the AWS documentation, under Cross-Origin Resource Sharing: Use-case Scenarios. The following are example scenarios for using CORS:

• Scenario 1: Suppose that you are hosting a website in an Amazon S3 bucket named website as described in Hosting a Static Website on Amazon S3. Your users load the website endpoint http://website.s3-website-us-east-1.amazonaws.com. Now you want to use JavaScript on the webpages that are stored in this bucket to be able to make authenticated GET and PUT requests against the same bucket by using the Amazon S3 API endpoint for the bucket website.s3.amazonaws.com. A browser would normally block JavaScript from allowing those requests, but with CORS you can configure your bucket to explicitly enable cross-origin requests from website.s3-website-us-east-1.amazonaws.com.

• Scenario 2: Suppose that you want to host a web font from your S3 bucket. Again, browsers require a CORS check (also called a preflight check) for loading web fonts. You would configure the bucket that is hosting the web font to allow any origin to make these requests.
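To make Scenario 1 more concrete, here is a minimal Python sketch of a CORS configuration that allows GET and PUT requests from the website endpoint. The helper names `build_cors_configuration` and `apply_cors`, the `MaxAgeSeconds` value, and the wildcard header rule are illustrative assumptions; the dictionary follows the shape that boto3's `put_bucket_cors` call accepts.

```python
def build_cors_configuration(allowed_origin: str) -> dict:
    """Build CORS rules permitting GET and PUT from a single origin."""
    return {
        "CORSRules": [
            {
                "AllowedOrigins": [allowed_origin],
                "AllowedMethods": ["GET", "PUT"],
                "AllowedHeaders": ["*"],   # assumption: allow any request header
                "MaxAgeSeconds": 3000,     # assumption: preflight cache lifetime
            }
        ]
    }


def apply_cors(bucket_name: str, cors_configuration: dict) -> None:
    """Apply the rules to a bucket (needs boto3 and valid AWS credentials)."""
    import boto3  # imported lazily so the sketch runs without boto3 installed

    boto3.client("s3").put_bucket_cors(
        Bucket=bucket_name, CORSConfiguration=cors_configuration
    )


cors = build_cors_configuration("http://website.s3-website-us-east-1.amazonaws.com")
print(cors)
```

Calling `apply_cors` on the bucket that holds the requested resources would attach the rules. For the web font case in Scenario 2, the origin would be `"*"` instead of a single endpoint.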

Option B is invalid because versioning only creates multiple versions of an object, which helps protect against accidental deletion of objects.

Option C is invalid because MFA is used as an extra measure of caution for deletion of objects.

Option D is invalid because CRR is used for cross-region replication of objects.

For more information on Cross-Origin Resource Sharing, please visit the following URL:

• https://docs.aws.amazon.com/AmazonS3/latest/dev/cors.html

The correct answer is: Enable CORS for the bucket


NEW QUESTION 23

An application running on EC2 instances in a VPC must access sensitive data in the data center. The access must be encrypted in transit and have consistent low latency. Which hybrid architecture will meet these requirements?

Please select:

- A. Expose the data with a public HTTPS endpoint.

- B. A VPN between the VPC and the data center over a Direct Connect connection

- C. A VPN between the VPC and the data center.

- D. A Direct Connect connection between the VPC and data center

Answer: B

Explanation:

Since a consistent low-latency connection is required, you should use Direct Connect. For encryption, you can make use of a VPN.

Option A is invalid because exposing an HTTPS endpoint will not help all traffic to flow between a VPC and the data center.

Option C is invalid because low latency is a key requirement, and a VPN on its own runs over the public internet, which cannot provide consistent latency.

Option D is invalid because Direct Connect on its own will not suffice, since the encryption requirement still has to be met.

For more information on the connection options, please see the link below: https://aws.amazon.com/answers/networking/aws-multiple-vpc-vpn-connection-sharint

The correct answer is: A VPN between the VPC and the data center over a Direct Connect connection

NEW QUESTION 24

Your application currently uses AWS Cognito for authenticating users. Your application consists of different types of users. Some users are only allowed read access to the application and others are given contributor access. How would you manage the access effectively?

Please select:

- A. Create different cognito endpoints, one for the readers and the other for the contributors.

- B. Create different cognito groups, one for the readers and the other for the contributors.

- C. You need to manage this within the application itself

- D. This needs to be managed via Web security tokens

Answer: B

Explanation:

The AWS Documentation mentions the following

You can use groups to create a collection of users in a user pool, which is often done to set the permissions for those users. For example, you can create separate groups for users who are readers, contributors, and editors of your website and app.
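As a hedged illustration of how an application might act on group membership, the sketch below checks the `cognito:groups` claim that Cognito user pool tokens carry for users who belong to groups. The group names, the `GROUP_PERMISSIONS` mapping, and the `is_allowed` helper are hypothetical, and the sketch assumes the token has already been verified and decoded into a dictionary of claims.

```python
# Hypothetical mapping from Cognito group name to the actions it permits.
GROUP_PERMISSIONS = {
    "readers": {"read"},
    "contributors": {"read", "write"},
}


def is_allowed(token_claims: dict, action: str) -> bool:
    """Return True if any of the user's groups permits the requested action."""
    # Users who belong to no group have no "cognito:groups" claim at all.
    groups = token_claims.get("cognito:groups", [])
    return any(action in GROUP_PERMISSIONS.get(group, set()) for group in groups)


# Claims as they might look after the token has been verified and decoded.
reader_claims = {"sub": "user-1", "cognito:groups": ["readers"]}
contributor_claims = {"sub": "user-2", "cognito:groups": ["contributors"]}

print(is_allowed(reader_claims, "write"))       # False
print(is_allowed(contributor_claims, "write"))  # True
```

Keeping the mapping keyed by group name means that moving a user between the readers and contributors groups changes their access without any change to the application code.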

Option A is incorrect since you need to create Cognito groups and not endpoints.

Options C and D are incorrect since these would add overhead when you can use AWS Cognito.

For more information on AWS Cognito user groups, please refer to the link below: https://docs.aws.amazon.com/cognito/latest/developerguide/cognito-user-pools-user-groups.html

The correct answer is: Create different cognito groups, one for the readers and the other for the contributors.

NEW QUESTION 25

A company stores data on an Amazon EBS volume attached to an Amazon EC2 instance. The data is asynchronously replicated to an Amazon S3 bucket. Both the EBS volume and the S3 bucket are encrypted with the same AWS KMS Customer Master Key (CMK). A former employee scheduled a deletion of that CMK before leaving the company.

The company’s Developer Operations department learns about this only after the CMK has been deleted. Which steps must be taken to address this situation?

- A. Copy the data directly from the EBS encrypted volume before the volume is detached from the EC2 instance.

- B. Recover the data from the EBS encrypted volume using an earlier version of the KMS backing key.

- C. Make a request to AWS Support to recover the S3 encrypted data.

- D. Make a request to AWS Support to restore the deleted CMK, and use it to recover the data.

Answer: A

NEW QUESTION 26

While analyzing a company's security solution, a Security Engineer wants to secure the AWS account root user.

What should the Security Engineer do to provide the highest level of security for the account?

- A. Create a new IAM user that has administrator permissions in the AWS account. Delete the password for the AWS account root user.

- B. Create a new IAM user that has administrator permissions in the AWS account. Modify the permissions for the existing IAM users.

- C. Replace the access key for the AWS account root user. Delete the password for the AWS account root user.

- D. Create a new IAM user that has administrator permissions in the AWS account. Enable multi-factor authentication for the AWS account root user.

Answer: D

Explanation:

If you continue to use the root user credentials, we recommend that you follow the security best practice to enable multi-factor authentication (MFA) for your account. Because your root user can perform sensitive operations in your account, adding an additional layer of authentication helps you to better secure your account. Multiple types of MFA are available.

NEW QUESTION 27

A Security Engineer is trying to determine whether the encryption keys used in an AWS service are in compliance with certain regulatory standards.

Which of the following actions should the Engineer perform to get further guidance?

- A. Read the AWS Customer Agreement.

- B. Use AWS Artifact to access AWS compliance reports.

- C. Post the question on the AWS Discussion Forums.

- D. Run AWS Config and evaluate the configuration outputs.

Answer: B

NEW QUESTION 28

A Developer’s laptop was stolen. The laptop was not encrypted, and it contained the SSH key used to access multiple Amazon EC2 instances. A Security Engineer has verified that the key has not been used, and has blocked port 22 to all EC2 instances while developing a response plan.

How can the Security Engineer further protect currently running instances?

- A. Delete the key-pair key from the EC2 console, then create a new key pair.

- B. Use the modify-instance-attribute API to change the key on any EC2 instance that is using the key.

- C. Use the EC2 RunCommand to modify the authorized_keys file on any EC2 instance that is using the key.

- D. Update the key pair in any AMI used to launch the EC2 instances, then restart the EC2 instances.

Answer: C
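Option C amounts to removing the stolen key's public half from the authorized_keys file on each affected instance, which Run Command can do without SSH while port 22 stays blocked. The Python sketch below shows only the file-editing step. The helper name `remove_compromised_key` and the sample key blobs are made up for illustration, and how the script is delivered (for example through a shell-script Run Command document) is left as an assumption.

```python
def remove_compromised_key(authorized_keys_text: str, compromised_key_blob: str) -> str:
    """Drop every authorized_keys entry whose key blob matches the stolen key."""
    kept_lines = []
    for line in authorized_keys_text.splitlines():
        # An entry looks like "[options] key-type base64-blob [comment]".
        if compromised_key_blob in line.split():
            continue  # skip the entry for the compromised key
        kept_lines.append(line)
    return "\n".join(kept_lines) + ("\n" if kept_lines else "")


# Made-up file content: one stolen key and one key that should stay.
original = (
    "ssh-rsa AAAAB3StolenKeyBlob dev-laptop\n"
    "ssh-ed25519 AAAAC3NewKeyBlob ops-bastion\n"
)
cleaned = remove_compromised_key(original, "AAAAB3StolenKeyBlob")
print(cleaned, end="")
```

Matching on the key blob rather than on the trailing comment avoids relying on a label that anyone could have edited.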

NEW QUESTION 29

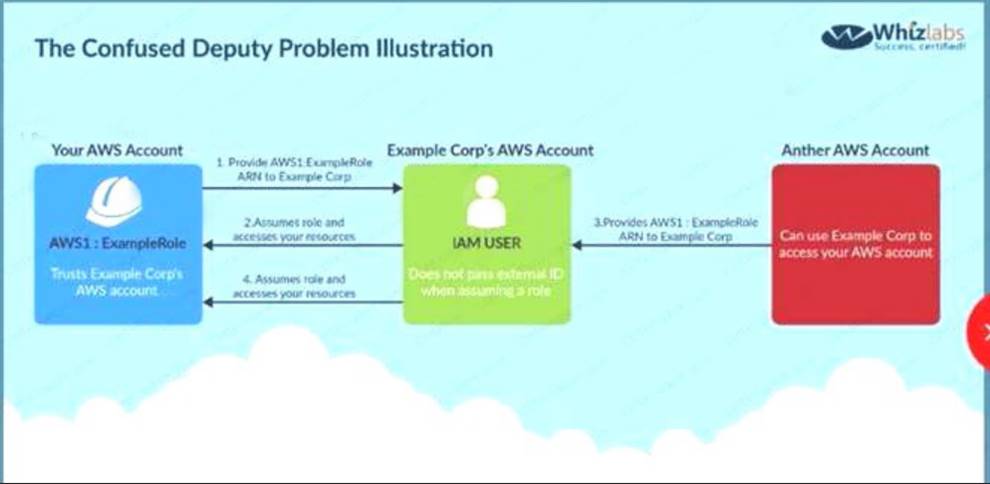

You currently have an S3 bucket hosted in an AWS Account. It holds information that needs to be accessed by a partner account. Which is the MOST secure way to allow the partner account to access the S3 bucket in your account? Select 3 options.

Please select:

- A. Ensure an IAM role is created which can be assumed by the partner account.

- B. Ensure an IAM user is created which can be assumed by the partner account.

- C. Ensure the partner uses an external id when making the request

- D. Provide the ARN for the role to the partner account

- E. Provide the Account Id to the partner account

- F. Provide access keys for your account to the partner account

Answer: ACD

Explanation:

Option B is invalid because roles are assumed, not IAM users.

Option E is invalid because you should not give the account ID to the partner.

Option F is invalid because you should not give the access keys to the partner.

The below diagram from the AWS documentation showcases an example of this, wherein an IAM role and external ID are used to access an AWS account's resources.

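The role and external ID arrangement described above can be sketched as a trust policy on the role in your account. In the Python example below, the account IDs, the external ID string, the role name, and the helper `build_partner_trust_policy` are all placeholder assumptions for illustration.

```python
import json

# Placeholder assumptions: none of these values come from the question.
OWN_ACCOUNT_ID = "123456789012"
PARTNER_ACCOUNT_ID = "111122223333"
EXTERNAL_ID = "example-external-id"
ROLE_NAME = "PartnerS3AccessRole"


def build_partner_trust_policy(partner_account_id: str, external_id: str) -> dict:
    """Trust policy letting the partner account assume the role with an external ID."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": f"arn:aws:iam::{partner_account_id}:root"},
                "Action": "sts:AssumeRole",
                # The request must carry the agreed external ID to succeed.
                "Condition": {"StringEquals": {"sts:ExternalId": external_id}},
            }
        ],
    }


partner_trust_policy = build_partner_trust_policy(PARTNER_ACCOUNT_ID, EXTERNAL_ID)
# This is the role ARN that would be handed to the partner (option D).
role_arn = f"arn:aws:iam::{OWN_ACCOUNT_ID}:role/{ROLE_NAME}"

print(json.dumps(partner_trust_policy, indent=2))
print(role_arn)
```

The partner would then call AssumeRole with this role ARN and the external ID, so no long-lived access keys from your account ever need to be shared.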

For more information on creating roles for external IDs, please visit the following URL:

The correct answers are: Ensure an IAM role is created which can be assumed by the partner account. Ensure the partner uses an external id when making the request. Provide the ARN for the role to the partner account.


NEW QUESTION 30

......
