Refined Amazon-Web-Services DOP-C01 Question Online


NEW QUESTION 1

You are designing a system that needs, at a minimum, 8 m4.large instances operating to service traffic. When designing the system for high availability in the us-east-1 region, which has 6 Availability Zones, your company needs to be able to handle the loss of a full Availability Zone. How should you distribute the servers to save as much cost as possible, assuming all of the EC2 nodes are properly linked to an ELB? Your VPC account can use us-east-1's AZs a through f, inclusive.

- A. 3 servers in each of AZs a through d, inclusive.

- B. 8 servers in each of AZs a and b.

- C. 2 servers in each of AZs a through e, inclusive.

- D. 4 servers in each of AZs a through c, inclusive.

Answer: C

Explanation:

The best way is to distribute the instances across multiple AZs to get the best availability and avoid a disaster scenario. With this layout you always have at least 8 servers even if one AZ goes down (5 AZs × 2 servers = 10, and losing one AZ leaves 8). Options A, B, and D would also survive the loss of an AZ, but they need 12, 16, and 12 servers respectively, so Option C is the most cost-effective. For more information on High Availability and Fault tolerance, please refer to the below link:

https://media.amazonwebservices.com/architecturecenter/AWS_ac_ra_ftha_04.pdf
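The cost comparison above is simple arithmetic and can be checked mechanically. This sketch assumes each option spreads its servers evenly across the listed AZs, so the worst case is losing any one AZ and its servers.

```python
# Options from the question: (servers per AZ, number of AZs used)
OPTIONS = {
    "A": (3, 4),  # AZs a-d
    "B": (8, 2),  # AZs a-b
    "C": (2, 5),  # AZs a-e
    "D": (4, 3),  # AZs a-c
}
REQUIRED = 8  # minimum m4.large instances that must stay in service


def evaluate(per_az: int, az_count: int) -> tuple[int, int]:
    """Return (total servers, servers left after losing one full AZ)."""
    total = per_az * az_count
    return total, total - per_az


def cheapest_valid(options: dict[str, tuple[int, int]], required: int) -> str:
    """Pick the option with the fewest servers that still survives an AZ loss."""
    valid = {
        name: evaluate(*spec)[0]
        for name, spec in options.items()
        if evaluate(*spec)[1] >= required
    }
    return min(valid, key=valid.get)


for name, spec in OPTIONS.items():
    total, remaining = evaluate(*spec)
    print(f"{name}: {total} servers, {remaining} left after an AZ failure")
print("Cheapest valid option:", cheapest_valid(OPTIONS, REQUIRED))
```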

NEW QUESTION 2

You are working as an AWS DevOps administrator for your company. You are in charge of building the infrastructure for the company's development teams using CloudFormation. The template will include building the VPC and networking components, installing a LAMP stack, and securing the created resources. As per AWS best practices, what is the best way to design this template?

- A. Create a single CloudFormation template to create all the resources, since it would be easier from the maintenance perspective.

- B. Create multiple CloudFormation templates based on the number of VPCs in the environment.

- C. Create multiple CloudFormation templates based on the number of development groups in the environment.

- D. Create multiple CloudFormation templates for each set of logical resources, one for networking, the other for LAMP stack creation.

Answer: D

Explanation:

Splitting the infrastructure into multiple CloudFormation templates and composing them is an example of using nested stacks. The advantage of using nested stacks is given below, as per the AWS documentation:

As your infrastructure grows, common patterns can emerge in which you declare the same components in each of your templates. You can separate out these common components and create dedicated templates for them. That way, you can mix and match different templates but use nested stacks to create a single, unified stack. Nested stacks are stacks that create other stacks. To create nested stacks, use the AWS::CloudFormation::Stack resource in your template to reference other templates.

For more information on CloudFormation best practices, please refer to the below link: http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/best-practices.html
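A parent template for the networking/LAMP split might look like the following. This sketch assumes the two child templates are already uploaded to S3 at hypothetical URLs and that the network template declares an output named `VpcId` (also an assumption). `Fn::GetAtt` with `Outputs.<name>` is how a parent template reads an output of a nested stack.

```python
import json

# Hypothetical S3 URLs of the child templates; substitute your own.
NETWORK_URL = "https://s3.amazonaws.com/example-bucket/network.yaml"
LAMP_URL = "https://s3.amazonaws.com/example-bucket/lamp.yaml"


def build_parent_template(network_url: str, lamp_url: str) -> dict:
    """Parent template that composes two nested stacks into one unified stack."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Resources": {
            # Networking resources (VPC, subnets, routes) live in their own template.
            "NetworkStack": {
                "Type": "AWS::CloudFormation::Stack",
                "Properties": {"TemplateURL": network_url},
            },
            # The LAMP template receives the VPC ID from the network stack's
            # outputs (the output name "VpcId" is an assumption).
            "LampStack": {
                "Type": "AWS::CloudFormation::Stack",
                "Properties": {
                    "TemplateURL": lamp_url,
                    "Parameters": {
                        "VpcId": {"Fn::GetAtt": ["NetworkStack", "Outputs.VpcId"]}
                    },
                },
            },
        },
    }


print(json.dumps(build_parent_template(NETWORK_URL, LAMP_URL), indent=2))
```

Because `LampStack` references `NetworkStack` through `Fn::GetAtt`, CloudFormation creates the network stack first without an explicit `DependsOn`.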

NEW QUESTION 3

Your application requires long-term storage for backups and other data that you need to keep readily available but with lower cost. Which S3 storage option should you use?

- A. Amazon S3 Standard-Infrequent Access

- B. S3 Standard

- C. Glacier

- D. Reduced Redundancy Storage

Answer: A

Explanation:

The AWS Documentation mentions the following

Amazon S3 Standard - Infrequent Access (Standard - IA) is an Amazon S3 storage class for data that is accessed less frequently, but requires rapid access when needed. Standard - IA offers the high durability, throughput, and low latency of Amazon S3 Standard, with a low per GB storage price and per GB retrieval fee.

For more information on S3 Storage classes, please visit the below URL:

• https://aws.amazon.com/s3/storage-classes/
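The storage class is chosen per object at upload time. A minimal sketch that builds the keyword arguments for boto3's S3 client `put_object` with `StorageClass="STANDARD_IA"`; the bucket and key names are hypothetical, and the actual upload is left as a comment so the code runs without AWS credentials.

```python
def standard_ia_put_args(bucket: str, key: str, body: bytes) -> dict:
    """Keyword arguments for boto3's S3 put_object, storing the object in Standard-IA."""
    return {
        "Bucket": bucket,
        "Key": key,
        "Body": body,
        "StorageClass": "STANDARD_IA",  # infrequent access, still millisecond retrieval
    }


args = standard_ia_put_args(
    "example-backup-bucket", "backups/db-2017-01-01.gz", b"example backup data"
)
# With credentials configured, the upload would be:
#   import boto3
#   boto3.client("s3").put_object(**args)
```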

NEW QUESTION 4

You recently encountered a major bug in your web application during a deployment cycle. During this failed deployment, it took the team four hours to roll back to a previously working state, which left customers with a poor user experience. During the post-mortem, your team discussed the need to provide a quicker, more robust way to roll back failed deployments. You currently run your web application on Amazon EC2 and use Elastic Load Balancing for your load balancing needs.

Which technique should you use to solve this problem?

- A. Create deployable versioned bundles of your application. Store the bundle on Amazon S3. Re-deploy your web application on Elastic Beanstalk and enable the Elastic Beanstalk auto-rollback feature tied to CloudWatch metrics that define failure.

- B. Use an AWS OpsWorks stack to re-deploy your web application and use the AWS OpsWorks DeploymentCommand to initiate a rollback during failures.

- C. Create deployable versioned bundles of your application. Store the bundle on Amazon S3. Use an AWS OpsWorks stack to redeploy your web application and use AWS OpsWorks application versioning to initiate a rollback during failures.

- D. Using Elastic Beanstalk, redeploy your web application and use the Elastic Beanstalk API to trigger a FailedDeployment API call to initiate a rollback to the previous version.

Answer: B

Explanation:

The AWS Documentation mentions the following

The AWS OpsWorks DeploymentCommand includes a rollback option. The following commands are available for apps:

deploy: Deploy App.

Ruby on Rails apps have an optional args parameter named migrate. Set Args to {"migrate":["true"]} to migrate the database.

The default setting is {"migrate":["false"]}.

rollback: Rolls the app back to the previous version.

When you update an app, AWS OpsWorks stores the previous versions, up to a maximum of five versions.

You can use this command to roll an app back as many as four versions. Reference Link:

• http://docs.aws.amazon.com/opsworks/latest/APIReference/API_DeploymentCommand.html
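The DeploymentCommand values above are passed to the OpsWorks CreateDeployment API (boto3 `create_deployment`). A minimal sketch that builds the request arguments for a rollback and for a Rails deploy with migrations; the stack and app IDs are hypothetical, and the call itself is left as a comment.

```python
def rollback_deployment_args(stack_id: str, app_id: str) -> dict:
    """Arguments for OpsWorks create_deployment that roll an app back one version."""
    return {
        "StackId": stack_id,
        "AppId": app_id,
        "Command": {"Name": "rollback"},
        "Comment": "Roll back failed deployment",
    }


def deploy_with_migration_args(stack_id: str, app_id: str) -> dict:
    """Deploy command with the Ruby on Rails 'migrate' argument enabled."""
    return {
        "StackId": stack_id,
        "AppId": app_id,
        "Command": {"Name": "deploy", "Args": {"migrate": ["true"]}},
    }


# Hypothetical identifiers; with credentials configured:
#   import boto3
#   boto3.client("opsworks").create_deployment(
#       **rollback_deployment_args("example-stack-id", "example-app-id"))
```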

NEW QUESTION 5

Your company owns multiple AWS accounts. There is currently one development and one production account. You need to grant access to the development team to an S3 bucket in the production account. How can you achieve this?

- A. Create an IAM user in the Production account that allows users from the Development account (the trusted account) to access the S3 bucket in the Production account.

- B. When creating the role, define the Development account as a trusted entity and specify a permissions policy that allows trusted users to update the S3 bucket.

- C. Use web identity federation with a third-party identity provider with AWS STS to grant temporary credentials and membership into the production IAM user.

- D. Create an IAM cross-account role in the Production account that allows users from the Development account to access the S3 bucket in the Production account.

Answer: D

Explanation:

The AWS Documentation mentions the following on cross account roles

You can use AWS Identity and Access Management (IAM) roles and AWS Security Token Service (STS) to set up cross-account access between AWS accounts. When you assume an IAM role in another AWS account to obtain cross-account access to services and resources in that account, AWS CloudTrail logs the cross-account activity. For more information on cross-account roles, please visit the below URL:

• http://docs.aws.amazon.com/IAM/latest/UserGuide/tutorial_cross-account-with-roles.html
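A cross-account role needs two policies: a trust policy that names the Development account, and a permissions policy for the bucket. A minimal sketch that builds both as JSON documents; the account ID and bucket name are hypothetical, and the S3 actions listed are an example rather than a required set.

```python
import json

DEV_ACCOUNT_ID = "111122223333"  # hypothetical Development account ID
BUCKET = "example-prod-bucket"  # hypothetical bucket in the Production account


def trust_policy(trusted_account_id: str) -> dict:
    """Trust policy for a Production role that Development principals may assume."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"AWS": f"arn:aws:iam::{trusted_account_id}:root"},
            "Action": "sts:AssumeRole",
        }],
    }


def bucket_access_policy(bucket: str) -> dict:
    """Permissions policy attached to the role, scoped to a single bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Action": ["s3:ListBucket", "s3:GetObject", "s3:PutObject"],
            # ListBucket applies to the bucket ARN, object actions to the /* ARN.
            "Resource": [f"arn:aws:s3:::{bucket}", f"arn:aws:s3:::{bucket}/*"],
        }],
    }


print(json.dumps(trust_policy(DEV_ACCOUNT_ID), indent=2))
print(json.dumps(bucket_access_policy(BUCKET), indent=2))
```

Developers in the Development account would then call STS `AssumeRole` with the role's ARN to obtain temporary credentials for the bucket.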

NEW QUESTION 6

Your application uses CloudFormation to orchestrate your application's resources. During your testing phase before the application went live, your Amazon RDS instance type was changed and caused the instance to be re-created, resulting in the loss of test data. How should you prevent this from occurring in the future?

- A. Within the AWS CloudFormation parameter with which users can select the Amazon RDS instance type, set AllowedValues to only contain the current instance type.

- B. Use an AWS CloudFormation stack policy to deny updates to the instance. Only allow UpdateStack permission to IAM principals that are denied SetStackPolicy.

- C. In the AWS CloudFormation template, set the AWS::RDS::DBInstance's DBInstanceClass property to be read-only.

- D. Subscribe to the AWS CloudFormation notification "BeforeResourceUpdate," and call CancelStackUpdate if the resource identified is the Amazon RDS instance.

- E. Update the stack using ChangeSets.

Answer: E

Explanation:

When you need to update a stack, understanding how your changes will affect running resources before you implement them can help you update stacks with confidence. Change sets allow you to preview how proposed changes to a stack might impact your running resources, for example, whether your changes will delete or replace any critical resources. AWS CloudFormation makes the changes to your stack only when you decide to execute the change set, allowing you to decide whether to proceed with your proposed changes or explore other changes by creating another change set.

For example, you can use a change set to verify that AWS CloudFormation won't replace your stack's database instances during an update.
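That check can be automated in a pipeline. A minimal sketch, assuming the response of the DescribeChangeSet API (boto3 `describe_change_set`) has already been fetched: it lists resources whose `Replacement` field is `True` or `Conditional`, so the pipeline could refuse to call `execute_change_set` when the database appears. The sample response and logical IDs are hypothetical, modeled on the scenario in the question.

```python
def replaced_resources(change_set: dict) -> list[str]:
    """Logical IDs that a change set would (or might) replace."""
    flagged = []
    for change in change_set.get("Changes", []):
        rc = change.get("ResourceChange", {})
        if rc.get("Action") == "Modify" and rc.get("Replacement") in ("True", "Conditional"):
            flagged.append(rc["LogicalResourceId"])
    return flagged


# Hypothetical excerpt of a describe_change_set response in which the
# database would be replaced, as happened in the scenario above.
sample = {
    "Changes": [
        {"Type": "Resource", "ResourceChange": {
            "Action": "Modify", "LogicalResourceId": "AppDatabase",
            "ResourceType": "AWS::RDS::DBInstance", "Replacement": "True"}},
        {"Type": "Resource", "ResourceChange": {
            "Action": "Modify", "LogicalResourceId": "WebServerGroup",
            "ResourceType": "AWS::AutoScaling::AutoScalingGroup", "Replacement": "False"}},
    ]
}
print(replaced_resources(sample))  # ['AppDatabase']
```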

NEW QUESTION 7

You have an Auto Scaling group configured to launch EC2 instances for your application, but you notice that the Auto Scaling group is not launching instances in the right proportion. In fact, instances are being launched too fast. What can you do to mitigate this issue? Choose 2 answers from the options given below.

- A. Adjust the cooldown period set for the Autoscaling Group

- B. Set a custom metric which monitors a key application functionality for the scale-in and scale-out process.

- C. Adjust the CPU threshold set for the Autoscaling scale-in and scale-out process.

- D. Adjust the Memory threshold set for the Autoscaling scale-in and scale-out process.

Answer: AB

Explanation:

The Auto Scaling cooldown period is a configurable setting for your Auto Scaling group that helps to ensure that Auto Scaling doesn't launch or terminate additional instances before the previous scaling activity takes effect.

For more information on the cool down period, please refer to the below link:

• http://docs.aws.amazon.com/autoscaling/latest/userguide/Cooldown.html
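A toy model shows why a longer cooldown slows launches. This sketch is a simplification, assuming a simple scaling policy where an alarm trigger starts a scaling activity only after the previous activity's cooldown has expired; it does not model other policy types or instance warm-up. The trigger times are hypothetical. On a real group the value is set through the `DefaultCooldown` parameter of the UpdateAutoScalingGroup API.

```python
def scaling_events(trigger_times: list[int], cooldown: int) -> list[int]:
    """Return the trigger times (seconds) that actually start a scaling activity."""
    executed = []
    next_allowed = None
    for t in sorted(trigger_times):
        if next_allowed is None or t >= next_allowed:
            executed.append(t)
            next_allowed = t + cooldown  # ignore triggers until the cooldown ends
    return executed


triggers = [0, 60, 200, 400]  # seconds at which a high-load alarm fires
print(scaling_events(triggers, cooldown=0))    # [0, 60, 200, 400]
print(scaling_events(triggers, cooldown=300))  # [0, 400]
```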

It is also better to monitor the application based on a key feature and trigger the scale-in and scale-out process accordingly. The question does not mention CPU or memory as the cause of the issue.

NEW QUESTION 8

You have a development team that is continuously spending a lot of time rolling back updates for an application. They work on changes, and if a change fails, they spend 5-6 hours rolling back the update. Which of the below options can help reduce the time needed to roll back application versions?

- A. Use Elastic Beanstalk and re-deploy using Application Versions

- B. Use S3 to store each version and then re-deploy with Elastic Beanstalk

- C. Use CloudFormation and update the stack with the previous template

- D. Use OpsWorks and re-deploy using rollback feature.

Answer: A

Explanation:

Option B is invalid because Elastic Beanstalk already has the facility to manage various versions, and you don't need to use S3 separately for this.

Option C is invalid because in CloudFormation you would need to maintain the versions yourself. Elastic Beanstalk can do that automatically for you.

Option D is good for production scenarios, and Elastic Beanstalk is great for development scenarios. AWS Elastic Beanstalk is the perfect solution for developers to maintain application versions.

With AWS Elastic Beanstalk, you can quickly deploy and manage applications in the AWS Cloud without worrying about the infrastructure that runs those applications. AWS Elastic Beanstalk reduces management complexity without restricting choice or control. You simply upload your application, and AWS Elastic Beanstalk automatically handles the details of capacity provisioning, load balancing, scaling, and application health monitoring.

For more information on AWS Beanstalk please refer to the below link: https://aws.amazon.com/documentation/elastic-beanstalk/
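Rolling back with application versions amounts to pointing the environment at an earlier version label through the UpdateEnvironment API (boto3 `update_environment`). A minimal sketch, assuming the version list has the `VersionLabel` and `DateCreated` fields returned by `describe_application_versions`; the environment name and versions are hypothetical.

```python
from datetime import datetime


def previous_version(versions: list[dict], current_label: str) -> str:
    """Return the label of the version created just before current_label."""
    ordered = sorted(versions, key=lambda v: v["DateCreated"])
    labels = [v["VersionLabel"] for v in ordered]
    position = labels.index(current_label)
    if position == 0:
        raise ValueError("no earlier version to roll back to")
    return labels[position - 1]


def redeploy_args(environment_name: str, version_label: str) -> dict:
    """Arguments for Elastic Beanstalk update_environment to redeploy a version."""
    return {"EnvironmentName": environment_name, "VersionLabel": version_label}


versions = [  # hypothetical describe_application_versions excerpt
    {"VersionLabel": "v3", "DateCreated": datetime(2017, 3, 1)},
    {"VersionLabel": "v1", "DateCreated": datetime(2017, 1, 1)},
    {"VersionLabel": "v2", "DateCreated": datetime(2017, 2, 1)},
]
print(redeploy_args("my-app-dev", previous_version(versions, "v3")))
# {'EnvironmentName': 'my-app-dev', 'VersionLabel': 'v2'}
```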

NEW QUESTION 9

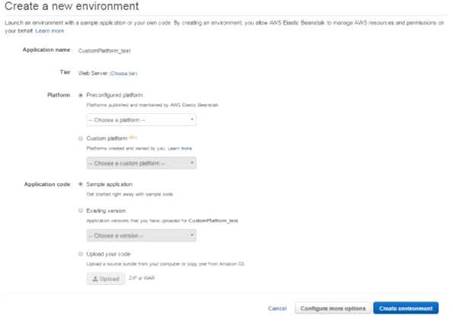

When creating an Elastic Beanstalk environment using the wizard, what are the 3 configuration options presented to you?

- A. Choosing the type of environment - Web or Worker environment

- B. Choosing the platform type - Node.js, IIS, etc.

- C. Choosing the type of Notification - SNS or SQS

- D. Choosing whether you want a highly available environment or not

Answer: ABD

Explanation:

The wizard presents these choices when you create an Elastic Beanstalk environment.

The high availability preset includes a load balancer; the low cost preset does not. For more information on the configuration settings, please refer to the below link: http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/environments-create-wizard.html
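The three wizard choices correspond to parameters of the Elastic Beanstalk CreateEnvironment API (boto3 `create_environment`). A sketch under the assumption that the availability preset maps to the `EnvironmentType` option (`LoadBalanced` or `SingleInstance`) in the `aws:elasticbeanstalk:environment` namespace; the application, environment, and solution stack names are hypothetical, and valid stack names come from `list_available_solution_stacks`.

```python
def create_environment_args(app: str, env: str, solution_stack: str,
                            worker: bool, high_availability: bool) -> dict:
    """Arguments for create_environment mirroring the three wizard choices."""
    # Choice 1: environment tier (web server or worker).
    tier = ({"Name": "Worker", "Type": "SQS/HTTP"} if worker
            else {"Name": "WebServer", "Type": "Standard"})
    return {
        "ApplicationName": app,
        "EnvironmentName": env,
        # Choice 2: platform, expressed as a solution stack name.
        "SolutionStackName": solution_stack,
        "Tier": tier,
        # Choice 3: availability preset; LoadBalanced adds a load balancer.
        "OptionSettings": [{
            "Namespace": "aws:elasticbeanstalk:environment",
            "OptionName": "EnvironmentType",
            "Value": "LoadBalanced" if high_availability else "SingleInstance",
        }],
    }


args = create_environment_args("my-app", "my-app-web", "example-nodejs-stack",
                               worker=False, high_availability=True)
```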

NEW QUESTION 10

When building a multicontainer Docker platform using Elastic Beanstalk, which of the following is required?

- A. Dockerfile to create custom images during deployment

- B. Prebuilt Images stored in a public or private online image repository.

- C. Kubernetes to manage the docker containers.

- D. Red Hat OpenShift to manage the docker containers.

Answer: B

Explanation:

This is a special note given in the AWS Documentation for Multicontainer Docker platform for Elastic Beanstalk

Building custom images during deployment with a Dockerfile is not supported by the multicontainer Docker platform on Elastic Beanstalk. Build your images and deploy them to an online repository before creating an Elastic Beanstalk environment.

For more information on Multicontainer Docker platform for Elastic Beanstalk, please refer to the below link:

http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/create_deploy_docker_ecs.html
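On this platform the containers are described in a `Dockerrun.aws.json` file (version 2), where every container points at an existing image instead of a Dockerfile. A minimal sketch that generates such a file; the image names and memory sizes are hypothetical, and real files often add fields such as `portMappings` and `links`.

```python
import json


def dockerrun_v2(containers: list[tuple[str, str, int]]) -> dict:
    """Build a Dockerrun.aws.json (version 2) from (name, image, memory MB) tuples.
    Each image must already exist in a registry; nothing is built at deploy time."""
    return {
        "AWSEBDockerrunVersion": 2,
        "containerDefinitions": [
            {
                "name": name,
                "image": image,  # prebuilt image pulled from a repository
                "essential": True,
                "memory": memory_mb,
            }
            for name, image, memory_mb in containers
        ],
    }


config = dockerrun_v2([
    ("web", "example-org/web-frontend:1.4", 256),  # hypothetical images
    ("api", "123456789012.dkr.ecr.us-east-1.amazonaws.com/api:1.4", 512),
])
print(json.dumps(config, indent=2))
```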

NEW QUESTION 11

You have a web application that is currently running on three M3 instances in three AZs. You have an Auto Scaling group configured to scale from three to thirty instances. When reviewing your CloudWatch metrics, you see that sometimes your Auto Scaling group is hosting fifteen instances. The web application is reading and writing to a DynamoDB-configured backend configured with 800 Write Capacity Units and 800 Read Capacity Units. Your DynamoDB primary key is the Company ID. You are hosting 25 TB of data in your web application. You have a single customer who is complaining of long load times when their staff arrives at the office at 9:00 AM and loads the website, which consists of content that is pulled from DynamoDB. You have other customers who routinely use the web application. Choose the answer that will ensure high availability and reduce the customer's access times.

- A. Add a caching layer in front of your web application by choosing ElastiCache Memcached instances in one of the AZs.

- B. Double the number of Read Capacity Units in your DynamoDB instance because the instance is probably being throttled when the customer accesses the website and your web application.

- C. Change your Auto Scaling group configuration to use Amazon C3 instance types, because the web application layer is probably running out of compute capacity.

- D. Implement an Amazon SQS queue between your DynamoDB database layer and the web application layer to minimize the large burst in traffic the customer generates when everyone arrives at the office at 9:00 AM and begins accessing the website.

- E. Use data pipelines to migrate your DynamoDB table to a new DynamoDB table with a primary key that is evenly distributed across your dataset. Update your web application to request data from the new table.

Answer: E

Explanation:

The AWS documentation provides the following information on getting the best performance from DynamoDB tables:

The optimal usage of a table's provisioned throughput depends on these factors: the primary key selection and the workload patterns on individual items. The primary key uniquely identifies each item in a table. The primary key can be simple (partition key) or composite (partition key and sort key). When it stores data, DynamoDB divides a table's items into multiple partitions, and distributes the data primarily based upon the partition key value. Consequently, to achieve the full amount of request throughput you have provisioned for a table, keep your workload spread evenly across the partition key values. Distributing requests across partition key values distributes the requests across partitions. For more information on DynamoDB best practices, please visit the link:

• http://docs.aws.amazon.com/amazondynamodb/latest/developerguide/GuidelinesForTables.html
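The even-distribution guidance can be illustrated with a toy model. This sketch is not DynamoDB's actual partitioning scheme: it uses SHA-256 as a stand-in hash, and it demonstrates write sharding (appending a suffix to the Company ID), which is one known way to spread a hot key and not necessarily the key design the answer intends. The key format `company-42#N` is hypothetical. The trade-off is that reads for one company must then query every suffix.

```python
import hashlib
from collections import Counter


def partition_for(key: str, partitions: int) -> int:
    """Toy stand-in for DynamoDB's internal hash: map a key to a partition."""
    digest = hashlib.sha256(key.encode()).hexdigest()
    return int(digest, 16) % partitions


def sharded_key(company_id: str, request_number: int, shards: int) -> str:
    """Write sharding: append a suffix so one company's items span many keys."""
    return f"{company_id}#{request_number % shards}"


requests = 1000  # burst from the single 9:00 AM customer
plain_keys = ["company-42"] * requests
sharded_keys = [sharded_key("company-42", i, shards=10) for i in range(requests)]

# Hottest key: all traffic on one key vs. spread over 10 keys.
print(max(Counter(plain_keys).values()))    # 1000
print(max(Counter(sharded_keys).values()))  # 100
# Partitions touched by the burst (toy hash, 8 partitions).
print(len({partition_for(k, 8) for k in plain_keys}))  # 1
print(len({partition_for(k, 8) for k in sharded_keys}))
```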

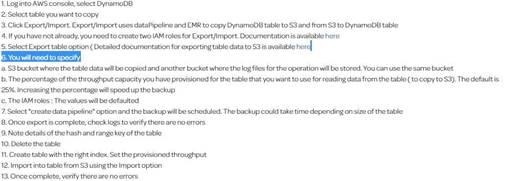

Note: One of the AWS forums explains the steps for this process in detail. Based on that, while importing data from S3 into a new DynamoDB table using AWS Data Pipeline, we can create a new index. Please find the steps given below.

NEW QUESTION 12

Your company is getting ready to do a major public announcement of a social media site on AWS. The website is running on EC2 instances deployed across multiple Availability Zones with a Multi-AZ RDS MySQL Extra Large DB Instance. The site performs a high number of small reads and writes per second and relies on an eventual consistency model. After comprehensive tests you discover that there is read contention on RDS MySQL. Which are the best approaches to meet these requirements? Choose 2 answers from the options below

- A. Deploy ElastiCache in-memory cache running in each availability zone

- B. Implement sharding to distribute load to multiple RDS MySQL instances

- C. Increase the RDS MySQL Instance size and Implement provisioned IOPS

- D. Add an RDS MySQL read replica in each availability zone

Answer: AD

Explanation:

Implement Read Replicas and ElastiCache

Amazon RDS Read Replicas provide enhanced performance and durability for database (DB) instances. This replication feature makes it easy to elastically scale out beyond the capacity constraints of a single DB Instance for read-heavy database workloads. You can create one or more replicas of a given source DB Instance and serve high-volume application read traffic from multiple copies of your data, thereby increasing aggregate read throughput.

For more information on Read Replicas, please visit the below link:

• https://aws.amazon.com/rds/details/read-replicas/

Amazon ElastiCache is a web service that makes it easy to deploy, operate, and scale an in-memory data store or cache in the cloud. The service improves the performance of web applications by allowing you to retrieve information from fast, managed, in-memory data stores, instead of relying entirely on slower disk-based databases.

For more information on Amazon ElastiCache, please visit the below link:

• https://aws.amazon.com/elasticache/
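ElastiCache is commonly used with a lazy-loading (cache-aside) pattern, which suits the eventual-consistency model in the question. A minimal sketch in which a plain dict stands in for the ElastiCache cluster and a hypothetical `query_mysql` function stands in for the RDS read; the TTL bounds how stale a cached value can become.

```python
import time


class CacheAside:
    """Lazy-loading reads: check the cache, fall back to the database on a miss,
    then populate the cache with a TTL. A dict stands in for ElastiCache."""

    def __init__(self, load_from_db, ttl_seconds: float = 60.0):
        self._load = load_from_db
        self._ttl = ttl_seconds
        self._store: dict[str, tuple[float, object]] = {}

    def get(self, key: str):
        entry = self._store.get(key)
        if entry is not None and entry[0] > time.monotonic():
            return entry[1]  # cache hit: no database read
        value = self._load(key)  # cache miss: read from RDS
        self._store[key] = (time.monotonic() + self._ttl, value)
        return value


db_reads = []


def query_mysql(key: str) -> str:
    """Hypothetical database lookup that records each call."""
    db_reads.append(key)
    return f"profile for {key}"


cache = CacheAside(query_mysql, ttl_seconds=30)
cache.get("user:1")
cache.get("user:1")
print(len(db_reads))  # 1
```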

NEW QUESTION 13

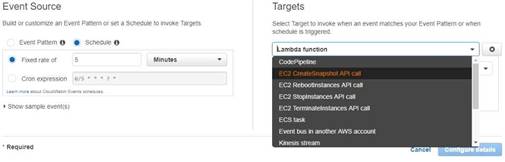

You have a requirement to automate the creation of EBS Snapshots. Which of the following can be used to achieve this in the best way possible?

- A. Create a PowerShell script which uses the AWS CLI to get the volumes and then run the script as a cron job.

- B. Use the AWS Config service to create a snapshot of the AWS Volumes

- C. Use the AWS CodeDeploy service to create a snapshot of the AWS Volumes

- D. Use CloudWatch Events to trigger the snapshots of EBS Volumes

Answer: D

Explanation:

The best approach is to use the built-in CloudWatch Events service to automate the creation of EBS snapshots. With Option A, you would be restricted to running the PowerShell script on Windows machines and maintaining the script yourself, and you would have the overhead of a separate instance just to run that script.

In CloudWatch Events, you can choose the EC2 CreateSnapshot API call as the target, as shown below.

The AWS Documentation mentions

Amazon CloudWatch Events delivers a near real-time stream of system events that describe changes in Amazon Web Services (AWS) resources. Using simple rules that you can quickly set up, you can match events and route them to one or more target functions or streams. CloudWatch Events becomes aware of operational changes as they occur. CloudWatch Events responds to these operational changes and takes corrective action as necessary, by sending messages to respond to the environment, activating functions, making changes, and capturing state information.

For more information on CloudWatch Events, please visit the below URL:

• http://docs.aws.amazon.com/AmazonCloudWatch/latest/events/WhatIsCloudWatchEvents.html
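A scheduled CloudWatch Events rule is created with the PutRule API (boto3 `put_rule` on the `events` client). A minimal sketch that builds the rule arguments; the rule name is hypothetical, and the EC2 CreateSnapshot target is attached separately with `put_targets`, whose target ARN and input are not reproduced here.

```python
def snapshot_schedule_rule(name: str, days: int) -> dict:
    """Arguments for events put_rule: a scheduled rule firing every `days` days."""
    unit = "day" if days == 1 else "days"  # rate() uses singular for a value of 1
    return {
        "Name": name,
        "ScheduleExpression": f"rate({days} {unit})",
        "State": "ENABLED",
        "Description": "Periodic EBS snapshot trigger",
    }


rule = snapshot_schedule_rule("daily-ebs-snapshot", 1)
# With credentials configured:
#   import boto3
#   boto3.client("events").put_rule(**rule)
```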

NEW QUESTION 14

Which of the following are ways to ensure that data is secured in transit when using the AWS Elastic Load Balancer? Choose 2 answers from the options given below.

- A. Use a TCP front end listener for your ELB

- B. Use an SSL front end listener for your ELB

- C. Use an HTTP front end listener for your ELB

- D. Use an HTTPS front end listener for your ELB

Answer: BD

Explanation:

The AWS documentation mentions the following

You can create a load balancer that uses the SSL/TLS protocol for encrypted connections (also known as SSL offload). This feature enables traffic encryption between your load balancer and the clients that initiate HTTPS sessions, and for connections between your load balancer and your EC2 instances.

For more information on Elastic Load balancer and secure listeners, please refer to the below link: http://docs.aws.amazon.com/elasticloadbalancing/latest/classic/elb-https-load-balancers.html
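For a Classic Load Balancer, an HTTPS front-end listener is added with the CreateLoadBalancerListeners API (boto3 `create_load_balancer_listeners` on the `elb` client). A minimal sketch of one listener entry; the certificate ARN and load balancer name are hypothetical. Setting `encrypt_backend` also encrypts traffic from the load balancer to the instances, in which case the instance port would typically be 443.

```python
def https_listener(certificate_arn: str, instance_port: int = 80,
                   encrypt_backend: bool = False) -> dict:
    """One listener entry: HTTPS on the front end, HTTP or HTTPS to the instances."""
    return {
        "Protocol": "HTTPS",
        "LoadBalancerPort": 443,
        "InstanceProtocol": "HTTPS" if encrypt_backend else "HTTP",
        "InstancePort": instance_port,
        "SSLCertificateId": certificate_arn,
    }


listener = https_listener("arn:aws:acm:us-east-1:123456789012:certificate/example")
# With credentials configured:
#   import boto3
#   boto3.client("elb").create_load_balancer_listeners(
#       LoadBalancerName="my-elb", Listeners=[listener])
```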

NEW QUESTION 15

You are using Auto Scaling to manage the instances in your AWS environment. You need to deploy a new version of your application. You'd prefer to use all new instances if possible, but you cannot have any downtime. You also don't want to swap any environment URLs. Which of the following deployment methods would you implement?

- A. Using "All at once" deployment method.

- B. Using "Blue Green" deployment method.

- C. Using "Rolling Updates" deployment method.

- D. Using "Blue Green" with "All at once" deployment method.

Answer: C

Explanation:

In a rolling deployment, you can specify a new set of servers that replaces the existing set of servers. The replacement happens in a phased manner.

Since there is a requirement not to swap URLs, you must not use Blue Green deployments.

For more information on the differences between Rolling Updates and Blue Green deployments, please refer to the below URL:

• https://cloudnative.io/docs/blue-green-deployment/
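If the Auto Scaling group happens to be managed by CloudFormation (an assumption; the question does not say how it is provisioned), a rolling replacement can be requested with an `AutoScalingRollingUpdate` update policy on the group. A minimal sketch that builds that policy; the batch size, minimum in service, and pause time are illustrative values.

```python
import json


def rolling_update_policy(min_in_service: int, max_batch: int, pause: str) -> dict:
    """UpdatePolicy for an AWS::AutoScaling::AutoScalingGroup that replaces
    instances in batches while keeping some instances in service."""
    return {
        "AutoScalingRollingUpdate": {
            "MinInstancesInService": str(min_in_service),
            "MaxBatchSize": str(max_batch),
            "PauseTime": pause,  # ISO 8601 duration to wait between batches
        }
    }


policy = rolling_update_policy(min_in_service=2, max_batch=1, pause="PT5M")
print(json.dumps({"UpdatePolicy": policy}, indent=2))
```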

NEW QUESTION 16

Which of the below is not a lifecycle event in OpsWorks?

- A. Setup

- B. Uninstall

- C. Configure

- D. Shutdown

Answer: B

Explanation:

Below are the lifecycle events of AWS OpsWorks Stacks:

1) Setup - This event occurs after a started instance has finished booting.

2) Configure - This event occurs on all of the stack's instances when one of the following occurs:

a) An instance enters or leaves the online state.

b) You associate an Elastic IP address with an instance or disassociate one from an instance.

c) You attach an Elastic Load Balancing load balancer to a layer, or detach one from a layer.

3) Deploy - This event occurs when you run a Deploy command, typically to deploy an application to a set of application server instances.

4) Undeploy - This event occurs when you delete an app or run an Undeploy command to remove an app from a set of application server instances.

5) Shutdown - This event occurs after you direct AWS OpsWorks Stacks to shut an instance down but before the associated Amazon EC2 instance is actually terminated.

For more information on OpsWorks lifecycle events, please visit the below URL:

• http://docs.aws.amazon.com/opsworks/latest/userguide/workingcookbook-events.html

NEW QUESTION 17

You have enabled Elastic Load Balancing HTTP health checking. After looking at the AWS Management Console, you see that all instances are passing health checks, but your customers are reporting that your site is not responding. What is the cause?

- A. The HTTP health checking system is misreporting due to latency in inter-instance metadata synchronization.

- B. The health check in place is not sufficiently evaluating the application function.

- C. The application is returning a positive health check too quickly for the AWS Management Console to respond.

- D. Latency in DNS resolution is interfering with Amazon EC2 metadata retrieval.

Answer: B

Explanation:

You need a custom health check that evaluates the application's functionality; the standard health checks are not enough. If the application functionality does not work and you don't have custom health checks, the instances will still be deemed healthy.

If you have custom health checks, you can send the information from your health checks to Auto Scaling so that Auto Scaling can use this information. For example, if you determine that an instance is not functioning as expected, you can set the health status of the instance to Unhealthy. The next time that Auto Scaling performs a health check on the instance, it will determine that the instance is unhealthy and then launch a replacement instance.

For more information on Auto Scaling health checks, please refer to the below link from AWS:

http://docs.aws.amazon.com/autoscaling/latest/userguide/healthcheck.html
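A deeper health check can report failures back to Auto Scaling through the SetInstanceHealth API (boto3 `set_instance_health`). A minimal sketch, assuming the application exposes its own checks as Python callables; the check names and instance ID are hypothetical, and the API call is left as a comment so the code runs without AWS credentials.

```python
from typing import Callable, Optional


def deep_health_check(checks: dict[str, Callable[[], bool]]) -> list[str]:
    """Run application-level checks and return the names of those that failed."""
    return [name for name, check in checks.items() if not check()]


def unhealthy_report_args(instance_id: str, failures: list[str]) -> Optional[dict]:
    """Arguments for Auto Scaling set_instance_health, or None if all checks passed."""
    if not failures:
        return None
    return {"InstanceId": instance_id, "HealthStatus": "Unhealthy"}


checks = {  # hypothetical application checks
    "database_reachable": lambda: True,
    "homepage_renders": lambda: False,
}
failures = deep_health_check(checks)
print(failures)  # ['homepage_renders']
report = unhealthy_report_args("i-0123456789abcdef0", failures)
# With credentials configured:
#   import boto3
#   if report:
#       boto3.client("autoscaling").set_instance_health(**report)
```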

NEW QUESTION 18

You are using Elastic Beanstalk for your development team. You are responsible for deploying multiple versions of your application. How can you ensure, in an ideal way, that you don't cross the application version limit in Elastic Beanstalk?

- A. Create a Lambda function to delete the older versions.

- B. Create a script to delete the older versions.

- C. Use AWS Config to delete the older versions

- D. Use lifecycle policies in Elastic Beanstalk

Answer: D

Explanation:

The AWS Documentation mentions

Each time you upload a new version of your application with the Elastic Beanstalk console or the EB CLI, Elastic Beanstalk creates an application version. If you don't delete versions that you no longer use, you will eventually reach the application version limit and be unable to create new versions of that application.

You can avoid hitting the limit by applying an application version lifecycle policy to your applications.

A lifecycle policy tells Elastic Beanstalk to delete application versions that are old, or to delete application versions when the total number of versions for an application exceeds a specified number.

For more information on Elastic Beanstalk lifecycle policies please see the below link:

• http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/applications-lifecycle.html
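The same policy can be applied through the UpdateApplicationResourceLifecycle API (boto3 `update_application_resource_lifecycle`). A minimal sketch of a count-based rule that deletes the oldest versions once the total exceeds a limit; the application name, service role ARN, and limit are hypothetical.

```python
def version_lifecycle_args(app: str, service_role_arn: str, max_count: int) -> dict:
    """Arguments that keep at most max_count application versions."""
    return {
        "ApplicationName": app,
        "ResourceLifecycleConfig": {
            "ServiceRole": service_role_arn,
            "VersionLifecycleConfig": {
                "MaxCountRule": {
                    "Enabled": True,
                    "MaxCount": max_count,
                    "DeleteSourceFromS3": False,  # keep the source bundles in S3
                },
            },
        },
    }


args = version_lifecycle_args(
    "my-app", "arn:aws:iam::123456789012:role/example-eb-service-role", 200
)
# With credentials configured:
#   import boto3
#   boto3.client("elasticbeanstalk").update_application_resource_lifecycle(**args)
```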

NEW QUESTION 19

You work for an accounting firm and need to store important financial data for clients. Initial frequent access to data is required, but after a period of 2 months, the data can be archived and brought back only in the case of an audit. What is the most cost-effective way to do this?

- A. Store all data in Glacier

- B. Store all data in a private S3 bucket

- C. Use lifecycle management to store all data in Glacier

- D. Use lifecycle management to move data from S3 to Glacier

Answer: D

Explanation:

The AWS Documentation mentions the following

Lifecycle configuration enables you to specify the lifecycle management of objects in a bucket. The configuration is a set of one or more rules, where each rule defines an action for Amazon S3 to apply to a group of objects. These actions can be classified as follows:

Transition actions - In which you define when objects transition to another storage class. For example, you may choose to transition objects to the STANDARD_IA (IA, for infrequent access) storage class 30 days after creation, or archive objects to the GLACIER storage class one year after creation.

Expiration actions - In which you specify when the objects expire. Then Amazon S3 deletes the expired objects on your behalf. For more information on S3 Lifecycle policies, please visit the below URL:

• http://docs.aws.amazon.com/AmazonS3/latest/dev/object-lifecycle-mgmt.html
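For this scenario, a transition rule could be expressed as the lifecycle configuration accepted by the PutBucketLifecycleConfiguration API (boto3 `put_bucket_lifecycle_configuration`). A sketch assuming "2 months" is approximated as 60 days and that the rule should cover every object in a hypothetical bucket.

```python
def archive_after_days(days: int) -> dict:
    """Lifecycle configuration that moves every object to Glacier after `days` days."""
    return {
        "Rules": [{
            "ID": f"archive-after-{days}-days",
            "Filter": {"Prefix": ""},  # empty prefix: apply to all objects
            "Status": "Enabled",
            "Transitions": [{"Days": days, "StorageClass": "GLACIER"}],
        }]
    }


config = archive_after_days(60)  # "2 months" approximated as 60 days
# With credentials configured:
#   import boto3
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="example-client-records", LifecycleConfiguration=config)
```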

NEW QUESTION 20

Your company has an e-commerce platform that is expanding all over the globe. You have EC2 instances deployed in multiple regions, and you want to monitor the performance of all of these EC2 instances. How will you set up CloudWatch to monitor EC2 instances in multiple regions?

- A. Create separate dashboards in every region

- B. Register instances running in different regions to CloudWatch

- C. Have one single dashboard to report metrics to CloudWatch from different regions

- D. This is not possible

Answer: C

Explanation:

You can monitor AWS resources in multiple regions using a single CloudWatch dashboard. For example, you can create a dashboard that shows CPU utilization for an EC2 instance located in the us-west-2 region with your billing metrics, which are located in the us-east-1 region.

For more information on CloudWatch dashboards, please refer to the below URL: http://docs.aws.amazon.com/AmazonCloudWatch/latest/monitoring/cross_region_dashboard.html
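Cross-region dashboards work because each metric widget carries its own `region` property. A minimal sketch that builds a dashboard body for the PutDashboard API (boto3 `put_dashboard` on the `cloudwatch` client); the instance IDs, regions, and dashboard name are hypothetical.

```python
import json


def cpu_widget(instance_id: str, region: str, x: int) -> dict:
    """A metric widget; the per-widget "region" lets one dashboard span regions."""
    return {
        "type": "metric",
        "x": x, "y": 0, "width": 12, "height": 6,
        "properties": {
            "metrics": [["AWS/EC2", "CPUUtilization", "InstanceId", instance_id]],
            "region": region,
            "stat": "Average",
            "period": 300,
            "title": f"CPU {instance_id} ({region})",
        },
    }


body = {"widgets": [
    cpu_widget("i-0aaaaaaaaaaaaaaaa", "us-west-2", 0),  # hypothetical instances
    cpu_widget("i-0bbbbbbbbbbbbbbbb", "eu-west-1", 12),
]}
dashboard_body = json.dumps(body)
# With credentials configured:
#   import boto3
#   boto3.client("cloudwatch").put_dashboard(
#       DashboardName="global-ec2", DashboardBody=dashboard_body)
```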

NEW QUESTION 21

As part of your deployment pipeline, you want to enable automated testing of your AWS CloudFormation template. What testing should be performed to enable faster feedback while minimizing costs and risk? Select three answers from the options given below

- A. Use the AWS CloudFormation Validate Template to validate the syntax of the template.

- B. Use the AWS CloudFormation Validate Template to validate the properties of resources defined in the template.

- C. Validate the template's syntax using a general JSON parser.

- D. Validate the AWS CloudFormation template against the official XSD schema definition published by Amazon Web Services.

- E. Update the stack with the template. If the template fails, rollback will return the stack and its resources to exactly the same state.

- F. When creating the stack, specify an Amazon SNS topic to which your testing system is subscribed. Your testing system runs tests when it receives notification that the stack is created or updated.

Answer: AEF

Explanation:

The AWS documentation mentions the following

The aws cloudformation validate-template command is designed to check only the syntax of your template. It does not ensure that the property values that you have specified for a resource are valid for that resource. Nor does it determine the number of resources that will exist when the stack is created.

To check the operational validity, you need to attempt to create the stack. There is no sandbox or test area for AWS CloudFormation stacks, so you are charged for the resources you create during testing. Option F is needed for notification.

For more information on CloudFormation template validation, please visit the link:

http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/using-cfn-validate-template.html

NEW QUESTION 22

Which of the following is incorrect when it comes to using the instances in an OpsWorks stack?

- A. In a stack you can use a mix of both Windows and Linux operating systems

- B. You can start and stop instances manually in a stack

- C. You can use custom AMIs as long as they are based on one of the AWS OpsWorks Stacks-supported AMIs

- D. You can use time-based automatic scaling with any stack

Answer: A

Explanation:

The AWS documentation mentions the following about OpsWorks stacks:

• A stack's instances can run either Linux or Windows.

A stack can have different Linux versions or distributions on different instances, but you cannot mix Linux and Windows instances.

• You can use custom AMIs (Amazon Machine Images), but they must be based on one of the AWS OpsWorks Stacks-supported AMIs.

• You can start and stop instances manually or have AWS OpsWorks Stacks automatically scale the number of instances. You can use time-based automatic scaling with any stack; Linux stacks also can use load-based scaling.

• In addition to using AWS OpsWorks Stacks to create Amazon EC2 instances, you can also register instances with a Linux stack that were created outside of AWS OpsWorks Stacks.

For more information on OpsWorks stacks, please visit the below link: http://docs.aws.amazon.com/opsworks/latest/userguide/workinginstances-os.html

NEW QUESTION 23

......
