All About Virtual DOP-C01 Training

It is faster and easier to pass the Amazon-Web-Services DOP-C01 exam by using 100% correct Amazon-Web-Services AWS Certified DevOps Engineer - Professional questions and answers. Get immediate access to the up-to-date DOP-C01 exam, find the same core-area DOP-C01 questions with professionally verified answers, and then pass your exam with a high score.

Amazon-Web-Services DOP-C01 Free Dumps Questions Online, Read and Test Now.

NEW QUESTION 1

The development team has developed a new feature that uses an AWS service and wants to test it from inside a staging VPC. How should you test this feature with the fastest turnaround time?

- A. Launch an Amazon Elastic Compute Cloud (EC2) instance in the staging VPC in response to a development request, and use configuration management to set up the application. Run any testing harnesses to verify application functionality and then use Amazon Simple Notification Service (SNS) to notify the development team of the results.

- B. Use an Amazon EC2 instance that frequently polls the version control system to detect the new feature, use AWS CloudFormation and Amazon EC2 user data to run any testing harnesses to verify application functionality and then use Amazon SNS to notify the development team of the results.

- C. Use an Elastic Beanstalk application that polls the version control system to detect the new feature, use AWS CloudFormation and Amazon EC2 user data to run any testing harnesses to verify application functionality and then use Amazon Kinesis to notify the development team of the results.

- D. Use AWS CloudFormation to launch an Amazon EC2 instance, use Amazon EC2 user data to run any testing harnesses to verify application functionality and then use Amazon Kinesis to notify the development team of the results.

Answer: A

Explanation:

Using Amazon Kinesis would only add setup time and is not the fastest way to notify the relevant team.

Since the test needs to be conducted in the staging VPC, it is best to launch the EC2 instance in the staging VPC.

For more information on the Simple Notification service, please visit the link:

• https://aws.amazon.com/sns/

NEW QUESTION 2

Your company needs to set up instances running as part of an Auto Scaling group. Part of the requirement is to use lifecycle hooks to install custom software and perform the necessary configuration on the instances. This setup might take an hour, or it might finish before the hour is up. How should you set up lifecycle hooks for the Auto Scaling group? Choose the 2 ideal actions you would include as part of the lifecycle hook.

- A. Configure the lifecycle hook to record heartbeat. If the hour is up, restart the timeout period.

- B. Configure the lifecycle hook to record heartbeat. If the hour is up, choose to terminate the current instance and start a new one.

- C. If the software installation and configuration is complete, then restart the time period.

- D. If the software installation and configuration is complete, then send a signal to complete the launch of the instance.

Answer: AD

Explanation:

The AWS Documentation provides the following information on lifecycle hooks:

By default, the instance remains in a wait state for one hour, and then Auto Scaling continues the launch or terminate process (Pending: Proceed or Terminating: Proceed). If you need more time, you can restart the timeout period by recording a heartbeat. If you finish before the timeout period ends, you can complete the lifecycle action, which continues the launch or termination process.
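Both recommended actions can be seen together in a small Python sketch. It assumes a boto3-style Auto Scaling client is passed in (any object with `record_lifecycle_action_heartbeat` and `complete_lifecycle_action` works), and the hook name, group name and setup steps are hypothetical. Heartbeats are only sent between steps, so each individual step must finish within the timeout.

```python
import time
from typing import Callable, Iterable


def run_setup_with_heartbeats(
    autoscaling,
    hook_name: str,
    group_name: str,
    instance_id: str,
    steps: Iterable[Callable[[], None]],
    heartbeat_every_s: float = 1800.0,
) -> None:
    """Run setup steps while a launch lifecycle hook holds the instance in Pending:Wait.

    `autoscaling` is a boto3 Auto Scaling client (or any object with the same two methods).
    """
    last_beat = time.monotonic()
    try:
        for step in steps:
            step()
            # Between steps, record a heartbeat if enough time has passed;
            # each heartbeat restarts the hook's timeout period.
            if time.monotonic() - last_beat >= heartbeat_every_s:
                autoscaling.record_lifecycle_action_heartbeat(
                    LifecycleHookName=hook_name,
                    AutoScalingGroupName=group_name,
                    InstanceId=instance_id,
                )
                last_beat = time.monotonic()
    except Exception:
        # A step failed: abandon the launch instead of waiting for the timeout.
        autoscaling.complete_lifecycle_action(
            LifecycleHookName=hook_name,
            AutoScalingGroupName=group_name,
            LifecycleActionResult="ABANDON",
            InstanceId=instance_id,
        )
        raise
    # All steps finished (possibly well before the hour): let the launch continue now.
    autoscaling.complete_lifecycle_action(
        LifecycleHookName=hook_name,
        AutoScalingGroupName=group_name,
        LifecycleActionResult="CONTINUE",
        InstanceId=instance_id,
    )
```

Passing the client in, rather than creating it inside the function, keeps the sketch testable without AWS credentials.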

For more information on AWS Lifecycle hooks, please visit the below URL:

• http://docs.aws.amazon.com/autoscaling/latest/userguide/lifecycle-hooks.html

NEW QUESTION 3

Which of the following commands for the Elastic Beanstalk CLI can be used to create the current application in the specified environment?

- A. eb create

- B. eb start

- C. enenv

- D. enapp

Answer: A

Explanation:

Differences from Version 3 of EB CLI

EB is a command line interface (CLI) tool for Elastic Beanstalk that you can use to deploy applications quickly and more easily. The latest version of eb was introduced by Elastic Beanstalk in EB CLI 3. Although Elastic Beanstalk still supports EB CLI 2.6 for customers who previously installed and continue to use it, you should migrate to the latest version of EB CLI 3, as it can manage environments that you launched using EB CLI 2.6 or earlier versions of EB CLI. EB CLI automatically retrieves settings from an environment created using eb if the environment is running. Note that EB CLI 3 does not store option settings locally, as in earlier versions.

EB CLI introduces the commands eb create, eb deploy, eb open, eb console, eb scale, eb setenv, eb config, eb terminate, eb clone, eb list, eb use, eb printenv, and eb ssh. In EB CLI 3.1 or later, you can also use the eb swap command. In EB CLI 3.2 only, you can use the eb abort, eb platform, and eb upgrade commands. In addition to these new commands, EB CLI 3 commands differ from EB CLI 2.6 commands in several cases:

1. eb init - Use eb init to create an .elasticbeanstalk directory in an existing project directory and create a new Elastic Beanstalk application for the project. Unlike with previous versions, EB CLI 3 and later versions do not prompt you to create an environment.

2. eb start - EB CLI 3 does not include the command eb start. Use eb create to create an environment.

3. eb stop - EB CLI 3 does not include the command eb stop. Use eb terminate to completely terminate an environment and clean up.

4. eb push and git aws.push - EB CLI 3 does not include the commands eb push or git aws.push. Use eb deploy to update your application code.

5. eb update - EB CLI 3 does not include the command eb update. Use eb config to update an environment.

6. eb branch - EB CLI 3 does not include the command eb branch.

For more information about using EB CLI 3 commands to create and manage an application, see EB CLI Command Reference. For a command reference for EB CLI 2.6, see EB CLI 2 Commands. For a walkthrough of how to deploy a sample application using EB CLI 3, see Managing Elastic Beanstalk environments with the EB CLI. For a walkthrough of how to deploy a sample application using eb 2.6, see Getting Started with Eb. For a walkthrough of how to use eb 2.6 to map a Git branch to a specific environment, see Deploying a Git Branch to a Specific Environment. https://docs.aws.amazon.com/elasticbeanstalk/latest/dg/eb-cli.html#eb-cli2-differences

Note: EB CLI 2.6 has been deprecated and replaced by EB CLI 3: https://docs.aws.amazon.com/elasticbeanstalk/latest/dg/eb-cli3.html. We will replace this question soon.

NEW QUESTION 4

You have an Autoscaling Group which is launching a set of t2.small instances. You now need to replace those instances with a larger instance type. How would you go about making this change in an ideal manner?

- A. Change the instance type in the current launch configuration to the new instance type.

- B. Create another Auto Scaling group and attach the new instance type.

- C. Create a new launch configuration with the new instance type and update your Auto Scaling group.

- D. Change the instance type of the underlying EC2 instance directly.

Answer: C

Explanation:

The AWS Documentation mentions

A launch configuration is a template that an Auto Scaling group uses to launch EC2 instances. When you create a launch configuration, you specify information for the instances such as the ID of the Amazon Machine Image (AMI), the instance type, a key pair, one or more security groups, and a block device mapping. If you've launched an EC2 instance before, you specified the same information in order to launch the instance. When you create an Auto Scaling group, you must specify a launch configuration. You can specify your launch configuration with multiple Auto Scaling groups.

However, you can only specify one launch configuration for an Auto Scaling group at a time, and you can't modify a launch configuration after you've created it.

Therefore, if you want to change the launch configuration for your Auto Scaling group, you must create a launch configuration and then update your Auto Scaling group with the new launch configuration.
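The create-then-update procedure can be sketched in Python as follows. This assumes a boto3-style Auto Scaling client is passed in and that `old_config` is one entry from a `describe_launch_configurations` response; the configuration and group names are hypothetical, and only a few optional settings are copied over.

```python
def switch_instance_type(autoscaling, group_name: str, old_config: dict,
                         new_config_name: str, new_instance_type: str) -> dict:
    """Create a new launch configuration (launch configurations are immutable) that copies
    the old one except for the instance type, then point the Auto Scaling group at it.

    `old_config` is one entry of DescribeLaunchConfigurations' "LaunchConfigurations" list.
    """
    params = {
        "LaunchConfigurationName": new_config_name,
        "ImageId": old_config["ImageId"],
        "InstanceType": new_instance_type,
    }
    # Carry over a few optional settings if the old configuration had them.
    for key in ("KeyName", "SecurityGroups", "IamInstanceProfile"):
        if old_config.get(key):
            params[key] = old_config[key]
    autoscaling.create_launch_configuration(**params)
    autoscaling.update_auto_scaling_group(
        AutoScalingGroupName=group_name,
        LaunchConfigurationName=new_config_name,
    )
    return params
```

Instances that are already running keep the old type; only instances launched after the update use the new launch configuration, so the t2.small instances still have to be cycled out (for example, by terminating them gradually and letting the group replace them).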

For more information on launch configurations please see the below link:

• http://docs.aws.amazon.com/autoscaling/latest/userguide/LaunchConfiguration.html

NEW QUESTION 5

Your company wants to understand where cost is coming from in the company's production AWS account. There are a number of applications and services running at any given time. Without spending too much initial development time, how can you best give the business a good understanding of which applications cost the most per month to operate?

- A. Create an automation script which periodically creates AWS Support tickets requesting detailed intra-month information about your bill.

- B. Use custom CloudWatch Metrics in your system, and put a metric data point whenever cost is incurred.

- C. Use AWS Cost Allocation Tagging for all resources which support it. Use the Cost Explorer to analyze costs throughout the month.

- D. Use the AWS Price API and constantly running resource inventory scripts to calculate total price based on multiplication of consumed resources over time.

Answer: C

Explanation:

A tag is a label that you or AWS assigns to an AWS resource. Each tag consists of a key and a value. A key can have more than one value. You can use tags to organize your resources, and cost allocation tags to track your AWS costs on a detailed level. After you activate cost allocation tags, AWS uses the cost allocation tags to organize your resource costs on your cost allocation report, to make it easier for you to categorize and track your AWS costs. AWS provides two types of cost allocation tags, an AWS-generated tag and user-defined tags. AWS defines, creates, and applies the AWS-generated tag for you, and you define, create, and apply user-defined tags. You must activate both types of tags separately before they can appear in Cost Explorer or on a cost allocation report.
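Once a tag is activated, Cost Explorer can group spend by it. The Python sketch below only builds the arguments for a `get_cost_and_usage` call on a boto3 `ce` client and ranks the groups in a response; the tag key `application` is a hypothetical example, and the `key$value` group-key format reflects our understanding of how Cost Explorer labels tag groups.

```python
from __future__ import annotations

from datetime import date


def monthly_cost_request(tag_key: str, start: date, end: date) -> dict:
    """Arguments for Cost Explorer's GetCostAndUsage, grouped by one cost allocation tag."""
    return {
        "TimePeriod": {"Start": start.isoformat(), "End": end.isoformat()},
        "Granularity": "MONTHLY",
        "Metrics": ["UnblendedCost"],
        "GroupBy": [{"Type": "TAG", "Key": tag_key}],
    }


def rank_by_cost(response: dict) -> list[tuple[str, float]]:
    """Sum cost per tag value across the returned periods, most expensive first."""
    totals: dict[str, float] = {}
    for period in response["ResultsByTime"]:
        for group in period["Groups"]:
            # Tag groups come back as "key$value"; an empty value means the resource was untagged.
            label = group["Keys"][0].split("$", 1)[-1] or "(untagged)"
            amount = float(group["Metrics"]["UnblendedCost"]["Amount"])
            totals[label] = totals.get(label, 0.0) + amount
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)
```

In use, the request would be passed as `ce.get_cost_and_usage(**monthly_cost_request("application", start, end))` and the result fed to `rank_by_cost`. An "(untagged)" entry that stays large is a sign that tagging coverage needs work.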

For more information on Cost Allocation tags, please visit the below URL: http://docs.aws.amazon.com/awsaccountbilling/latest/aboutv2/cost-alloc-tags.html

NEW QUESTION 6

You have an application which consists of EC2 instances in an Auto Scaling group. Between a particular time frame every day, there is an increase in traffic to your website. Hence users are complaining of a poor response time on the application. You have configured your Auto Scaling group to deploy one new EC2 instance when CPU utilization is greater than 60% for 2 consecutive periods of 5 minutes. What is the least cost-effective way to resolve this problem?

- A. Decrease the consecutive number of collection periods

- B. Increase the minimum number of instances in the Auto Scaling group

- C. Decrease the collection period to ten minutes

- D. Decrease the threshold CPU utilization percentage at which to deploy a new instance

Answer: B

Explanation:

If you increase the minimum number of instances, then they will be running even though the load is not high on the website. Hence you are incurring cost even though there is no need.

All of the remaining options are possible options which can be used to increase the number of instances on a high load.

For more information on On-demand scaling, please refer to the below link: http://docs.aws.amazon.com/autoscaling/latest/userguide/as-scale-based-on-demand.html

Note: The tricky part is that the question asks for the "least cost-effective way". You may have the design consideration right, but be careful about how the question is phrased.

NEW QUESTION 7

You have the following application to be set up in AWS:

1) A web tier hosted on EC2 Instances

2) Session data to be written to DynamoDB

3) Log files to be written to Microsoft SQL Server

How can you allow an application to write data to a DynamoDB table?

- A. Add an IAM user to a running EC2 instance.

- B. Add an IAM user that allows write access to the DynamoDB table.

- C. Create an IAM role that allows read access to the DynamoDB table.

- D. Create an IAM role that allows write access to the DynamoDB table.

Answer: D

Explanation:

IAM roles are designed so that your applications can securely make API requests from your instances, without requiring you to manage the security credentials that the applications use. Instead of creating and distributing your AWS credentials, you can delegate permission to make API requests using IAM roles. For more information on IAM roles please refer to the below link:

http://docs.aws.amazon.com/AWSEC2/latest/UserGuide/iam-roles-for-amazon-ec2.html
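For illustration, the two policy documents behind such a role can be built in Python as plain dictionaries: a trust policy that lets EC2 assume the role, and a permissions policy that grants write actions on one table. The table ARN and the exact action list are assumptions for this example; the role is attached to the instance through an instance profile, and the AWS SDKs on the instance then pick up temporary credentials automatically.

```python
import json


def ec2_trust_policy() -> dict:
    """Trust policy that lets EC2 instances assume the role (via an instance profile)."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Service": "ec2.amazonaws.com"},
            "Action": "sts:AssumeRole",
        }],
    }


def dynamodb_write_policy(table_arn: str) -> dict:
    """Permissions policy granting write access to a single DynamoDB table."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Action": [
                "dynamodb:PutItem",
                "dynamodb:UpdateItem",
                "dynamodb:DeleteItem",
                "dynamodb:BatchWriteItem",
            ],
            "Resource": table_arn,
        }],
    }


if __name__ == "__main__":
    # Hypothetical table ARN, for illustration only.
    arn = "arn:aws:dynamodb:us-east-1:123456789012:table/Sessions"
    print(json.dumps(dynamodb_write_policy(arn), indent=2))
```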

NEW QUESTION 8

Your current log analysis application takes more than four hours to generate a report of the top 10 users of your web application. You have been asked to implement a system that can report this information in real time, ensure that the report is always up to date, and handle increases in the number of requests to your web application. Choose the option that is cost-effective and can fulfill the requirements.

- A. Publish your data to CloudWatch Logs, and configure your application to autoscale to handle the load on demand.

- B. Publish your log data to an Amazon S3 bucket. Use AWS CloudFormation to create an Auto Scaling group to scale your post-processing application which is configured to pull down your log files stored in Amazon S3.

- C. Post your log data to an Amazon Kinesis data stream, and subscribe your log-processing application so that it is configured to process your logging data.

- D. Configure an Auto Scaling group to increase the size of your Amazon EMR cluster.

Answer: C

Explanation:

The AWS Documentation mentions the below:

Amazon Kinesis makes it easy to collect, process, and analyze real-time, streaming data so you can get timely insights and react quickly to new information. Amazon Kinesis offers key capabilities to cost-effectively process streaming data at any scale, along with the flexibility to choose the tools that best suit the requirements of your application. With Amazon Kinesis, you can ingest real-time data such as application logs, website clickstreams, IoT telemetry data, and more into your databases, data lakes and data warehouses, or build your own real-time applications using this data.

Amazon Kinesis enables you to process and analyze data as it arrives and respond in real time instead of having to wait until all your data is collected before the processing can begin.
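The "always up to date" top-10 report comes from updating counts as each batch of records arrives. The Python sketch below shows only that aggregation step; it assumes each record looks like an entry of the Kinesis `GetRecords` response's `Records` list and, hypothetically, that `Data` holds a JSON log line with a `user` field. A real consumer would typically run under the Kinesis Client Library or a Lambda trigger.

```python
import json
from collections import Counter


class TopUsers:
    """Keeps running request counts per user from Kinesis records (sketch)."""

    def __init__(self) -> None:
        self.counts: Counter = Counter()

    def process_records(self, records: list) -> None:
        # Each record is shaped like an entry of GetRecords' "Records" list;
        # we assume (hypothetically) that Data is a JSON log line with a "user" field.
        for record in records:
            event = json.loads(record["Data"])
            self.counts[event["user"]] += 1

    def top(self, n: int = 10) -> list:
        """Current top-n users as (user, request_count) pairs."""
        return self.counts.most_common(n)
```

Because the counts live in memory, a production version would also need to persist them (or shard them by partition key) so that the report survives consumer restarts.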

For more information on AWS Kinesis please see the below link:

• https://aws.amazon.com/kinesis/

NEW QUESTION 9

When an Auto Scaling group is running in Amazon Elastic Compute Cloud (EC2), your application rapidly scales up and down in response to load within a 10-minute window; however, after the load peaks, you begin to see problems in your configuration management system where previously terminated Amazon EC2 resources are still showing as active. What would be a reliable and efficient way to handle the cleanup of Amazon EC2 resources within your configuration management system? Choose two answers from the options given below

- A. Write a script that is run by a daily cron job on an Amazon EC2 instance and that executes API Describe calls of the EC2 Auto Scaling group and removes terminated instances from the configuration management system.

- B. Configure an Amazon Simple Queue Service (SQS) queue for Auto Scaling actions that has a script that listens for new messages and removes terminated instances from the configuration management system.

- C. Use your existing configuration management system to control the launching and bootstrapping of instances to reduce the number of moving parts in the automation.

- D. Write a small script that is run during Amazon EC2 instance shutdown to de-register the resource from the configuration management system.

Answer: AD

Explanation:

There is a rich set of CLI commands available for EC2 instances. The CLI reference is at the following link:

• http://docs.aws.amazon.com/cli/latest/reference/ec2/

You can then use the describe-instances command to describe the EC2 instances.

If you specify one or more instance IDs, Amazon EC2 returns information for those instances. If you do not specify instance IDs, Amazon EC2 returns information for all relevant instances. If you specify an instance ID that is not valid, an error is returned. If you specify an instance that you do not own, it is not included in the returned results.

• http://docs.aws.amazon.com/cli/latest/reference/ec2/describe-instances.html

You can use the output of describe-instances to find the instances that need to be removed from the configuration management system.
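The daily cron job from option A boils down to a set difference. The Python sketch below assumes a boto3-style EC2 client is passed in and, hypothetically, that the configuration management system keys its nodes by instance ID; it pages through `describe_instances` with a state filter and returns the registered IDs that are no longer pending or running.

```python
ACTIVE_STATES = ["pending", "running"]


def active_instance_ids(ec2) -> set:
    """All instance IDs currently pending or running, following NextToken pagination."""
    ids, token = set(), None
    while True:
        kwargs = {"Filters": [{"Name": "instance-state-name", "Values": ACTIVE_STATES}]}
        if token:
            kwargs["NextToken"] = token
        page = ec2.describe_instances(**kwargs)
        for reservation in page["Reservations"]:
            for instance in reservation["Instances"]:
                ids.add(instance["InstanceId"])
        token = page.get("NextToken")
        if not token:
            return ids


def stale_nodes(ec2, registered_ids) -> set:
    """Registered nodes that EC2 no longer reports as active (stopped ones count as stale too)."""
    return set(registered_ids) - active_instance_ids(ec2)
```

The cron job would then deregister each returned ID through the configuration management system's own API, which is not shown here.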

NEW QUESTION 10

Your finance supervisor has set a budget of 2000 USD for the resources in AWS. Which of the following is the simplest way to ensure that you know when this threshold is being reached?

- A. Use CloudWatch Events to notify you when you reach the threshold value

- B. Use a CloudWatch billing alarm to notify you when you reach the threshold value

- C. Use CloudWatch Logs to notify you when you reach the threshold value

- D. Use SQS queues to notify you when you reach the threshold value

Answer: B

Explanation:

The AWS documentation mentions

You can monitor your AWS costs by using CloudWatch. With CloudWatch, you can create billing alerts that notify you when your usage of your services exceeds thresholds that you define. You specify these threshold amounts when you create the billing alerts. When your usage exceeds these amounts, AWS sends you an email notification. You can also sign up to receive notifications when AWS prices change. For more information on billing alarms, please refer to the below URL:

• http://docs.aws.amazon.com/awsaccountbilling/latest/aboutv2/monitor-charges.html
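A minimal Python sketch of such a billing alarm is shown below. It assumes a boto3-style CloudWatch client created in us-east-1 (where the billing metrics are published), that billing alerts have already been enabled in the account's billing preferences, and that the SNS topic ARN passed in is a hypothetical topic subscribed to the supervisor's email address.

```python
def create_billing_alarm(cloudwatch, topic_arn: str, threshold_usd: float = 2000.0) -> dict:
    """Create a CloudWatch alarm on the AWS/Billing EstimatedCharges metric."""
    params = {
        "AlarmName": f"billing-over-{int(threshold_usd)}-usd",
        "Namespace": "AWS/Billing",
        "MetricName": "EstimatedCharges",
        "Dimensions": [{"Name": "Currency", "Value": "USD"}],
        "Statistic": "Maximum",
        "Period": 21600,  # 6 hours; estimated charges are only updated a few times a day
        "EvaluationPeriods": 1,
        "Threshold": threshold_usd,
        "ComparisonOperator": "GreaterThanOrEqualToThreshold",
        "AlarmActions": [topic_arn],  # SNS topic that sends the email notification
    }
    cloudwatch.put_metric_alarm(**params)
    return params
```

Returning the parameters makes it easy to log or review exactly what was submitted.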

NEW QUESTION 11

Your company needs to automate 3 layers of a large cloud deployment. You want to be able to track this deployment's evolution as it changes over time, and carefully control any alterations. What is a good way to automate a stack to meet these requirements?

- A. Use OpsWorks Stacks with three layers to model the layering in your stack.

- B. Use CloudFormation Nested Stack Templates, with three child stacks to represent the three logical layers of your cloud.

- C. Use AWS Config to declare a configuration set that AWS should roll out to your cloud.

- D. Use Elastic Beanstalk Linked Applications, passing the important DNS entries between layers using the metadata interface.

Answer: B

Explanation:

As your infrastructure grows, common patterns can emerge in which you declare the same components in each of your templates. You can separate out these common components and create dedicated templates for them. That way, you can mix and match different templates but use nested stacks to create a single, unified stack. Nested stacks are stacks that create other stacks. To create nested stacks, use the AWS::CloudFormation::Stack resource in your template to reference other templates.
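As an illustration, a parent template with one nested stack per layer can be generated in Python as a plain dictionary. The S3 URL, the layer names and the `VpcId` output/parameter are hypothetical; the sketch also assumes the network template declares a `VpcId` output and the app template accepts a `VpcId` parameter. Keeping the parent and child templates in version control is what lets you track the deployment's evolution.

```python
import json


def parent_template(templates_url: str) -> dict:
    """Parent stack that creates one AWS::CloudFormation::Stack resource per layer.

    `templates_url` is a hypothetical S3 prefix holding network.yaml, app.yaml and data.yaml.
    """
    resources = {}
    for layer in ("Network", "App", "Data"):
        resources[f"{layer}Stack"] = {
            "Type": "AWS::CloudFormation::Stack",
            "Properties": {"TemplateURL": f"{templates_url}/{layer.lower()}.yaml"},
        }
    # Pass an output of the network layer into the app layer as a parameter;
    # Fn::GetAtt on "Outputs.<name>" also makes AppStack depend on NetworkStack.
    resources["AppStack"]["Properties"]["Parameters"] = {
        "VpcId": {"Fn::GetAtt": ["NetworkStack", "Outputs.VpcId"]}
    }
    return {"AWSTemplateFormatVersion": "2010-09-09", "Resources": resources}


if __name__ == "__main__":
    print(json.dumps(parent_template("https://example-bucket.s3.amazonaws.com/templates"), indent=2))
```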

For more information on nested stacks, please visit the below URL:

• http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/best-practices.html#nested

Note:

The question asks how you can automate a stack over time, as changes are required, without recreating the stack.

The purpose of nested stacks is to reuse common template patterns.

For example, assume that you have a load balancer configuration that you use for most of your stacks. Instead of copying and pasting the same configurations into your templates, you can create a dedicated template for the load balancer. Then, you just use the AWS::CloudFormation::Stack resource to reference that template from within other templates.

Another example is a launch configuration with specific settings where you need to change the instance size only in the production environment and leave it as it is in the development environment.

AWS also recommends that updates to nested stacks are run from the parent stack.

When you apply template changes to update a top-level stack, AWS CloudFormation updates the top-level stack and initiates an update to its nested stacks. AWS CloudFormation updates the resources of modified nested stacks, but does not update the resources of unmodified nested stacks.

NEW QUESTION 12

You have an Auto Scaling group with an Elastic Load Balancer. You decide to suspend the Auto Scaling AddToLoadBalancer process for a short period of time. What will happen to the instances launched during the suspension period?

- A. The instances will be registered with ELB once the process has resumed

- B. Auto Scaling will not launch the instances during this period because of the suspension

- C. The instances will not be registered with ELB. You must manually register when the process is resumed

- D. It is not possible to suspend the AddToLoadBalancer process

Answer: C

Explanation:

If you suspend AddToLoadBalancer, Auto Scaling launches the instances but does not add them to the load balancer or target group. If you resume the AddToLoadBalancer process, Auto Scaling resumes adding instances to the load balancer or target group when they are launched. However, Auto Scaling does not add the instances that were launched while this process was suspended. You must register those instances manually.

For more information on the suspension and resumption process, please visit the below URL: http://docs.aws.amazon.com/autoscaling/latest/userguide/as-suspend-resume-processes.html
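The manual registration step can be sketched in Python, assuming boto3-style `autoscaling` and `elbv2` clients and an Application or Network Load Balancer target group (for a Classic Load Balancer the `elb` client's `register_instances_with_load_balancer` call would be used instead). The group name and target group ARN are hypothetical; suspending the process beforehand would be `suspend_processes` with the same `ScalingProcesses` list.

```python
def resume_and_register(autoscaling, elbv2, group_name: str, target_group_arn: str) -> list:
    """Resume AddToLoadBalancer, then register the in-service instances that Auto Scaling
    launched while the process was suspended (it will not register them by itself)."""
    autoscaling.resume_processes(
        AutoScalingGroupName=group_name,
        ScalingProcesses=["AddToLoadBalancer"],
    )
    group = autoscaling.describe_auto_scaling_groups(
        AutoScalingGroupNames=[group_name]
    )["AutoScalingGroups"][0]
    # Instances already known to the target group, whatever their health state.
    health = elbv2.describe_target_health(TargetGroupArn=target_group_arn)
    registered = {d["Target"]["Id"] for d in health["TargetHealthDescriptions"]}
    missing = sorted(
        inst["InstanceId"]
        for inst in group["Instances"]
        if inst["LifecycleState"] == "InService" and inst["InstanceId"] not in registered
    )
    if missing:
        elbv2.register_targets(
            TargetGroupArn=target_group_arn,
            Targets=[{"Id": instance_id} for instance_id in missing],
        )
    return missing
```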

NEW QUESTION 13

You have a CloudFormation template defined in AWS. You need to change the current alarm threshold defined in a CloudWatch alarm. How can you achieve this?

- A. Currently, there is no option to change what is already defined in CloudFormation templates.

- B. Update the template and then update the stack with the new template. Automatically all resources will be changed in the stack.

- C. Update the template and then update the stack with the new template. Only those resources that need to be changed will be changed. All other resources which do not need to be changed will remain as they are.

- D. Delete the current CloudFormation template. Create a new one which will update the current resources.

Answer: C

Explanation:

Option A is incorrect because Cloudformation templates have the option to update resources.

Option B is incorrect because only those resources that need to be changed as part of the stack update are actually updated.

Option D is incorrect because deleting the stack is not the ideal option when you already have a change option available.

When you need to make changes to a stack's settings or change its resources, you update the stack instead of deleting it and creating a new stack. For example, if you have a stack with an EC2 instance, you can update the stack to change the instance's AMI ID.

When you update a stack, you submit changes, such as new input parameter values or an updated template. AWS CloudFormation compares the changes you submit with the current state of your stack and updates only the changed resources.
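Option C can be sketched in Python: edit the alarm's `Threshold` in the template and submit the whole template as a stack update. This assumes a boto3-style CloudFormation client and a template held as a dictionary; the stack name and the logical ID `HighCpu` used in examples are hypothetical. Calling `create_change_set` first would let you preview which resources CloudFormation plans to touch.

```python
import json


def update_alarm_threshold(cfn, stack_name: str, template: dict,
                           alarm_logical_id: str, new_threshold: float) -> str:
    """Change one alarm's Threshold (the template dict is edited in place)
    and submit the template as a stack update.

    CloudFormation compares the new template with the running stack and updates only
    the resources that changed (here, just the alarm).
    """
    alarm = template["Resources"][alarm_logical_id]
    if alarm.get("Type") != "AWS::CloudWatch::Alarm":
        raise ValueError(f"{alarm_logical_id} is not an AWS::CloudWatch::Alarm")
    alarm["Properties"]["Threshold"] = new_threshold
    body = json.dumps(template)
    cfn.update_stack(StackName=stack_name, TemplateBody=body)
    return body
```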

For more information on stack updates please refer to the below link:

• http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/using-cfn-updating-stacks.html

NEW QUESTION 14

Which of the following are advantages of using AWS CodeCommit over hosting your own source code repository system?

- A. Reduction in hardware maintenance costs

- B. Reduction in fees paid over licensing

- C. No specific restriction on files and branches

- D. All of the above

Answer: D

Explanation:

The AWS Documentation mentions the following on CodeCommit

Self-hosted version control systems have many potential drawbacks, including:

• Expensive per-developer licensing fees.

• High hardware maintenance costs.

• High support staffing costs.

• Limits on the amount and types of files that can be stored and managed.

• Limits on the number of branches, the amount of version history, and other related metadata that can be stored.

For more information on CodeCommit please refer to the below link:

• http://docs.aws.amazon.com/codecommit/latest/userguide/welcome.html

NEW QUESTION 15

Which of the following environment types are available in Elastic Beanstalk? Choose 2 answers from the options given below.

- A. Single Instance

- B. Multi-Instance

- C. Load Balancing Autoscaling

- D. SQS, Autoscaling

Answer: AC

Explanation:

The AWS Documentation mentions

In Elastic Beanstalk, you can create a load-balancing, autoscaling environment or a single-instance environment. The type of environment that you require depends on the application that you deploy.

When you open the Configuration page for your environment, you can see the environment type there.

NEW QUESTION 16

When storing sensitive data in the cloud, which of the options below should be carried out on AWS? Choose 3 answers from the options given below.

- A. With AWS you do not need to worry about encryption

- B. Enable EBS Encryption

- C. Encrypt the file system on an EBS volume using Linux tools

- D. Enable S3 Encryption

Answer: BCD

Explanation:

Amazon EBS encryption offers you a simple encryption solution for your EBS volumes without the need for you to build, maintain, and secure your own key management infrastructure. When you create an encrypted EBS volume and attach it to a supported instance type, the following types of data are encrypted:

• Data at rest inside the volume

• All data moving between the volume and the instance

• All snapshots created from the volume

For more information on EBS encryption, please refer to the below link:

• http://docs.aws.amazon.com/AWSEC2/latest/UserGuide/EBSEncryption.html

Data protection refers to protecting data while in-transit (as it travels to and from Amazon S3) and at rest (while it is stored on disks in Amazon S3 data centers). You can protect data in transit by using SSL or by using client-side encryption. For more information on S3 encryption, please refer to the below link:

• http://docs.aws.amazon.com/AmazonS3/latest/dev/UsingEncryption.html
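Options B and D map to single API calls. The Python sketch below assumes boto3-style `ec2` and `s3` clients are passed in; the Availability Zone, size, volume type and bucket name are hypothetical choices. Option C (encrypting the file system with Linux tools) happens inside the operating system and is not shown.

```python
def create_encrypted_volume(ec2, availability_zone: str, size_gib: int) -> dict:
    """Create an EBS volume encrypted at rest; without KmsKeyId, the account's
    default EBS KMS key is used."""
    return ec2.create_volume(
        AvailabilityZone=availability_zone,
        Size=size_gib,
        VolumeType="gp3",
        Encrypted=True,
    )


def enable_bucket_default_encryption(s3, bucket: str) -> None:
    """Make SSE-S3 (AES-256) the default encryption for new objects in a bucket."""
    s3.put_bucket_encryption(
        Bucket=bucket,
        ServerSideEncryptionConfiguration={
            "Rules": [
                {"ApplyServerSideEncryptionByDefault": {"SSEAlgorithm": "AES256"}}
            ]
        },
    )
```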

NEW QUESTION 17

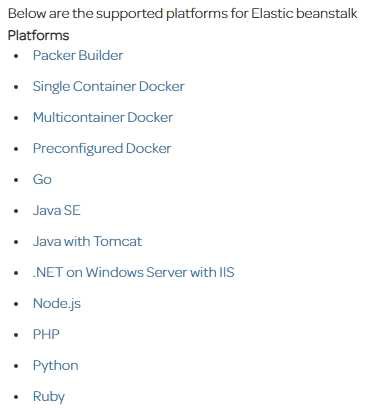

Which of the following is not a supported platform for the Elastic Beanstalk service?

- A. Java

- B. AngularJS

- C. PHP

- D. .Net

Answer: B

Explanation:

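AngularJS is a client-side JavaScript framework rather than an Elastic Beanstalk platform, whereas Java, PHP and .NET are all supported platforms. For more information on Elastic Beanstalk, please visit the below URL:

http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/concepts.platforms.html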

NEW QUESTION 18

You want to pass queue messages that are 1GB each. How should you achieve this?

- A. Use Kinesis as a buffer stream for message bodies. Store the checkpoint id for the placement in the Kinesis Stream in SQS.

- B. Use the Amazon SQS Extended Client Library for Java and Amazon S3 as a storage mechanism for message bodies.

- C. Use SQS's support for message partitioning and multi-part uploads on Amazon S3.

- D. Use AWS EFS as a shared pool storage medium. Store filesystem pointers to the files on disk in the SQS message bodies.

Answer: B

Explanation:

You can manage Amazon SQS messages with Amazon S3. This is especially useful for storing and consuming messages with a message size of up to 2 GB. To manage Amazon SQS messages with Amazon S3, use the Amazon SQS Extended Client Library for Java. Specifically, you use this library to:

• Specify whether messages are always stored in Amazon S3 or only when a message's size exceeds 256 KB.

• Send a message that references a single message object stored in an Amazon S3 bucket.

• Get the corresponding message object from an Amazon S3 bucket.

• Delete the corresponding message object from an Amazon S3 bucket.
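The idea behind the library, storing the body in S3 and sending only a reference through SQS, can be sketched in Python. This is not the Extended Client Library (which is Java) and its pointer format is not compatible with it: the JSON pointer, the `PayloadLocation` attribute and the key prefix are hypothetical choices for this sketch, and boto3-style `sqs` and `s3` clients are assumed. A real sender for 1 GB payloads would also stream from disk rather than hold the body in memory.

```python
import json
import uuid

SQS_INLINE_LIMIT = 256 * 1024  # bytes; larger bodies go to S3 (attributes also count in practice)


def send_large_message(sqs, s3, queue_url: str, bucket: str, body: bytes) -> dict:
    """Send small bodies inline; store large ones in S3 and send a pointer through SQS."""
    attributes = {}
    if len(body) <= SQS_INLINE_LIMIT:
        message = body.decode("utf-8")
    else:
        key = f"sqs-payloads/{uuid.uuid4()}"
        s3.put_object(Bucket=bucket, Key=key, Body=body)
        message = json.dumps({"s3Bucket": bucket, "s3Key": key})
        # Mark the message so consumers know to fetch the real body from S3.
        attributes["PayloadLocation"] = {"DataType": "String", "StringValue": "s3"}
    kwargs = {"QueueUrl": queue_url, "MessageBody": message}
    if attributes:
        kwargs["MessageAttributes"] = attributes
    return sqs.send_message(**kwargs)
```

The consumer does the reverse: if the attribute is present, it reads the object from S3, and it deletes the object after the message has been processed.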

For more information on processing large messages for SQS, please visit the below URL: http://docs.aws.amazon.com/AWSSimpleQueueService/latest/SQSDeveloperGuide/sqs-s3-messages.html

NEW QUESTION 19

You are creating a new API for video game scores. Reads are 100 times more common than writes, and the top 1% of scores are read 100 times more frequently than the rest of the scores. What's the best design for this system, using DynamoDB?

- A. DynamoDB table with 100x higher read than write throughput, with CloudFront caching.

- B. DynamoDB table with roughly equal read and write throughput, with CloudFront caching.

- C. DynamoDB table with 100x higher read than write throughput, with ElastiCache caching.

- D. DynamoDB table with roughly equal read and write throughput, with ElastiCache caching.

Answer: D

Explanation:

Because the 100x read ratio is mostly driven by a small subset of scores, with caching only a roughly equal number of reads to writes will miss the cache, since the supermajority of reads will hit the top 1% of scores. Knowing that we need to set read and write throughput roughly equal when using caching, we select Amazon ElastiCache, because CloudFront cannot directly cache DynamoDB queries, and ElastiCache is an excellent in-memory cache for database queries, rather than a distributed proxy cache for content delivery.
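The effect of caching on table reads can be shown with a small cache-aside sketch in Python. Here a plain dictionary stands in for an ElastiCache (Redis or Memcached) client, and the two callables stand in for DynamoDB GetItem and PutItem calls; all of these are assumptions for illustration. Only cache misses reach the table, which is why read throughput can be provisioned close to write throughput.

```python
class CachedScores:
    """Cache-aside reads with write-through updates (sketch)."""

    def __init__(self, cache: dict, load_from_table, save_to_table):
        self.cache = cache                      # stands in for an ElastiCache client
        self.load_from_table = load_from_table  # stands in for a DynamoDB GetItem call
        self.save_to_table = save_to_table      # stands in for a DynamoDB PutItem call
        self.hits = 0
        self.misses = 0

    def get(self, player_id: str):
        if player_id in self.cache:
            self.hits += 1
            return self.cache[player_id]
        # Miss: read from the table once, then serve later reads from the cache.
        self.misses += 1
        score = self.load_from_table(player_id)
        self.cache[player_id] = score
        return score

    def put(self, player_id: str, score) -> None:
        self.save_to_table(player_id, score)  # the write still goes to DynamoDB
        self.cache[player_id] = score         # keep the cached copy fresh (write-through)
```

For example, 100 reads of the same top score cost one table read and 99 cache hits.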

For more information on DynamoDB table guidelines please refer to the below link:

• http://docs.aws.amazon.com/amazondynamodb/latest/developerguide/GuidelinesForTables.html

NEW QUESTION 20

Which of the following deployment types are available in the CodeDeploy service? Choose 2 answers from the options given below.

- A. In-place deployment

- B. Rolling deployment

- C. Immutable deployment

- D. Blue/green deployment

Answer: AD

Explanation:

The following deployment types are available

1. In-place deployment: The application on each instance in the deployment group is stopped, the latest application revision is installed, and the new version of the application is started and validated.

2. Blue/green deployment: The instances in a deployment group (the original environment) are replaced by a different set of instances (the replacement environment).

For more information on CodeDeploy please refer to the below link:

• http://docs.aws.amazon.com/codedeploy/latest/userguide/primary-components.html

NEW QUESTION 21

You have an application hosted in AWS. You want to ensure that when certain thresholds are reached, a DevOps engineer is notified. Choose 3 answers from the options given below.

- A. Use CloudWatch Logs agent to send log data from the app to CloudWatch Logs from Amazon EC2 instances

- B. Pipe data from EC2 to the application logs using AWS Data Pipeline and CloudWatch

- C. Once a CloudWatch alarm is triggered, use SNS to notify the Senior DevOps Engineer.

- D. Set the threshold your application can tolerate in a CloudWatch Logs group and link a CloudWatch alarm on that threshold.

Answer: ACD

Explanation:

You can use CloudWatch Logs to monitor applications and systems using log data. For example, CloudWatch Logs can track the number of errors that occur in your application logs and send you a notification whenever the rate of errors exceeds a threshold you specify. CloudWatch Logs uses your log data for monitoring, so no code changes are required. For example, you can monitor application logs for specific literal terms (such as "NullReferenceException") or count the number of occurrences of a literal term at a particular position in log data (such as "404" status codes in an Apache access log). When the term you are searching for is found, CloudWatch Logs reports the data to a CloudWatch metric that you specify. For more information on CloudWatch Logs please refer to the below link:

http://docs.aws.amazon.com/AmazonCloudWatch/latest/logs/WhatIsCloudWatchLogs.html

Amazon CloudWatch uses Amazon SNS to send email. First, create and subscribe to an SNS topic. When you create a CloudWatch alarm, you can add this SNS topic to send an email notification when the alarm changes state.
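Options A, C and D fit together as one pipeline: logs reach CloudWatch Logs, a metric filter turns matching lines into a metric, and an alarm on that metric notifies an SNS topic. The Python sketch below covers the last two steps, assuming boto3-style `logs` and `cloudwatch` clients; the filter pattern, metric namespace, names and threshold are hypothetical, and the SNS topic is assumed to already have the engineer's email subscribed.

```python
def alarm_on_log_errors(logs, cloudwatch, log_group: str, topic_arn: str,
                        max_errors_per_5min: int = 10) -> None:
    """Turn ERROR lines in a log group into a metric, then alarm to SNS above a threshold."""
    namespace, metric = "MyApp", "ErrorCount"  # hypothetical metric namespace and name
    # 1) Metric filter: every log event containing the term ERROR adds 1 to the metric.
    logs.put_metric_filter(
        logGroupName=log_group,
        filterName="error-count",
        filterPattern="ERROR",
        metricTransformations=[{
            "metricName": metric,
            "metricNamespace": namespace,
            "metricValue": "1",
        }],
    )
    # 2) Alarm on the 5-minute sum; the SNS topic is the alarm action.
    cloudwatch.put_metric_alarm(
        AlarmName="myapp-error-count",
        Namespace=namespace,
        MetricName=metric,
        Statistic="Sum",
        Period=300,
        EvaluationPeriods=1,
        Threshold=float(max_errors_per_5min),
        ComparisonOperator="GreaterThanThreshold",
        TreatMissingData="notBreaching",  # no matching log events means no errors
        AlarmActions=[topic_arn],
    )
```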

For more information on Cloudwatch and SNS please refer to the below link: http://docs.aws.amazon.com/AmazonCloudWatch/latest/monitoring/US_SetupSNS.html

NEW QUESTION 22

There is a requirement to monitor API calls made against your AWS account by different users and entities. There needs to be a history of those calls, and that history is needed in bulk for later review. Which 2 services can be used in this scenario?

- A. AWS Config; AWS Inspector

- B. AWS CloudTrail; AWS Config

- C. AWS CloudTrail; CloudWatch Events

- D. AWS Config; AWS Lambda

Answer: C

Explanation:

You can use AWS CloudTrail to get a history of AWS API calls and related events for your account. This history includes calls made with the AWS Management Console, AWS Command Line Interface, AWS SDKs, and other AWS services. For more information on CloudTrail, please visit the below URL:

• http://docs.aws.amazon.com/awscloudtrail/latest/userguide/cloudtrail-user-guide.html

Amazon CloudWatch Events delivers a near real-time stream of system events that describe changes in Amazon Web Services (AWS) resources. Using simple rules that you can quickly set up, you can match events and route them to one or more target functions or streams. CloudWatch Events becomes aware of operational changes as they occur. CloudWatch Events responds to these operational changes and takes corrective action as necessary, by sending messages to respond to the environment, activating functions, making changes, and capturing state information. For more information on CloudWatch Events, please visit the below URL:

• http://docs.aws.amazon.com/AmazonCloudWatch/latest/events/WhatIsCloudWatchEvents.html
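The two services connect through an event pattern that matches API calls recorded by CloudTrail. The Python sketch below assumes a boto3-style CloudWatch Events client and that CloudTrail is logging in the account and Region, since the rule only sees API calls that CloudTrail records. The rule name, the `aws.ec2` source and the target ARN (for example, a Lambda function or Kinesis stream) are hypothetical.

```python
import json


def route_api_calls(events, rule_name: str, target_arn: str,
                    source: str = "aws.ec2") -> dict:
    """Create a CloudWatch Events rule that forwards API calls recorded by CloudTrail."""
    pattern = {
        "source": [source],
        "detail-type": ["AWS API Call via CloudTrail"],
    }
    events.put_rule(Name=rule_name, EventPattern=json.dumps(pattern), State="ENABLED")
    events.put_targets(
        Rule=rule_name,
        Targets=[{"Id": "api-call-target", "Arn": target_arn}],
    )
    return pattern
```

CloudTrail keeps the bulk history for later review, while the rule reacts to individual calls as they happen.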

NEW QUESTION 23

......

P.S. Easily pass the DOP-C01 exam with the 116 Q&As in the 2passeasy dumps (PDF version). Download the newest 2passeasy DOP-C01 dumps: https://www.2passeasy.com/dumps/DOP-C01/ (116 new questions)