Top Tips Of Improved DOP-C01 Testing Engine


Free demo questions for Amazon-Web-Services DOP-C01 Exam Dumps Below:

NEW QUESTION 1

You are creating an application which stores extremely sensitive financial information. All information in the system must be encrypted at rest and in transit. Which of these is a violation of this policy?

- A. ELB SSL termination.

- B. ELB Using Proxy Protocol v1.

- C. CloudFront Viewer Protocol Policy set to HTTPS redirection.

- D. Telling S3 to use AES256 on the server-side.

Answer: A

Explanation:

With SSL termination, the ELB decrypts traffic and forwards it to your servers over non-secure connections, so the servers never know whether users connected over a secure channel, and the data is not encrypted in transit behind the load balancer. If you are using Elastic Beanstalk to configure the ELB, the article below describes how to ensure end-to-end encryption: http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/configuring-https-endtoend.html

NEW QUESTION 2

When deploying applications to Elastic Beanstalk, which of the following statements about application deployment is false?

- A. The application can be bundled in a zip file

- B. Can include parent directories

- C. Should not exceed 512 MB in size

- D. Can be a war file which can be deployed to the application server

Answer: B

Explanation:

The AWS documentation states:

When you use the AWS Elastic Beanstalk console to deploy a new application or an application version, you'll need to upload a source bundle. Your source bundle must meet the following requirements:

- Consist of a single ZIP file or WAR file (you can include multiple WAR files inside your ZIP file)

- Not exceed 512 MB

- Not include a parent folder or top-level directory (subdirectories are fine)

For more information on deploying applications to Elastic Beanstalk, please see the below link: http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/applications-sourcebundle.html
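The bundle requirements above can be checked before uploading. The following is a minimal sketch, not an official tool: the function name `check_source_bundle` and the helper `make_zip` are hypothetical, and the parent-folder check is a heuristic (it flags a bundle when every entry sits under one shared top-level directory).

```python
import io
import zipfile

MAX_BUNDLE_BYTES = 512 * 1024 * 1024  # the 512 MB limit quoted above


def check_source_bundle(data: bytes) -> list:
    """Return a list of problems found in a ZIP source bundle (empty if none)."""
    problems = []
    if len(data) > MAX_BUNDLE_BYTES:
        problems.append("bundle exceeds 512 MB")
    with zipfile.ZipFile(io.BytesIO(data)) as bundle:
        names = [n for n in bundle.namelist() if n]
    # Heuristic: if every entry lives under one shared top-level directory,
    # the bundle was probably zipped together with its parent folder.
    top_levels = {n.split("/", 1)[0] for n in names}
    all_nested = all("/" in n for n in names)
    if names and all_nested and len(top_levels) == 1:
        problems.append("bundle has a parent folder: " + top_levels.pop())
    return problems


def make_zip(paths):
    """Hypothetical helper: build an in-memory ZIP containing the given paths."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as z:
        for p in paths:
            z.writestr(p, "x")
    return buf.getvalue()


good = check_source_bundle(make_zip(["app.py", "static/site.css"]))
bad = check_source_bundle(make_zip(["myapp/app.py", "myapp/static/site.css"]))
```

Here `good` is empty because files sit at the root of the archive, while `bad` reports the `myapp` parent folder, which is the case option B describes.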

NEW QUESTION 3

Your company is getting ready to do a major public announcement of a social media site on AWS. The website is running on EC2 instances deployed across multiple Availability Zones with a Multi-AZ RDS MySQL Extra Large DB Instance. The site performs a high number of small reads and writes per second and relies on an eventual consistency model. After comprehensive tests you discover that there is read contention on RDS MySQL. Which are the best approaches to meet these requirements? Choose 2 answers from the options below

- A. Deploy ElastiCache in-memory cache running in each availability zone

- B. Implement sharding to distribute load to multiple RDS MySQL instances

- C. Increase the RDS MySQL Instance size and Implement provisioned IOPS

- D. Add an RDS MySQL read replica in each availability zone

Answer: AD

Explanation:

Implement Read Replicas and ElastiCache

Amazon RDS Read Replicas provide enhanced performance and durability for database (DB) instances. This replication feature makes it easy to elastically scale out beyond the capacity constraints of a single DB Instance for read-heavy database workloads. You can create one or more replicas of a given source DB Instance and serve high-volume application read traffic from multiple copies of your data, thereby increasing aggregate read throughput.

For more information on Read Replicas, please visit the below link

• https://aws.amazon.com/rds/details/read-replicas/

Amazon ElastiCache is a web service that makes it easy to deploy, operate, and scale an in-memory data store or cache in the cloud. The service improves the performance of web applications by allowing you to retrieve information from fast, managed, in-memory data stores, instead of relying entirely on slower disk-based databases.

For more information on Amazon ElastiCache, please visit the below link

• https://aws.amazon.com/elasticache/
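To illustrate how a cache and a read replica work together to relieve read contention, here is a minimal cache-aside sketch. It is an illustration only: the class `CacheAsideReader` is hypothetical, a plain dict stands in for an ElastiCache client, and a dict lookup stands in for a query against a read replica.

```python
class CacheAsideReader:
    """Check the cache first; on a miss, read from a replica and cache the result."""

    def __init__(self, cache, read_from_replica):
        self.cache = cache                          # stand-in for an ElastiCache client
        self.read_from_replica = read_from_replica  # stand-in for a read-replica query
        self.db_reads = 0                           # counts reads that reached the database

    def get(self, key):
        if key in self.cache:
            return self.cache[key]
        self.db_reads += 1
        value = self.read_from_replica(key)
        self.cache[key] = value
        return value


profiles = {"u1": "Alice", "u2": "Bob"}  # hypothetical data
reader = CacheAsideReader(cache={}, read_from_replica=profiles.__getitem__)
first = reader.get("u1")   # miss: goes to the replica
second = reader.get("u1")  # hit: served from the cache
```

Only the first read reaches the database. Cached values can be stale, which is acceptable here because the question states that the site relies on an eventual consistency model.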

NEW QUESTION 4

Which 3 of the following can you achieve with the CloudWatch Logs service? Choose 3 options.

- A. Record API calls for your AWS account and deliver log files containing API calls to your Amazon S3 bucket

- B. Send the log data to AWS Lambda for custom processing or to load into other systems

- C. Stream the log data to Amazon Kinesis

- D. Stream the log data into Amazon Elasticsearch in near real-time with CloudWatch Logs subscriptions.

Answer: BCD

Explanation:

You can use Amazon CloudWatch Logs to monitor, store, and access your log files from Amazon Elastic Compute Cloud (Amazon EC2) instances, AWS CloudTrail, and other sources. You can then retrieve the associated log data from CloudWatch Logs.

For more information on CloudWatch Logs, please visit the below URL http://docs.aws.amazon.com/AmazonCloudWatch/latest/logs/WhatIsCloudWatchLogs.html

NEW QUESTION 5

Which of the following services can be used in conjunction with CloudWatch Logs? Choose the 3 most viable services from the options given below

- A. Amazon Kinesis

- B. Amazon S3

- C. Amazon SQS

- D. Amazon Lambda

Answer: ABD

Explanation:

The AWS documentation lists the following services that can be integrated with CloudWatch Logs:

1) Amazon Kinesis - Log data can be fed here for real-time analysis

2) Amazon S3 - You can use CloudWatch Logs to store your log data in highly durable storage such as S3.

3) AWS Lambda - Lambda functions can be designed to work with CloudWatch Logs

For more information on CloudWatch Logs, please refer to the below link: http://docs.aws.amazon.com/AmazonCloudWatch/latest/logs/WhatIsCloudWatchLogs.html

NEW QUESTION 6

You have created a DynamoDB table for an application that needs to support thousands of users. You need to ensure that each user can only access their own data in a particular table. Many users already have accounts with a third-party identity provider, such as Facebook, Google, or Login with Amazon. How would you implement this requirement?

Choose 2 answers from the options given below.

- A. Create an IAM User for all users so that they can access the application.

- B. Use Web identity federation and register your application with a third-party identity provider such as Google, Amazon, or Facebook.

- C. Create an IAM role which has specific access to the DynamoDB table.

- D. Use a third-party identity provider such as Google, Facebook or Amazon so users can become an AWS IAM User with access to the application.

Answer: BC

Explanation:

The AWS Documentation mentions the following

With web identity federation, you don't need to create custom sign-in code or manage your own user identities. Instead, users of your app can sign in using a well-known identity provider (IdP), such as Login with Amazon, Facebook, Google, or any other OpenID Connect (OIDC)-compatible IdP, receive an authentication token, and then exchange that token for temporary security credentials in AWS that map to an IAM role with permissions to use the resources in your AWS account. Using an IdP helps you keep your AWS account secure, because you don't have to embed and distribute long-term security credentials with your application.

For more information on Web Identity federation, please visit the below URL: http://docs.aws.amazon.com/IAM/latest/UserGuide/id_roles_providers_oidc.html
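The IAM role from option C needs a policy that limits each federated user to their own items. The sketch below builds such a policy as a Python dict, assuming the table's partition key holds the user ID issued by Login with Amazon. The function name, table ARN, and account ID are hypothetical; `dynamodb:LeadingKeys` and the `${www.amazon.com:user_id}` policy variable come from the DynamoDB fine-grained access control documentation.

```python
import json


def per_user_table_policy(table_arn: str, identity_variable: str) -> dict:
    """Build a policy that only allows access to items whose partition key
    equals the caller's federated user ID."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "dynamodb:GetItem",
                    "dynamodb:Query",
                    "dynamodb:PutItem",
                    "dynamodb:UpdateItem",
                    "dynamodb:DeleteItem",
                ],
                "Resource": table_arn,
                "Condition": {
                    "ForAllValues:StringEquals": {
                        # The variable is substituted by IAM at request time.
                        "dynamodb:LeadingKeys": ["${" + identity_variable + "}"]
                    }
                },
            }
        ],
    }


# Hypothetical table ARN and account ID, for illustration only.
policy = per_user_table_policy(
    "arn:aws:dynamodb:us-east-1:123456789012:table/UserData",
    "www.amazon.com:user_id",
)
print(json.dumps(policy, indent=2))
```

Because the condition is evaluated per request, one role serves every user, which is why creating an IAM user per person (options A and D) is unnecessary.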

NEW QUESTION 7

You are deciding on a deployment mechanism for your application. Which of the following deployment mechanisms provides the fastest rollback after failure?

- A. Rolling-Immutable

- B. Canary

- C. Rolling-Mutable

- D. Blue/Green

Answer: D

Explanation:

In Blue Green Deployments, you will always have the previous version of your application available.

So anytime there is an issue with a new deployment, you can just quickly switch back to the older version of your application.

For more information on Blue Green Deployments, please refer to the below link: https://docs.cloudfoundry.org/devguide/deploy-apps/blue-green.html
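The reason rollback is fast can be sketched in a few lines: both environments stay running, and only a pointer to the live one changes. This is a toy model, with the class `BlueGreenRouter` and the version labels invented for illustration; in practice the switch is typically a DNS or load balancer change.

```python
class BlueGreenRouter:
    """Keeps two environments running and routes traffic to one of them."""

    def __init__(self, blue_version, green_version):
        self.environments = {"blue": blue_version, "green": green_version}
        self.live = "blue"

    def switch(self):
        # Deploy and rollback are the same operation: flip the live pointer.
        self.live = "green" if self.live == "blue" else "blue"
        return self.live

    def serve(self):
        return self.environments[self.live]


router = BlueGreenRouter("v1", "v2")
router.switch()                  # cut over to the new version
after_deploy = router.serve()
router.switch()                  # problem found: roll back
after_rollback = router.serve()
```

Rolling back does not redeploy anything, since the previous version never stopped running.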

NEW QUESTION 8

Your application has an Auto Scaling group of three EC2 instances behind an Elastic Load Balancer. Your Auto Scaling group was updated with a new launch configuration that refers to an updated AMI. During the deployment, customers complained that they were receiving several errors even though all instances passed the ELB health checks. How can you prevent this from happening again?

- A. Create a new ELB and attach the Auto Scaling group to the ELB

- B. Create a new launch configuration with the updated AMI and associate it with the Auto Scaling group. Increase the size of the group to six and when instances become healthy revert to three.

- C. Manually terminate the instances with the older launch configuration.

- D. Update the launch configuration instead of updating the Auto Scaling group

Answer: B

Explanation:

An Auto Scaling group is associated with one launch configuration at a time, and you can't modify a launch configuration after you've created it. To change the launch configuration for an Auto Scaling group, you can use an existing launch configuration as the basis for a new launch configuration and then update the Auto Scaling group to use the new launch configuration.

After you change the launch configuration for an Auto Scaling group, any new instances are launched using the new configuration options, but existing instances are not affected.

Then, to ensure the new instances are launched, change the size of the Auto Scaling group to 6 and, once the new instances are launched, change it back to 3.

For more information on the instance scale-in process and Auto Scaling group termination policies, please view the following link:

• https://docs.aws.amazon.com/autoscaling/ec2/userguide/as-instance-termination.html#default-termination-policy

For more information on changing the launch configuration please see the below link:

• http://docs.aws.amazon.com/autoscaling/latest/userguide/change-launch-config.html

NEW QUESTION 9

For AWS Auto Scaling, what is the first transition state an instance enters after leaving steady state when scaling in due to health check failure or decreased load?

- A. Terminating

- B. Detaching

- C. Terminating:Wait

- D. EnteringStandby

Answer: A

Explanation:

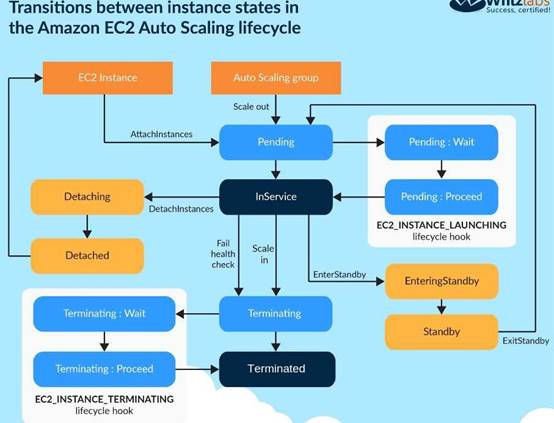

The diagram above shows the Auto Scaling lifecycle. When a scale-in happens, the first state the instance enters is Terminating.

For more information on the Auto Scaling lifecycle, please refer to the below link: http://docs.aws.amazon.com/autoscaling/latest/userguide/AutoScalingGroupLifecycle.html

NEW QUESTION 10

Which of the following services can be used to provision, in an automated way, an ECS cluster containing the following components:

1) Application Load Balancer for distributing traffic among various task instances running in EC2 Instances

2) Single task instance on each EC2 running as part of auto scaling group

3) Ability to support various types of deployment strategies

- A. SAM

- B. OpsWorks

- C. Elastic Beanstalk

- D. CodeCommit

Answer: C

Explanation:

You can create Docker environments that support multiple containers per Amazon EC2 instance with the multi-container Docker platform for Elastic Beanstalk. Elastic Beanstalk uses Amazon Elastic Container Service (Amazon ECS) to coordinate container deployments to multi-container Docker environments. Amazon ECS provides tools to manage a cluster of instances running Docker containers. Elastic Beanstalk takes care of Amazon ECS tasks including cluster creation, task definition, and execution. Please refer to the below AWS documentation: https://docs.aws.amazon.com/elasticbeanstalk/latest/dg/create_deploy_docker_ecs.html

NEW QUESTION 11

Which Auto Scaling process would be helpful when testing new instances before sending traffic to them, while still keeping them in your Auto Scaling Group?

- A. Suspend the process AZ Rebalance

- B. Suspend the process Health Check

- C. Suspend the process Replace Unhealthy

- D. Suspend the process AddToLoadBalancer

Answer: D

Explanation:

If you suspend AddToLoadBalancer, Auto Scaling launches the instances but does not add them to the load balancer or target group. If you resume the AddToLoadBalancer process, Auto Scaling resumes adding instances to the load balancer or target group when they are launched. However, Auto Scaling does not add the instances that were launched while this process was suspended. You must register those instances manually.

Option A is invalid because this just balances the number of EC2 instances in the group across the Availability Zones in the region.

Option B is invalid because this just checks the health of the instances. Auto Scaling marks an instance as unhealthy if Amazon EC2 or Elastic Load Balancing tells Auto Scaling that the instance is unhealthy.

Option C is invalid because this process just terminates instances that are marked as unhealthy and later creates new instances to replace them.

For more information on process suspension, please refer to the below AWS documentation link: http://docs.aws.amazon.com/autoscaling/latest/userguide/as-suspend-resume-processes.html
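Suspending and resuming the process can be scripted. The sketch below assumes a boto3 Auto Scaling client is passed in (for example `boto3.client("autoscaling")`), whose `suspend_processes` and `resume_processes` calls take the group name and a list of process names. The helper names, the group name `web-asg`, and the `RecordingClient` test double are hypothetical; the double lets the example run without AWS credentials.

```python
def hold_new_instances_out_of_rotation(autoscaling_client, group_name):
    """Suspend AddToLoadBalancer so newly launched instances receive no traffic."""
    autoscaling_client.suspend_processes(
        AutoScalingGroupName=group_name,
        ScalingProcesses=["AddToLoadBalancer"],
    )


def resume_load_balancer_registration(autoscaling_client, group_name):
    """Resume AddToLoadBalancer for instances launched from now on."""
    autoscaling_client.resume_processes(
        AutoScalingGroupName=group_name,
        ScalingProcesses=["AddToLoadBalancer"],
    )


class RecordingClient:
    """Test double that records calls instead of contacting AWS."""

    def __init__(self):
        self.calls = []

    def suspend_processes(self, **kwargs):
        self.calls.append(("suspend_processes", kwargs))

    def resume_processes(self, **kwargs):
        self.calls.append(("resume_processes", kwargs))


client = RecordingClient()
hold_new_instances_out_of_rotation(client, "web-asg")
resume_load_balancer_registration(client, "web-asg")
```

As the explanation notes, instances launched while the process was suspended still have to be registered with the load balancer manually after testing.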

NEW QUESTION 12

You have implemented a system to automate deployments of your configuration and application dynamically after an Amazon EC2 instance in an Auto Scaling group is launched. Your system uses a configuration management tool that works in a standalone configuration, where there is no master node. Due to the volatility of application load, new instances must be brought into service within three minutes of the launch of the instance operating system. The deployment stages take the following times to complete:

1) Installing configuration management agent: 2mins

2) Configuring instance using artifacts: 4mins

3) Installing application framework: 15mins

4) Deploying application code: 1min

What process should you use to automate the deployment using this type of standalone agent configuration?

- A. Configure your Auto Scaling launch configuration with an Amazon EC2 UserData script to install the agent, pull configuration artifacts and application code from an Amazon S3 bucket, and then execute the agent to configure the infrastructure and application.

- B. Build a custom Amazon Machine Image that includes all components pre-installed, including an agent, configuration artifacts, application frameworks, and code. Create a startup script that executes the agent to configure the system on startup.

- C. Build a custom Amazon Machine Image that includes the configuration management agent and application framework pre-installed. Configure your Auto Scaling launch configuration with an Amazon EC2 UserData script to pull configuration artifacts and application code from an Amazon S3 bucket, and then execute the agent to configure the system.

- D. Create a web service that polls the Amazon EC2 API to check for new instances that are launched in an Auto Scaling group. When it recognizes a new instance, execute a remote script via SSH to install the agent, SCP the configuration artifacts and application code, and finally execute the agent to configure the system

Answer: B

Explanation:

Since new instances must be in service within 3 minutes, the best option is to pre-bake all the components into an AMI. With the User Data option, installing and configuring the various components at launch would take far longer than 3 minutes, based on the stage times given in the question.

For more information on AMI design please see the below link:

• https://aws.amazon.com/answers/configuration-management/aws-ami-design/
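The arithmetic behind this answer can be made explicit. The sketch below adds up the stage times from the question for whichever stages are not baked into the AMI. It assumes the stages run sequentially and treats a pre-baked stage as taking zero time at launch, which ignores the (unstated) time the startup script itself needs; the names are invented for illustration.

```python
# Stage durations in minutes, taken from the question.
STAGE_MINUTES = {
    "install_agent": 2,
    "configure_instance": 4,
    "install_framework": 15,
    "deploy_code": 1,
}
LAUNCH_BUDGET_MINUTES = 3


def boot_time(prebaked):
    """Minutes spent at launch on stages that are not baked into the AMI."""
    return sum(m for stage, m in STAGE_MINUTES.items() if stage not in prebaked)


user_data_only = boot_time(prebaked=set())
agent_and_framework_baked = boot_time(prebaked={"install_agent", "install_framework"})
fully_baked = boot_time(prebaked=set(STAGE_MINUTES))
```

Doing everything at launch takes 22 minutes, and baking in only the agent and framework still leaves 5 minutes of work, so only the fully baked image fits the 3-minute budget under these assumptions.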

NEW QUESTION 13

You have a set of EC2 Instances hosting an nginx server and a web application that is used by a set of users in your organization. After a recent application version upgrade, the instance runs into technical issues and needs an immediate restart. This does not give you enough time to inspect the cause of the issue on the server. Which of the following options if implemented prior to the incident would have assisted in detecting the underlying cause of the issue?

- A. Enable detailed monitoring and check the CloudWatch metrics to see the cause of the issue.

- B. Create a snapshot of the EBS volume before restart, attach it to another instance as a volume and then diagnose the issue.

- C. Stream all the data to Amazon Kinesis and then analyze the data in real time.

- D. Install the CloudWatch Logs agent on the instance and send all the logs to CloudWatch Logs.

Answer: D

Explanation:

The AWS documentation mentions the following

You can publish log data from Amazon EC2 instances running Linux or Windows Server, and logged events from AWS CloudTrail. CloudWatch Logs can consume logs from resources in any region, but you can only view the log data in the CloudWatch console in the regions where CloudWatch Logs is supported.

Option A is invalid as detailed monitoring will only help us to get more information about the performance metrics of the instances, volumes etc and will not be able to provide full information regarding technical issues.

Option B is incorrect: a snapshot created prior to the update might have been useful, but not one created after the incident.

Option C is incorrect: the issue concerns the underlying application that handles the data, so streaming the data will not help.

For more information on Cloudwatch logs, please refer to the below link:

• http://docs.aws.amazon.com/AmazonCloudWatch/latest/logs/StartTheCWLAgent.html

NEW QUESTION 14

You have a web application running on six Amazon EC2 instances, consuming about 45% of resources on each instance. You are using auto-scaling to make sure that six instances are running at all times. The number of requests this application processes is consistent and does not experience spikes. The application is critical to your business and you want high availability at all times. You want the load to be distributed evenly between all instances. You also want to use the same Amazon Machine Image (AMI) for all instances. Which of the following architectural choices should you make?

- A. Deploy 6 EC2 instances in one availability zone and use Amazon Elastic Load Balancer.

- B. Deploy 3 EC2 instances in one region and 3 in another region and use Amazon Elastic Load Balancer.

- C. Deploy 3 EC2 instances in one availability zone and 3 in another availability zone and use Amazon Elastic Load Balancer.

- D. Deploy 2 EC2 instances in three regions and use Amazon Elastic Load Balancer.

Answer: C

Explanation:

Option A is incorrect because the question asks for high availability. With option A, if the AZ goes down then the entire application fails.

For Options B and D, the ELB is designed to run in only one region in AWS and not across multiple regions. So these options are wrong.

The right option is C.

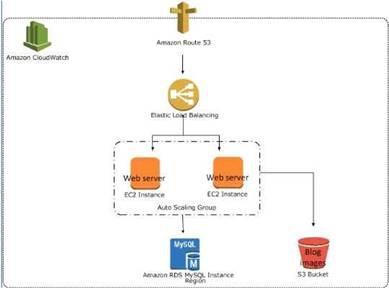

The diagram above shows an Elastic Load Balancer connected to 2 EC2 instances managed by Auto Scaling. This is an example of an elastic and scalable web tier.

By scalable we mean that the Auto Scaling process will increase or decrease the number of EC2 instances as required.

For more information on best practices for AWS Cloud applications, please visit the below URL:

• https://d0.awsstatic.com/whitepapers/AWS_Cloud_Best_Practices.pdf
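The 45% figure in the question matters for the two-AZ layout: if one zone fails, the surviving instances must absorb its load. A minimal sketch of that calculation follows, assuming load is spread evenly and no replacement instances have launched yet; the function name is hypothetical.

```python
def utilization_after_az_loss(instances_per_az, utilization_per_instance):
    """Per-instance utilization on the survivors if the largest AZ fails.

    Returns None when no instances survive.
    """
    total_load = sum(instances_per_az) * utilization_per_instance
    survivors = sum(instances_per_az) - max(instances_per_az)
    if survivors == 0:
        return None
    return total_load / survivors


single_az = utilization_after_az_loss([6], 0.45)    # option A
two_azs = utilization_after_az_loss([3, 3], 0.45)   # option C
```

With all six instances in one zone nothing survives an AZ outage, while with three per zone the remaining three run at about 90% until Auto Scaling restores the group to six.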

NEW QUESTION 15

You are a DevOps Engineer for your company. You are responsible for creating CloudFormation templates for your company. There is a requirement to ensure that an S3 bucket is created for all resources in development for logging purposes. How would you achieve this?

- A. Create separate CloudFormation templates for Development and production.

- B. Create a parameter in the CloudFormation template and then use the Condition clause in the template to create an S3 bucket if the parameter has a value of development

- C. Create an S3 bucket from before and then just provide access based on the tag value mentioned in the CloudFormation template

- D. Use the metadata section in the CloudFormation template to decide on whether to create the S3 bucket or not.

Answer: B

Explanation:

The AWS Documentation mentions the following

You might use conditions when you want to reuse a template that can create resources in different contexts, such as a test environment versus a production environment. In your template, you can add an EnvironmentType input parameter, which accepts either prod or test as inputs. For the production environment, you might include Amazon EC2 instances with certain capabilities; however, for the test environment, you want to use reduced capabilities to save money. With conditions, you can define which resources are created and how they're configured for each environment type.

For more information on CloudFormation conditions please visit the below URL: http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/conditions-section-structure.html
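A parameter plus a condition, as described in option B, can be sketched as follows. The template is built as a Python dict and serialized to JSON. The logical names `EnvironmentType`, `IsDevelopment`, and `LoggingBucket` and the allowed values are assumptions for illustration; a real template would also declare the resources that are created in every environment.

```python
import json

template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Parameters": {
        "EnvironmentType": {
            "Type": "String",
            "AllowedValues": ["development", "production"],
            "Default": "development",
        }
    },
    "Conditions": {
        # True only when the stack is launched with EnvironmentType=development.
        "IsDevelopment": {
            "Fn::Equals": [{"Ref": "EnvironmentType"}, "development"]
        }
    },
    "Resources": {
        "LoggingBucket": {
            "Type": "AWS::S3::Bucket",
            # The bucket is created only when the condition evaluates to true.
            "Condition": "IsDevelopment",
        }
    },
}

template_body = json.dumps(template, indent=2)
```

The same template can then be reused for both environments, with only the parameter value changing between stack launches.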

NEW QUESTION 16

Your company has an application hosted on an Elastic beanstalk environment. You have been instructed that whenever application changes occur and new versions need to be deployed that the fastest deployment approach is employed. Which of the following deployment mechanisms will fulfil this requirement?

- A. All at once

- B. Rolling

- C. Immutable

- D. Rolling with batch

Answer: A

Explanation:

The AWS documentation includes a table comparing the deployment time of each deployment method. All at once is the fastest because it deploys the new version to all instances simultaneously.

For more information on Elastic Beanstalk deployments, please refer to the below link: http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/using-features.deploy-existing-version.html

NEW QUESTION 17

Which of the following tools for EC2 can be used to administer instances without the need to SSH or RDP into the instance?

- A. AWS Config

- B. AWS CodePipeline

- C. Run Command

- D. EC2Config

Answer: C

Explanation:

You can use Run Command from the Amazon EC2 console to configure instances without having to log in to each instance.

For more information on the Run Command, please visit the below URL:

• http://docs.aws.amazon.com/systems-manager/latest/userguide/rc-console.html
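Run Command is also available through the API. The sketch below assumes a boto3 Systems Manager client is passed in (for example `boto3.client("ssm")`) and uses its `send_command` call with the `AWS-RunShellScript` document. The helper name, the instance ID, and the `FakeSsmClient` test double are hypothetical; the double lets the example run without AWS access.

```python
def run_shell_command(ssm_client, instance_ids, command):
    """Send a shell command to instances via Run Command and return the command ID."""
    response = ssm_client.send_command(
        InstanceIds=instance_ids,
        DocumentName="AWS-RunShellScript",
        Parameters={"commands": [command]},
    )
    return response["Command"]["CommandId"]


class FakeSsmClient:
    """Test double that mimics the shape of the send_command response."""

    def __init__(self):
        self.requests = []

    def send_command(self, **kwargs):
        self.requests.append(kwargs)
        return {"Command": {"CommandId": "fake-command-id"}}


fake = FakeSsmClient()
command_id = run_shell_command(fake, ["i-0123456789abcdef0"], "uptime")
```

No SSH key or open inbound port is involved; the command is delivered through the agent running on the instance.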

NEW QUESTION 18

You need the absolute highest possible network performance for a cluster computing application. You already selected homogeneous instance types supporting 10 gigabit enhanced networking, made sure that your workload was network bound, and put the instances in a placement group. What is the last optimization you can make?

- A. Use 9001 MTU instead of 1500 for Jumbo Frames, to raise packet body to packet overhead ratios.

- B. Segregate the instances into different peered VPCs while keeping them all in a placement group, so each one has its own Internet Gateway.

- C. Bake an AMI for the instances and relaunch, so the instances are fresh in the placement group and do not have noisy neighbors.

- D. Turn off SYN/ACK on your TCP stack or begin using UDP for higher throughput.

Answer: A

Explanation:

Jumbo frames allow more than 1500 bytes of data by increasing the payload size per packet, and thus increasing the percentage of the packet that is not packet overhead. Fewer packets are needed to send the same amount of usable data. However, outside of a given AWS region (EC2-Classic), a single VPC, or a VPC peering connection, you will experience a maximum path of 1500 MTU. VPN connections and traffic sent over an Internet gateway are limited to 1500 MTU. If packets are over 1500 bytes, they are fragmented, or they are dropped if the Don't Fragment flag is set in the IP header.

For more information on Jumbo Frames, please visit the below URL: http://docs.aws.amazon.com/AWSEC2/latest/UserGuide/network_mtu.html#jumbo_frame_instances
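The payload-to-overhead ratio can be worked out directly. The sketch below assumes 40 bytes of header per packet (a 20-byte IPv4 header plus a 20-byte TCP header, with no options) and ignores Ethernet framing; the function names and the 1,000,000-byte transfer size are chosen for illustration.

```python
import math

HEADER_BYTES = 40  # assumed: 20-byte IPv4 header + 20-byte TCP header, no options


def payload_fraction(mtu):
    """Share of each full-size packet that carries application data."""
    return (mtu - HEADER_BYTES) / mtu


def packets_needed(total_bytes, mtu):
    """Number of packets required to carry total_bytes of application data."""
    return math.ceil(total_bytes / (mtu - HEADER_BYTES))


standard = payload_fraction(1500)
jumbo = payload_fraction(9001)
packets_standard = packets_needed(1_000_000, 1500)
packets_jumbo = packets_needed(1_000_000, 9001)
```

Under these assumptions the payload share rises from roughly 97.3% to 99.6%, and the same 1,000,000 bytes need 112 packets instead of 685, which also reduces per-packet processing.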

NEW QUESTION 19

You set up a web application development environment by using a third party configuration management tool to create a Docker container that is run on local developer machines.

What should you do to ensure that the web application and supporting network storage and security infrastructure does not impact your application after you deploy into AWS for staging and production environments?

- A. Write a script using the AWS SDK or CLI to deploy the application code from version control to the local development environments, staging, and production using AWS OpsWorks.

- B. Define an AWS CloudFormation template to place your infrastructure into version control and use the same template to deploy the Docker container into Elastic Beanstalk for staging and production.

- C. Because the application is inside a Docker container, there are no infrastructure differences to be taken into account when moving from the local development environments to AWS for staging and production.

- D. Define an AWS CloudFormation template for each stage of the application deployment lifecycle - development, staging and production - and have tagging in each template to define the environment.

Answer: B

Explanation:

Elastic Beanstalk supports the deployment of web applications from Docker containers. With Docker containers, you can define your own runtime environment. You can choose your own platform, programming language, and any application dependencies (such as package managers or tools), that aren't supported by other platforms. Docker containers are self-contained and include all the configuration information and software your web application requires to run.

By using Docker with Elastic Beanstalk, you have an infrastructure that automatically handles the details of capacity provisioning, load balancing, scaling, and application health monitoring.

This seems to be more appropriate than Option D.

• https://docs.aws.amazon.com/elasticbeanstalk/latest/dg/create_deploy_docker.html

For more information on CloudFormation best practices, please visit the link: http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/best-practices.html

NEW QUESTION 20

Your team wants to begin practicing continuous delivery using CloudFormation, to enable automated builds and deploys of whole, versioned stacks or stack layers. You have a 3-tier, mission-critical system. Which of the following is NOT a best practice for using CloudFormation in a continuous delivery environment?

- A. Use the AWS CloudFormation ValidateTemplate call before publishing changes to AWS.

- B. Model your stack in one template, so you can leverage CloudFormation's state management and dependency resolution to propagate all changes.

- C. Use CloudFormation to create brand new infrastructure for all stateless resources on each push, and run integration tests on that set of infrastructure.

- D. Parametrize the template and use Mappings to ensure your template works in multiple Regions.

Answer: B

Explanation:


Some of the best practices for Cloudformation are

• Create Nested Stacks

As your infrastructure grows, common patterns can emerge in which you declare the same components in each of your templates. You can separate out these common components and create dedicated templates for them. That way, you can mix and match different templates but use nested stacks to create a single, unified stack. Nested stacks are stacks that create other stacks. To create nested stacks, use the AWS::CloudFormation::Stack resource in your template to reference other templates.

• Reuse Templates

After you have your stacks and resources set up, you can reuse your templates to replicate your infrastructure in multiple environments. For example, you can create environments for development, testing, and production so that you can test changes before implementing them into production. To make templates reusable, use the parameters, mappings, and conditions sections so that you can customize your stacks when you create them. For example, for your development environments, you can specify a lower-cost instance type compared to your production environment, but all other configurations and settings remain the same.

For more information on CloudFormation best practices, please visit the below URL: http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/best-practices.html

NEW QUESTION 21

One of your engineers has written a web application in the Go Programming language and has asked your DevOps team to deploy it to AWS. The application code is hosted on a Git repository.

What are your options? (Select Two)

- A. Create a new AWS Elastic Beanstalk application and configure a Go environment to host your application. Using Git, check out the latest version of the code. Once the local repository for Elastic Beanstalk is configured, use the "eb create" command to create an environment and then use the "eb deploy" command to deploy the application.

- B. Write a Dockerfile that installs the Go base image and uses Git to fetch your application. Create a new AWS OpsWorks stack that contains a Docker layer that uses the Dockerrun.aws.json file to deploy your container and then use the Dockerfile to automate the deployment.

- C. Write a Dockerfile that installs the Go base image and fetches your application using Git. Create a new AWS Elastic Beanstalk application and use this Dockerfile to automate the deployment.

- D. Write a Dockerfile that installs the Go base image and fetches your application using Git. Create an AWS CloudFormation template that creates and associates an AWS::EC2::Instance resource type with an AWS::EC2::Container resource type.

Answer: AC

Explanation:

OpsWorks works with Chef recipes and not with Docker containers, so Option B is invalid. There is no AWS::EC2::Container resource for CloudFormation, so Option D is invalid.

Below is the documentation on Elastic Beanstalk and Docker

Elastic Beanstalk supports the deployment of web applications from Docker containers. With Docker containers, you can define your own runtime environment. You can choose your own platform, programming language, and any application dependencies (such as package managers or tools), that aren't supported by other platforms. Docker containers are self-contained and include all the configuration information and software your web application requires to run.

For more information on Elastic Beanstalk and Docker, please visit the link: http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/create_deploy_docker.html

https://docs.aws.amazon.com/elasticbeanstalk/latest/dg/eb-cli3-getting-started.html

https://docs.aws.amazon.com/elasticbeanstalk/latest/dg/eb3-cli-git.html

NEW QUESTION 22

You are a DevOps Engineer for your company. There is a requirement to log each time an instance is scaled in or scaled out from an existing Auto Scaling group. Which of the following steps can be implemented to fulfil this requirement? Each step forms part of the solution.

- A. Create a Lambda function which will write the event to CloudWatch Logs

- B. Create a CloudWatch event which will trigger the Lambda function.

- C. Create an SQS queue which will write the event to CloudWatch Logs

- D. Create a CloudWatch event which will trigger the SQS queue.

Answer: AB

Explanation:

The AWS documentation mentions the following

You can run an AWS Lambda function that logs an event whenever an Auto Scaling group launches or terminates an Amazon EC2 instance and whether the launch or terminate event was successful.

For more information on configuring Lambda with CloudWatch Events for this scenario, please visit the URL:

• http://docs.aws.amazon.com/AmazonCloudWatch/latest/events/LogASGroupState.html
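A Lambda function for this purpose can be very small, since anything a Python Lambda prints is written to CloudWatch Logs. The handler below is a minimal sketch: the `sample_event` is a hand-written, abbreviated stand-in for the event that a CloudWatch Events rule on the `aws.autoscaling` source would deliver, and the group name and instance ID are made up.

```python
import json


def lambda_handler(event, context):
    """Log which instance was launched or terminated, and in which group."""
    detail = event.get("detail", {})
    record = {
        "event": event.get("detail-type"),
        "group": detail.get("AutoScalingGroupName"),
        "instance": detail.get("EC2InstanceId"),
    }
    # Output printed by a Lambda function ends up in CloudWatch Logs.
    print(json.dumps(record))
    return record


# Abbreviated, hypothetical event for local testing.
sample_event = {
    "source": "aws.autoscaling",
    "detail-type": "EC2 Instance Launch Successful",
    "detail": {
        "AutoScalingGroupName": "web-asg",
        "EC2InstanceId": "i-0123456789abcdef0",
    },
}
result = lambda_handler(sample_event, None)
```

The CloudWatch Events rule from option B supplies the trigger, and the function from option A does the logging.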
