Realistic Google Professional-Machine-Learning-Engineer Preparation Online

Examcollection offers free demo for Professional-Machine-Learning-Engineer exam. "Google Professional Machine Learning Engineer", also known as Professional-Machine-Learning-Engineer exam, is a Google Certification. This set of posts, Passing the Google Professional-Machine-Learning-Engineer exam, will help you answer those questions. The Professional-Machine-Learning-Engineer Questions & Answers covers all the knowledge points of the real exam. 100% real Google Professional-Machine-Learning-Engineer exams and revised by experts!

Online Google Professional-Machine-Learning-Engineer free dumps demo Below:

NEW QUESTION 1

You are an ML engineer in the contact center of a large enterprise. You need to build a sentiment analysis tool that predicts customer sentiment from recorded phone conversations. You need to identify the best approach to building a model while ensuring that the gender, age, and cultural differences of the customers who called the contact center do not impact any stage of the model development pipeline and results. What should you do?

- A. Extract sentiment directly from the voice recordings

- B. Convert the speech to text and build a model based on the words

- C. Convert the speech to text and extract sentiments based on the sentences

- D. Convert the speech to text and extract sentiment using syntactical analysis

Answer: C

NEW QUESTION 2

You developed an ML model with Al Platform, and you want to move it to production. You serve a few thousand queries per second and are experiencing latency issues. Incoming requests are served by a load balancer that distributes them across multiple Kubeflow CPU-only pods running on Google Kubernetes Engine (GKE). Your goal is to improve the serving latency without changing the underlying infrastructure. What should you do?

- A. Significantly increase the max_batch_size TensorFlow Serving parameter

- B. Switch to the tensorflow-model-server-universal version of TensorFlow Serving

- C. Significantly increase the max_enqueued_batches TensorFlow Serving parameter

- D. Recompile TensorFlow Serving using the source to support CPU-specific optimizations Instruct GKE to choose an appropriate baseline minimum CPU platform for serving nodes

Answer: A

NEW QUESTION 3

You work for a large hotel chain and have been asked to assist the marketing team in gathering predictions for a targeted marketing strategy. You need to make predictions about user lifetime value (LTV) over the next 30 days so that marketing can be adjusted accordingly. The customer dataset is in BigQuery, and you are preparing the tabular data for training with AutoML Tables. This data has a time signal that is spread across multiple columns. How should you ensure that AutoML fits the best model to your data?

- A. Manually combine all columns that contain a time signal into an array Allow AutoML to interpret this array appropriatelyChoose an automatic data split across the training, validation, and testing sets

- B. Submit the data for training without performing any manual transformations Allow AutoML to handle the appropriatetransformations Choose an automatic data split across the training, validation, and testing sets

- C. Submit the data for training without performing any manual transformations, and indicate an appropriate column as the Time column Allow AutoML to split your data based on the time signal provided, and reserve the more recent data for the validation and testing sets

- D. Submit the data for training without performing any manual transformations Use the columns that have a time signal to manually split your data Ensure that the data in your validation set is from 30 days after the data in your training set and that the data in your testing set is from 30 days after your validation set

Answer: D

NEW QUESTION 4

You are an ML engineer at a large grocery retailer with stores in multiple regions. You have been asked to create an inventory prediction model. Your models features include region, location, historical demand, and seasonal popularity. You want the algorithm to learn from new inventory data on a daily basis. Which algorithms should you use to build the model?

- A. Classification

- B. Reinforcement Learning

- C. Recurrent Neural Networks (RNN)

- D. Convolutional Neural Networks (CNN)

Answer: B

NEW QUESTION 5

You are an ML engineer at a global shoe store. You manage the ML models for the company's website. You are asked to build a model that will recommend new products to the user based on their purchase behavior and similarity with other users. What should you do?

- A. Build a classification model

- B. Build a knowledge-based filtering model

- C. Build a collaborative-based filtering model

- D. Build a regression model using the features as predictors

Answer: C

NEW QUESTION 6

You are developing a Kubeflow pipeline on Google Kubernetes Engine. The first step in the pipeline is to issue a query against BigQuery. You plan to use the results of that query as the input to the next step in your pipeline. You want to achieve this in the easiest way possible. What should you do?

- A. Use the BigQuery console to execute your query and then save the query results Into a new BigQuery table.

- B. Write a Python script that uses the BigQuery API to execute queries against BigQuery Execute this script as the first step in your Kubeflow pipeline

- C. Use the Kubeflow Pipelines domain-specific language to create a custom component that uses the Python BigQuery client library to execute queries

- D. Locate the Kubeflow Pipelines repository on GitHub Find the BigQuery Query Component, copy that component's URL, and use it to load the component into your pipelin

- E. Use the component to execute queries against BigQuery

Answer: A

NEW QUESTION 7

You work on a growing team of more than 50 data scientists who all use Al Platform. You are designing a strategy to organize your jobs, models, and versions in a clean and scalable way. Which strategy should you choose?

- A. Set up restrictive I AM permissions on the Al Platform notebooks so that only a single user or group can access a given instance.

- B. Separate each data scientist's work into a different project to ensure that the jobs, models, and versions created by each data scientist are accessible only to that user.

- C. Use labels to organize resources into descriptive categorie

- D. Apply a label to each created resource so that users can filter the results by label when viewing or monitoring the resources

- E. Set up a BigQuery sink for Cloud Logging logs that is appropriately filtered to capture information about Al Platform resource usage In BigQuery create a SQL view that maps users to the resources they are using.

Answer: B

NEW QUESTION 8

You are responsible for building a unified analytics environment across a variety of on-premises data marts. Your company is experiencing data quality and security challenges when integrating data across the servers, caused by the use of a wide range of disconnected tools and temporary solutions. You need a fully managed, cloud-native data integration service that will lower the total cost of work and reduce repetitive work. Some members on your team prefer a codeless interface for building Extract, Transform, Load (ETL) process. Which service should you use?

- A. Dataflow

- B. Dataprep

- C. Apache Flink

- D. Cloud Data Fusion

Answer: D

NEW QUESTION 9

You work for a toy manufacturer that has been experiencing a large increase in demand. You need to build an ML model to reduce the amount of time spent by quality control inspectors checking for product defects. Faster defect detection is a priority. The factory does not have reliable Wi-Fi. Your company wants to implement the new ML model as soon as possible. Which model should you use?

- A. AutoML Vision model

- B. AutoML Vision Edge mobile-versatile-1 model

- C. AutoML Vision Edge mobile-low-latency-1 model

- D. AutoML Vision Edge mobile-high-accuracy-1 model

Answer: A

NEW QUESTION 10

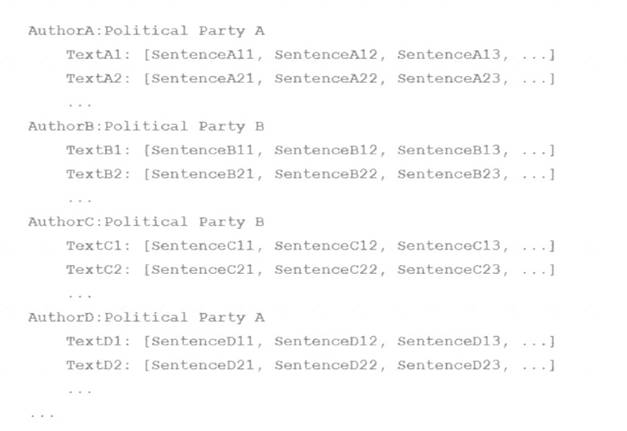

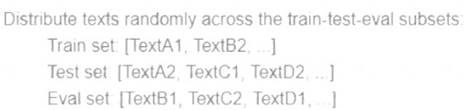

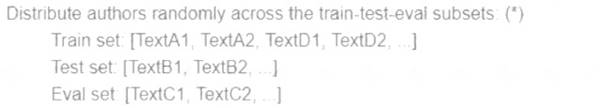

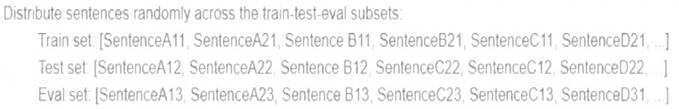

Your team is working on an NLP research project to predict political affiliation of authors based on articles they have written. You have a large training dataset that is structured like this:

A)

B)

C)

D)

- A. Option A

- B. Option B

- C. Option C

- D. Option D

Answer: D

NEW QUESTION 11

You are an ML engineer at a bank that has a mobile application. Management has asked you to build an ML-based biometric authentication for the app that verifies a customer's identity based on their fingerprint. Fingerprints are considered highly sensitive personal information and cannot be downloaded and stored into the bank databases. Which learning strategy should you recommend to train and deploy this ML model?

- A. Differential privacy

- B. Federated learning

- C. MD5 to encrypt data

- D. Data Loss Prevention API

Answer: B

NEW QUESTION 12

You manage a team of data scientists who use a cloud-based backend system to submit training jobs. This system has become very difficult to administer, and you want to use a managed service instead. The data scientists you work with use many different frameworks, including Keras, PyTorch, theano. Scikit-team, and custom libraries. What should you do?

- A. Use the Al Platform custom containers feature to receive training jobs using any framework

- B. Configure Kubeflow to run on Google Kubernetes Engine and receive training jobs through TFJob

- C. Create a library of VM images on Compute Engine; and publish these images on a centralized repository

- D. Set up Slurm workload manager to receive jobs that can be scheduled to run on your cloud infrastructure.

Answer: D

NEW QUESTION 13

You have a demand forecasting pipeline in production that uses Dataflow to preprocess raw data prior to model training and prediction. During preprocessing, you employ Z-score normalization on data stored in BigQuery and write it back to BigQuery. New training data is added every week. You want to make the process more efficient by minimizing computation time and manual intervention. What should you do?

- A. Normalize the data using Google Kubernetes Engine

- B. Translate the normalization algorithm into SQL for use with BigQuery

- C. Use the normalizer_fn argument in TensorFlow's Feature Column API

- D. Normalize the data with Apache Spark using the Dataproc connector for BigQuery

Answer: B

NEW QUESTION 14

Your team needs to build a model that predicts whether images contain a driver's license, passport, or credit card. The data engineering team already built the pipeline and generated a dataset composed of 10,000 images with driver's licenses, 1,000 images with passports, and 1,000 images with credit cards. You now have to train a model with the following label map: ['driversjicense', 'passport', 'credit_card']. Which loss function should you use?

- A. Categorical hinge

- B. Binary cross-entropy

- C. Categorical cross-entropy

- D. Sparse categorical cross-entropy

Answer: B

NEW QUESTION 15

As the lead ML Engineer for your company, you are responsible for building ML models to digitize scanned customer forms. You have developed a TensorFlow model that converts the scanned images into text and stores them in Cloud Storage. You need to use your ML model on the aggregated data collected at the end of each day with minimal manual intervention. What should you do?

- A. Use the batch prediction functionality of Al Platform

- B. Create a serving pipeline in Compute Engine for prediction

- C. Use Cloud Functions for prediction each time a new data point is ingested

- D. Deploy the model on Al Platform and create a version of it for online inference.

Answer: D

NEW QUESTION 16

You have written unit tests for a Kubeflow Pipeline that require custom libraries. You want to automate the execution of unit tests with each new push to your development branch in Cloud Source Repositories. What should you do?

- A. Write a script that sequentially performs the push to your development branch and executes the unit tests on Cloud Run

- B. Using Cloud Build, set an automated trigger to execute the unit tests when changes are pushed to your development branch.

- C. Set up a Cloud Logging sink to a Pub/Sub topic that captures interactions with Cloud Source Repositories Configure a Pub/Sub trigger for Cloud Run, and execute the unit tests on Cloud Run.

- D. Set up a Cloud Logging sink to a Pub/Sub topic that captures interactions with Cloud Source Repositorie

- E. Execute the unit tests using a Cloud Function that is triggered when messages are sent to the Pub/Sub topic

Answer: B

NEW QUESTION 17

You have a functioning end-to-end ML pipeline that involves tuning the hyperparameters of your ML model using Al Platform, and then using the best-tuned parameters for training. Hypertuning is taking longer than expected and is delaying the downstream processes. You want to speed up the tuning job without significantly compromising its effectiveness. Which actions should you take?

Choose 2 answers

- A. Decrease the number of parallel trials

- B. Decrease the range of floating-point values

- C. Set the early stopping parameter to TRUE

- D. Change the search algorithm from Bayesian search to random search.

- E. Decrease the maximum number of trials during subsequent training phases.

Answer: DE

NEW QUESTION 18

You recently joined a machine learning team that will soon release a new project. As a lead on the project, you are asked to determine the production readiness of the ML components. The team has already tested features and data, model development, and infrastructure. Which additional readiness check should you recommend to the team?

- A. Ensure that training is reproducible

- B. Ensure that all hyperparameters are tuned

- C. Ensure that model performance is monitored

- D. Ensure that feature expectations are captured in the schema

Answer: B

NEW QUESTION 19

......

P.S. Dumps-hub.com now are offering 100% pass ensure Professional-Machine-Learning-Engineer dumps! All Professional-Machine-Learning-Engineer exam questions have been updated with correct answers: https://www.dumps-hub.com/Professional-Machine-Learning-Engineer-dumps.html (60 New Questions)